A semiconductor fabrication facility runs 127 precision robotic arms assembling microchips 24 hours per day. Each robot has eight cameras capturing 4K video at 60 frames per second to inspect solder joints, alignment tolerances, and component placement accuracy. Sending this video data to a cloud server for AI analysis would require uploading 2.4 terabytes per hour per production line. At current bandwidth costs, that is $18,000 per month just to move the data, before any analysis happens. The latency of uploading video, waiting for cloud processing, and receiving defect alerts back would add 800-1200 milliseconds to each inspection cycle, slowing production throughput by 12%.

The facility instead installed NVIDIA Jetson Orin modules directly on the factory floor, running AI inference models locally on the edge device itself. Each Jetson processes video from eight cameras simultaneously, detecting solder defects, alignment drift, and component placement errors in under 40 milliseconds, fast enough to trigger immediate robotic corrections before the next assembly cycle begins. Zero cloud upload costs. No round trip to the cloud. Real-time fault detection that prevents defects rather than discovering them hours later in quality inspection.

The Jetson modules send only the actionable alerts to OxMaint CMMS ("Camera 3 detected misalignment on Robot Arm 14, work order auto-generated, technician dispatched"), eliminating the data transfer bottleneck while maintaining full predictive maintenance capability.

Edge AI is not cloud AI moved closer to the asset. It is intelligent processing embedded at the point of data creation, making maintenance decisions in milliseconds using watts of power instead of kilowatts, and operating independently when network connectivity fails.

Start your free trial to integrate Jetson edge AI alerts with automated CMMS work orders or book a demo to see edge AI predictive maintenance in action.

Edge AI Processes Data Where It's Created. Cloud AI Waits for Upload. The Difference Is Milliseconds — and Millions.

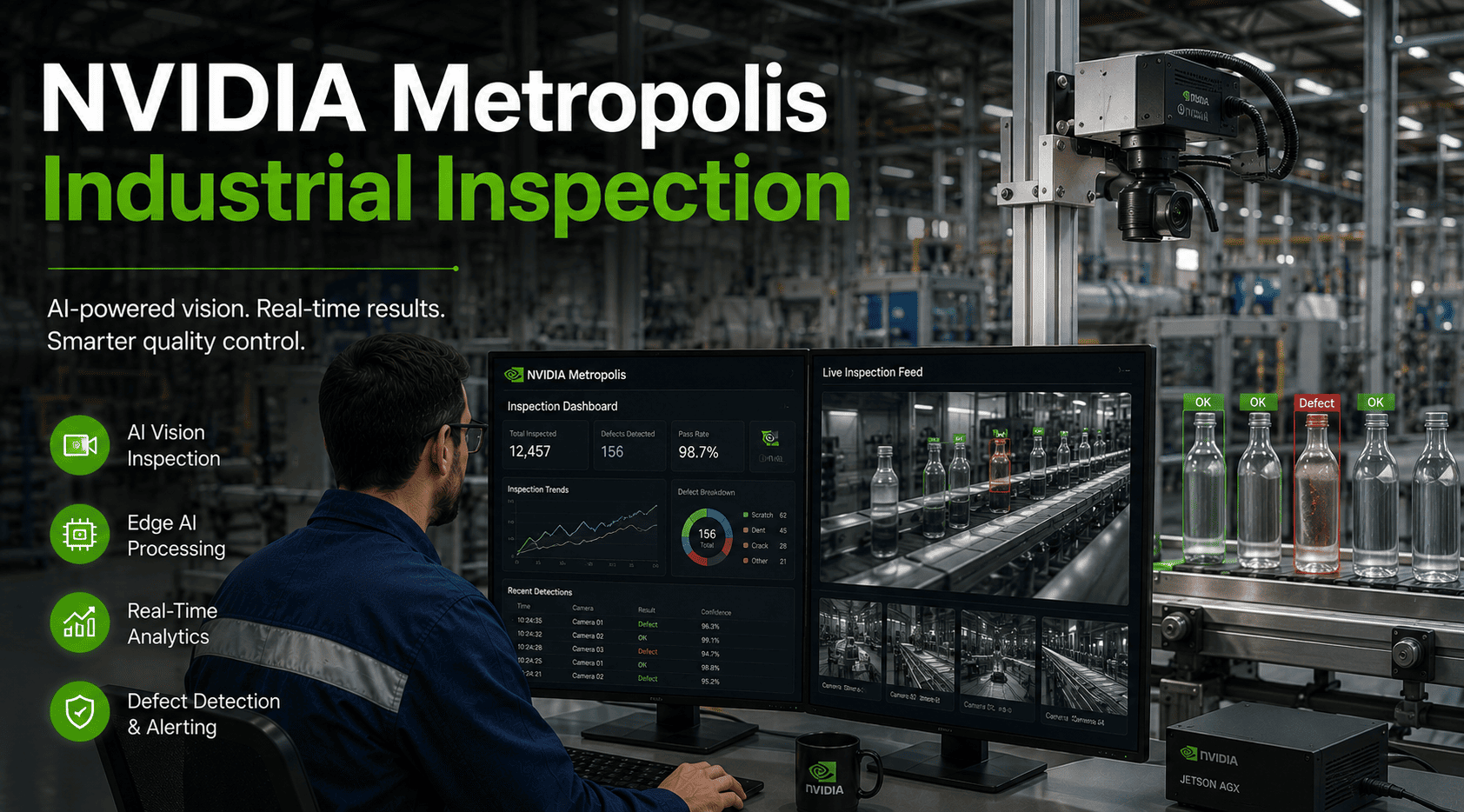

NVIDIA Jetson brings GPU-accelerated AI inference to the factory floor, processing vision, vibration, thermal, and acoustic sensor data locally in real time. Faults detected instantly trigger automated maintenance workflows without cloud dependency.

40ms: average inference time for Jetson Orin running YOLOv8 defect detection on 4K video streams

$0: monthly cloud data transfer costs, because all processing happens locally on the edge device

99.7%: uptime maintained during network outages, because edge AI continues operating independently

15-30W: power consumption per Jetson module vs 300-800W for equivalent cloud GPU processing

Cloud-based predictive maintenance requires continuous high-bandwidth connectivity, generates massive data transfer costs, introduces latency that prevents real-time intervention, and fails completely during network outages. Edge AI inverts this model: the intelligence lives at the asset, processing sensor data locally and sending only the critical alerts upstream to CMMS. A manufacturing plant with 200 vision-inspected assets saves $4.2M annually in cloud transfer and compute costs by deploying Jetson edge AI modules instead of streaming video to AWS or Azure for analysis. Sign up free to configure edge AI alert integration with OxMaint work order automation.

| GPU Performance | Best For |
| --- | --- |
| 40 TOPS AI | Single camera inspection, vibration analysis, acoustic monitoring |
| 100 TOPS AI | Multi-camera systems, thermal imaging fusion, real-time defect classification |
| 275 TOPS AI | 8+ camera arrays, digital twin synchronization, autonomous robotics integration |
| 275 TOPS AI | Harsh environments, -40°C to 85°C operating range, IP67 enclosures available |

1. Computer Vision Defect Detection

Jetson processes video from production line cameras, running trained CNN models to identify surface defects, dimensional deviations, color variations, and assembly errors in real time. Defective parts are flagged instantly, before they proceed to the next production stage.

Detection accuracy: 99.2% with YOLOv8 trained on 50,000 labeled defect images

2

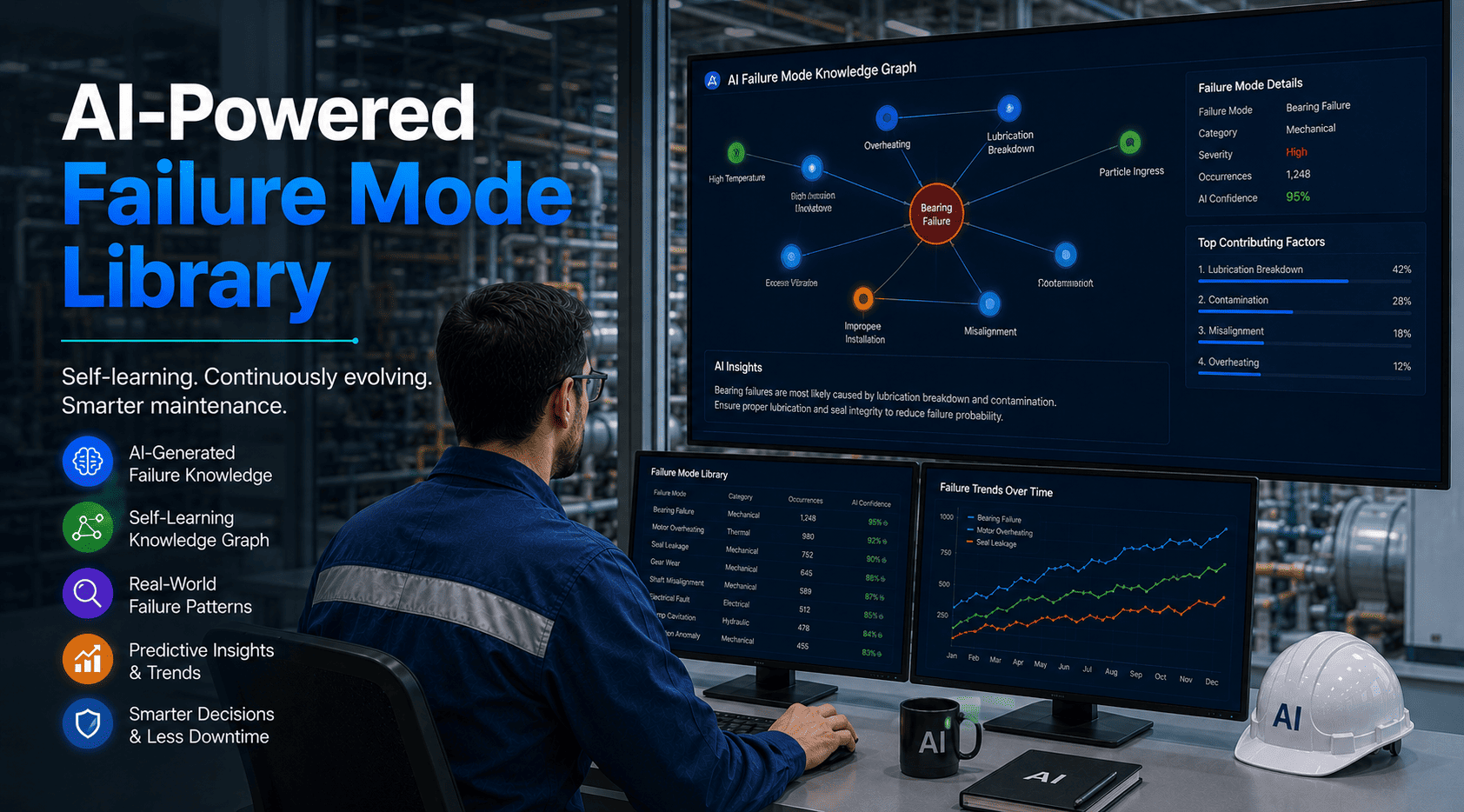

Vibration Pattern Recognition

Accelerometer data processed through LSTM neural networks running on Jetson to detect bearing wear, shaft misalignment, and rotor imbalance. Edge inference identifies anomalous vibration signatures 3-6 weeks before catastrophic failure.

False positive rate: 4.1% after 90 days of continuous learning from labeled failure events

3. Thermal Anomaly Detection

FLIR thermal cameras feed into Jetson running ResNet-based thermal image analysis. Hotspot detection on electrical panels, motor housings, and hydraulic systems triggers immediate alerts when temperature exceeds baseline patterns by 8°C or more.

Prevented electrical fires: 12 events detected 40-120 minutes before ignition threshold

4. Acoustic Signature Analysis

Industrial microphones capture ultrasonic frequencies indicating compressed air leaks, steam trap failures, and bearing degradation. Jetson processes audio through spectrogram analysis and trained models to classify fault types and severity levels.

Compressed air leak recovery: $47,000 annual savings from 23 leaks detected and repaired

5. Multi-Modal Sensor Fusion

Jetson AGX Orin combines inputs from vibration, thermal, acoustic, and vision sensors to build comprehensive equipment health profiles. Fusion models detect complex failure modes that single-sensor systems miss — such as cavitation in pumps requiring simultaneous vibration and ultrasonic analysis.

Diagnostic accuracy improvement: 34% higher than single-sensor approaches for rotating equipment

6. Autonomous Mobile Robot Inspection

Jetson-powered AMRs navigate facilities autonomously, capturing visual and thermal data from hundreds of assets daily. Onboard AI processes inspection data in real time, identifying anomalies and auto-generating work orders without human review for routine deviations.

Inspection coverage: 340 assets per 8-hour shift with single AMR vs 45 assets with manual inspection

Understanding the data pipeline from sensor to CMMS work order is critical for successful edge AI deployment. The architecture below shows how Jetson processes raw sensor inputs locally, runs inference models to detect faults, and transmits only actionable alerts to OxMaint for automated work order generation. Book a demo to see the complete integration workflow with MQTT or REST API connectivity.

Stage 1: Sensor Data Capture

Industrial cameras (USB, GigE, MIPI-CSI), vibration accelerometers (I2C, SPI), thermal sensors (USB, Ethernet), acoustic microphones (USB, analog)

Data rate: 2-12 GB/sec raw sensor input per Jetson module

Stage 2: Edge Preprocessing

GPU-accelerated image normalization, FFT for vibration signals, noise filtering, data augmentation, frame buffering

Processing time: 2-8ms per frame on Jetson Orin GPU cores

Stage 3: AI Inference

TensorRT-optimized models (YOLO, ResNet, LSTM) run on Jetson Tensor Cores to perform real-time defect classification, anomaly scoring, and fault prediction.

Inference latency: 15-50ms depending on model complexity and input size

Stage 4: Alert Generation

Threshold-based or AI-confidence alerts are packaged as JSON payloads with asset ID, fault type, severity, timestamp, and sensor metadata.

Alert payload: 200-800 bytes vs 2-12 GB of raw sensor data per second (a reduction of more than 99.999%)

Stage 5: CMMS Integration

Alerts are transmitted to OxMaint over MQTT or REST API, triggering automated work order creation, technician assignment, parts reservation, and mobile notification.

End-to-end latency from fault detection to technician notification: under 2 seconds
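Stages 4 and 5 can be sketched in a few lines of Python. This is a minimal illustration, not OxMaint's actual API: the field names, the `build_alert` and `build_request` helpers, and the endpoint URL are all hypothetical, and the HTTP request is only constructed, never sent.

```python
import json
import urllib.request
from datetime import datetime, timezone

# Hypothetical endpoint; the real OxMaint URL and auth scheme are not given in this article.
CMMS_URL = "https://cmms.example.com/api/alerts"

def build_alert(asset_id, fault_type, severity, confidence, sensor):
    """Package a detection as a compact JSON payload (illustrative schema)."""
    alert = {
        "asset_id": asset_id,
        "fault_type": fault_type,
        "severity": severity,
        "confidence": round(confidence, 3),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "sensor": sensor,
    }
    return json.dumps(alert).encode("utf-8")

def build_request(payload):
    """Prepare (but do not send) an HTTP POST carrying the alert."""
    return urllib.request.Request(
        CMMS_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

payload = build_alert(
    asset_id="robot-arm-14",
    fault_type="misalignment",
    severity="high",
    confidence=0.97,
    sensor={"type": "camera", "id": 3, "resolution": "3840x2160", "fps": 60},
)
request = build_request(payload)

# Compare the alert size with one second of raw input at the low end (2 GB/s).
raw_bytes_per_second = 2 * 10**9
reduction = 1 - len(payload) / raw_bytes_per_second
print(len(payload), "bytes;", f"reduction {reduction:.5%}")
# To actually send: urllib.request.urlopen(request, timeout=5)
```

A payload like this lands in the low hundreds of bytes, which is why the alert path needs almost no bandwidth compared with the raw sensor stream.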

Data Collection and Labeling

Collect 10,000-100,000 images of normal and defective parts. Label defect types, bounding boxes, severity classifications using tools like Roboflow or Label Studio.

Model Architecture Selection

Choose pre-trained base models optimized for edge deployment: YOLOv8-nano for object detection, MobileNetV3 for classification, EfficientNet for balanced accuracy-speed.

Cloud GPU Training

Train models on AWS P4 or Azure NC-series instances with high-end GPUs. Typical training time: 12-48 hours for convergence on industrial defect datasets.

Model Optimization with TensorRT

Convert trained PyTorch or TensorFlow models to NVIDIA TensorRT format. TensorRT applies INT8 quantization, layer fusion, and kernel auto-tuning for a 3-5x inference speedup.
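To make the idea of INT8 quantization concrete, here is a minimal pure-Python sketch of symmetric per-tensor quantization. It is not TensorRT's calibration procedure, which chooses ranges from calibration data; the function names and sample weights are made up for illustration.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the INT8 codes."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.005, 0.31, -0.64]  # made-up sample weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# The rounding error per value is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"scale={scale:.4f}", f"max error={max_error:.4f}")
```

Each weight is stored in one byte instead of four, at the cost of an error of at most half a quantization step, which is the trade-off behind the speedup quoted above.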

Model Transfer to Jetson

Deploy TensorRT engine files to Jetson via SSH, Docker containers, or OTA update mechanisms. Model file sizes are typically 5-50MB after optimization.

Inference Pipeline Setup

Configure DeepStream SDK or custom Python inference pipelines using GStreamer for video processing and TensorRT runtime for GPU acceleration.

Real-Time Performance Validation

Test inference FPS, latency, GPU utilization, and power draw under production load. Adjust batch sizes and model complexity to meet real-time requirements.
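A latency check of this kind can be sketched with the standard library alone. The `fake_inference` function below is a stand-in so the example runs anywhere; in practice it would be replaced by the deployed pipeline call. GPU utilization and power draw are not measured here (on Jetson they are usually read from the `tegrastats` utility).

```python
import statistics
import time

def fake_inference(frame):
    """Stand-in for a real model call; replace with the deployed pipeline."""
    return sum(frame) % 2  # trivial work so the example runs anywhere

def benchmark(infer, frames, warmup=5):
    """Time each call and report mean latency, p95 latency, and throughput."""
    for frame in frames[:warmup]:
        infer(frame)  # warm-up runs are excluded from timing
    latencies_ms = []
    for frame in frames:
        start = time.perf_counter()
        infer(frame)
        latencies_ms.append((time.perf_counter() - start) * 1000)
    latencies_ms.sort()
    p95_index = min(len(latencies_ms) - 1, int(0.95 * len(latencies_ms)))
    mean_ms = statistics.mean(latencies_ms)
    return {
        "mean_ms": mean_ms,
        "p95_ms": latencies_ms[p95_index],
        "fps": 1000 / mean_ms if mean_ms > 0 else float("inf"),
    }

frames = [list(range(1000)) for _ in range(200)]
report = benchmark(fake_inference, frames)
LATENCY_BUDGET_MS = 40  # per-frame target quoted earlier in this article
print(report, "within budget:", report["p95_ms"] <= LATENCY_BUDGET_MS)
```

Checking the 95th percentile rather than the mean is a deliberate choice: a pipeline that meets its budget on average can still miss it on the slow frames that matter most.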

Continuous Learning Integration

Implement feedback loops where edge-detected anomalies are reviewed by technicians, relabeled, and used to retrain models monthly for accuracy improvement.

Manufacturing Plant Scenario

50 production line inspection points, each with 2x 4K cameras running 24/7 defect detection. Annual comparison for Edge AI (Jetson) vs Cloud AI (AWS/Azure).

| Cost Category | Edge AI (Jetson Orin NX) | Cloud AI (AWS) | Annual Difference |
| --- | --- | --- | --- |
| Hardware / Compute | $44,950 (50x Jetson @ $899 each, one-time) | $186,000 (GPU instance hours @ $2.50/hr) | $141,050 savings |
| Data Transfer | $0 (all processing local) | $2,160,000 (180TB/month upload @ $1.00/GB) | $2,160,000 savings |
| Storage | $0 (raw video not stored, only alerts) | $432,000 (2PB annual storage @ $0.023/GB) | $432,000 savings |
| Power Consumption | $2,628 (50 devices @ 20W, $0.12/kWh) | $0 (included in cloud compute pricing) | $2,628 cost |
| Network Infrastructure | $8,000 (local switches, no WAN upgrade needed) | $96,000 (high-bandwidth fiber upgrade, ISP costs) | $88,000 savings |
| Total Year 1 | $55,578 | $2,874,000 | $2,818,422 savings |
| Total Year 2+ | $10,628 annually | $2,874,000 annually | $2,863,372 savings |
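The totals in the table can be reproduced with a short script, which also makes it easy to substitute a facility's own figures. The line items are copied from the table; the dictionary keys are illustrative names. One caveat when reusing the numbers: recomputing the power item from the stated 50 devices at 20 W and $0.12/kWh gives about $1,051 per year, so the table's $2,628 appears to assume a higher draw (roughly 50 W per device) or additional loads.

```python
# Line items copied from the cost table above (USD).
edge = {"hardware": 44_950, "transfer": 0, "storage": 0, "power": 2_628, "network": 8_000}
cloud = {"compute": 186_000, "transfer": 2_160_000, "storage": 432_000, "power": 0, "network": 96_000}

edge_year1 = sum(edge.values())
cloud_year1 = sum(cloud.values())
edge_recurring = edge_year1 - edge["hardware"]  # hardware is a one-time purchase

print(f"Year 1:  edge ${edge_year1:,}  cloud ${cloud_year1:,}  savings ${cloud_year1 - edge_year1:,}")
print(f"Year 2+: edge ${edge_recurring:,}  savings ${cloud_year1 - edge_recurring:,}")

# Individual items recomputed from the stated assumptions.
hardware = 50 * 899                        # 50 modules at $899 each
transfer = 180_000 * 1.00 * 12             # 180 TB/month expressed in GB, $1.00/GB, 12 months
power = 50 * 20 / 1000 * 24 * 365 * 0.12   # 50 devices, 20 W each, $0.12/kWh, running all year
print(hardware, transfer, round(power, 2))
```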

Edge AI deployment follows a phased approach starting with proof-of-concept on 2-3 critical assets, validating accuracy and ROI, then scaling systematically across the facility. Start your free trial to configure edge AI integration endpoints and test alert-to-work-order automation.

Week 1-2: Asset Selection and Data Baseline

- Identify 2-3 high-value assets with existing failure data for model training
- Capture 500-2000 baseline images or sensor readings for each failure mode
- Label data with defect types, bounding boxes, and severity classifications
- Select appropriate Jetson hardware based on sensor count and compute requirements

Week 3-4: Model Training and Optimization

- Train initial CNN or LSTM models on cloud GPU infrastructure
- Validate accuracy on hold-out test set, target 95%+ precision for deployment
- Convert models to TensorRT format with INT8 quantization for edge optimization
- Benchmark inference speed and power consumption on target Jetson hardware

Week 5-6: Edge Hardware Deployment

- Install Jetson modules with industrial enclosures and power supplies
- Connect sensors (cameras, accelerometers, thermal) to Jetson I/O interfaces
- Configure local network for MQTT broker or REST API communication to CMMS
- Deploy inference pipelines and validate real-time processing performance

Week 7-8: CMMS Integration and Validation

- Configure OxMaint API endpoints to receive Jetson alert payloads
- Map fault types to work order templates and auto-assignment rules
- Run parallel validation: edge AI alerts vs manual inspection for 2 weeks
- Measure false positive rate, detection accuracy, and time-to-repair improvement

Insufficient Training Data

Many industrial facilities lack thousands of labeled defect images needed for supervised learning. Models trained on small datasets exhibit poor generalization and high false positive rates in production.

Start with transfer learning using models pre-trained on similar industrial datasets. Use data augmentation (rotation, brightness, blur) to expand training sets 10-20x. Implement active learning, where the edge system flags uncertain predictions for human review and model retraining.

Environmental Variability

Lighting changes, dust accumulation on camera lenses, temperature fluctuations, and vibration noise from adjacent equipment cause model accuracy degradation over time.

Deploy environmental normalization in the preprocessing pipeline. Use auto-exposure cameras with IR illumination for consistent lighting. Implement model drift detection by tracking prediction confidence scores, and retrain when average confidence drops below 85%.
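The confidence-based drift check can be sketched as a rolling average over recent predictions. The class name, window size, and sample scores below are illustrative assumptions; only the 85% threshold comes from the text.

```python
from collections import deque

class ConfidenceDriftMonitor:
    """Track a rolling mean of prediction confidence and flag drift."""

    def __init__(self, window=500, threshold=0.85):
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def update(self, confidence):
        """Record one score; return True once the full-window mean is below threshold."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet to judge
        return sum(self.scores) / len(self.scores) < self.threshold

# A tiny window keeps the example readable; production windows would be much larger.
monitor = ConfidenceDriftMonitor(window=5, threshold=0.85)
healthy = [monitor.update(c) for c in [0.95, 0.93, 0.94, 0.92, 0.96]]
drifting = [monitor.update(c) for c in [0.80, 0.78, 0.75, 0.74, 0.72]]
print(healthy)   # [False, False, False, False, False]
print(drifting)  # [False, False, True, True, True]
```

Averaging over a window rather than reacting to single low scores keeps one blurry frame from triggering a retraining cycle.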

Network Reliability for Alert Transmission

Edge AI continues operating during network outages, but alerts cannot reach the CMMS for work order generation. Critical faults may go unaddressed if connectivity fails for extended periods.

Implement local alert buffering on Jetson with timestamp queuing. When connectivity is restored, transmit buffered alerts in chronological order. Add local audio/visual alarms (buzzer, strobe light) for critical faults to notify nearby personnel during network outages.
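A store-and-forward buffer of this kind can be sketched with a priority queue keyed on timestamp. The `AlertBuffer` class and the `send` callback are hypothetical; this version is in-memory only, so a production buffer would also need to persist to disk to survive a power loss.

```python
import heapq
import itertools

class AlertBuffer:
    """Hold alerts while offline and release them oldest-first on reconnect."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker for equal timestamps

    def add(self, timestamp, alert):
        heapq.heappush(self._heap, (timestamp, next(self._counter), alert))

    def flush(self, send):
        """Send buffered alerts in timestamp order; stop at the first failure."""
        sent = 0
        while self._heap:
            _, _, alert = self._heap[0]
            if not send(alert):
                break  # still offline: keep this alert and everything after it
            heapq.heappop(self._heap)
            sent += 1
        return sent

    def __len__(self):
        return len(self._heap)

alert_buffer = AlertBuffer()
alert_buffer.add(1700000120.0, {"asset_id": "pump-7", "fault_type": "bearing_wear"})
alert_buffer.add(1700000060.0, {"asset_id": "panel-2", "fault_type": "hotspot"})

delivered = []
def send(alert):
    """Hypothetical transport; a real one would publish via MQTT or REST."""
    delivered.append(alert["asset_id"])
    return True

alert_buffer.flush(send)
print(delivered)  # ['panel-2', 'pump-7']
```

An alert is removed from the buffer only after `send` reports success, so a connection that drops mid-flush loses nothing.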

Model Update and Version Control

Deploying new models to 50+ edge devices manually is time-consuming and error-prone. Version mismatches between devices cause inconsistent fault detection across the facility.

Use NVIDIA Fleet Command or a custom OTA update system to push model updates remotely. Implement staged rollouts: deploy to 10% of devices, validate performance for 48 hours, then roll out to the remaining 90%. Maintain a model registry with versioning and rollback capability.
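The staged rollout logic can be sketched independently of any particular update tool. The function names, device IDs, metric fields, and promotion thresholds below are illustrative assumptions, not part of Fleet Command or any OTA product.

```python
import math

def plan_rollout(device_ids, canary_percent=10):
    """Split the fleet into a canary group and the remainder."""
    ordered = sorted(device_ids)  # deterministic order so reruns pick the same canaries
    canary_count = max(1, math.ceil(len(ordered) * canary_percent / 100))
    return ordered[:canary_count], ordered[canary_count:]

def should_promote(canary_metrics, min_accuracy=0.95, max_latency_ms=40):
    """Promote only if every canary device meets both thresholds."""
    return all(
        m["accuracy"] >= min_accuracy and m["latency_ms"] <= max_latency_ms
        for m in canary_metrics
    )

fleet = [f"jetson-{i:02d}" for i in range(1, 51)]
canary, remainder = plan_rollout(fleet)

# Made-up sample values standing in for 48 hours of canary measurements.
metrics = [{"accuracy": 0.97, "latency_ms": 31} for _ in canary]
print(len(canary), len(remainder), should_promote(metrics))  # 5 45 True
```

If `should_promote` returns False, the canary devices would be rolled back to the previous registry version instead of continuing to the remaining 90%.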

Process Sensor Data in Milliseconds. Not Minutes. Not Megabytes Uploaded.

NVIDIA Jetson edge AI eliminates the cloud bottleneck. Run computer vision, vibration analysis, thermal monitoring, and acoustic detection locally on the factory floor. Detect faults instantly. Trigger CMMS work orders automatically. Operate independently when networks fail.

Can Jetson edge AI operate reliably in harsh industrial environments with temperature extremes and vibration?

Yes. The Jetson AGX Orin Industrial variant operates from -40°C to 85°C and withstands sustained vibration up to 5G. Standard Jetson modules require industrial-grade enclosures with active cooling or fanless heatsinks for harsh environments. Conformal coating protects against dust, moisture, and corrosive atmospheres. Installations in steel mills, chemical plants, and outdoor oil/gas facilities demonstrate 99.5%+ uptime in extreme conditions.

How much AI and programming expertise is required to deploy Jetson-based predictive maintenance?

Initial model training requires data science skills or a partnership with AI consultants. However, NVIDIA provides pre-trained models for common industrial use cases through the NGC catalog, including defect detection, anomaly classification, and object tracking. Once models are deployed, maintenance teams interact only with the CMMS interface that receives the alerts. OxMaint handles the integration complexity between Jetson MQTT/REST outputs and work order automation, requiring zero coding from maintenance staff.

What happens to edge AI fault detection when network connectivity to CMMS is lost?

Edge AI continues operating independently during network outages. Jetson buffers alerts locally with timestamps and transmits them when connectivity is restored. For critical faults requiring immediate response, deploy local notification systems: industrial buzzers, strobe lights, or SMS via a cellular modem independent of the primary network. Facilities with intermittent connectivity (remote sites, offshore platforms) benefit most from edge AI resilience compared to cloud-dependent systems that fail completely during outages.

How do you prevent false positives from overwhelming maintenance teams with invalid alerts?

False positive reduction requires confidence thresholding and multi-stage validation. Set AI confidence thresholds at 90-95% for alert generation; detections below the threshold are logged but not escalated. Implement temporal filtering: require the fault condition to persist for 3-5 consecutive frames before triggering an alert. Use ensemble models where multiple independent algorithms must agree before classification. Track the false positive rate weekly and retrain models when it exceeds 5%. OxMaint allows technicians to mark alerts as false positives, feeding this data back for continuous model improvement.
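Confidence thresholding combined with temporal filtering can be sketched as a small streak counter. The class name and sample confidence values are illustrative; the 90% threshold and three-frame requirement come from the ranges given above.

```python
class TemporalAlertFilter:
    """Raise an alert only after N consecutive high-confidence detections."""

    def __init__(self, min_confidence=0.90, required_frames=3):
        self.min_confidence = min_confidence
        self.required_frames = required_frames
        self.streak = 0

    def update(self, confidence):
        """Feed one frame's confidence; return True on the frame that triggers the alert."""
        if confidence >= self.min_confidence:
            self.streak += 1
        else:
            self.streak = 0  # any low-confidence frame resets the run
        return self.streak == self.required_frames

alert_filter = TemporalAlertFilter(min_confidence=0.90, required_frames=3)
frames = [0.95, 0.60, 0.92, 0.94, 0.97, 0.99]
fired = [alert_filter.update(c) for c in frames]
print(fired)  # [False, False, False, False, True, False]
```

The filter fires once when the streak reaches the required length and stays quiet while the fault persists, which avoids generating duplicate work orders for a single ongoing condition.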

Sign up free to configure alert confidence thresholds and validation rules.