A Tier III data center in Singapore lost $840,000 in a single weekend after a CRAC unit failure triggered an uncontrolled thermal event across two adjacent halls — the root cause was a condenser coil fouling pattern that had been building for 11 weeks with no alert, no work order, and no preventive action. Sign in to OxMaint to deploy predictive maintenance AI across your CRAC units, chillers, cooling loops, and humidity systems — or book a demo to see how real-time anomaly detection works across a live data center cooling topology.

Blog · Data Center HVAC · Predictive Maintenance AI

Data Center HVAC Predictive Maintenance Software: Protecting Uptime at the Cooling Layer

CRAC units, chillers, cooling towers, pumps, humidity systems, and hot/cold aisle containment — every component in your data center cooling chain is a single point of failure. Predictive maintenance AI detects degradation patterns weeks before they become thermal events.

P1

CRAC-07 — Supply air temp rising 2.4°C above baseline · Compressor current draw +18% · Work order auto-generated

P2

Chiller-02 — Approach temperature trending +0.8°C over 6 days · Condenser fouling probability 71%

PM

CW Pump-3 — Vibration baseline deviation 14% · Bearing inspection due in 9 days

OK

Cooling Tower CT-01 — All parameters nominal · Next PM: 14 days

$740K

Average cost of a data center thermal event

68%

Of CRAC failures preceded by detectable anomalies 2–6 weeks earlier

40%

Of data center energy consumed by HVAC cooling systems

99.999%

Tier IV uptime target — impossible without predictive HVAC maintenance

Why Reactive HVAC Maintenance Fails in Data Centers

Data center cooling is not a commercial HVAC problem — it is a precision infrastructure problem. A hotel losing its HVAC for two hours creates discomfort. A data center losing cooling for 11 minutes can trigger automatic IT load shedding, SLA breaches, and regulatory notifications. The tolerance window is measured in degrees and minutes, not hours and days. Reactive maintenance — responding to failures after they occur — is structurally incompatible with that operating requirement.

The compounding problem is that data center cooling systems fail progressively, not suddenly. A chiller that fails on a Tuesday morning began showing measurable degradation signatures — rising approach temperatures, increasing compressor differential pressures, declining coefficient of performance — weeks or months earlier. Those signatures are detectable. What has been missing is the software layer to detect them continuously across a full cooling topology and route corrective maintenance actions before the degradation becomes a failure event.

01

CRAC Unit Failures

Computer Room Air Conditioning units cycle 24/7/365 at load profiles that change with IT workload. Condenser coil fouling, refrigerant undercharge, compressor degradation, and fan belt wear accumulate undetected in reactive maintenance models. A single CRAC failure in a non-redundant zone creates a thermal runway event within 8–15 minutes at typical rack densities.

Predictive signal: Supply/return delta-T deviation, compressor current anomaly, superheat trending

02

Chiller Plant Degradation

Centrifugal and screw chillers serving data center cooling plants degrade through fouled heat exchangers, refrigerant contamination, oil carryover, and compressor wear. Each degradation mode reduces COP and increases energy consumption before triggering alarms. A chiller running at 85% efficiency is consuming 18% more power than its rated spec — an invisible cost without performance trending.

Predictive signal: Approach temperature rise, condenser pressure trend, kW/ton degradation

03

Cooling Tower Fouling

Cooling tower performance directly governs condenser water supply temperature, which governs chiller COP. Scale buildup, biological contamination, and fill media degradation raise leaving water temperature by 1–3°C — forcing chillers to work harder and increasing compressor wear. Cooling tower fouling is slow, invisible, and expensive if undiscovered until the next maintenance cycle.

Predictive signal: Approach temperature increase, blowdown conductivity trend, fan amp deviation

04

Chilled Water Pump Failures

Chilled water and condenser water pumps operate continuously with minimal redundancy. Bearing wear, seal degradation, and impeller erosion produce vibration signatures and flow deviations weeks before failure. A pump failure that cuts cooling flow to a computer room at 40kW/rack density creates a thermal emergency within minutes. Flow monitoring and vibration trending detect pump degradation at the earliest mechanical stages.

Predictive signal: Vibration baseline deviation, differential pressure trend, motor current anomaly

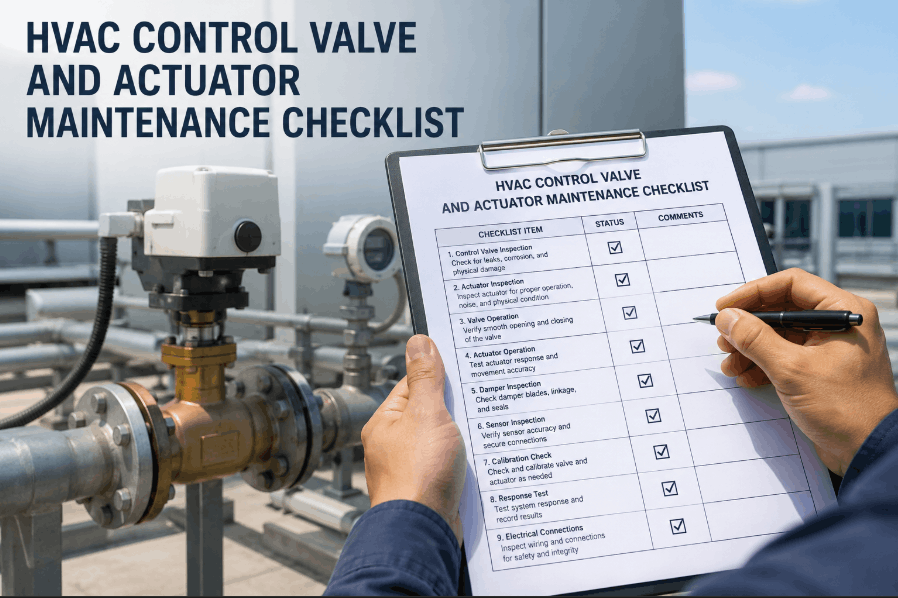

The Cooling Asset Hierarchy — What OxMaint Monitors and Why

Data center cooling operates as a hierarchical system — outdoor heat rejection feeds the condenser water loop, which feeds the chiller plant, which feeds the chilled water distribution, which feeds perimeter or in-row CRAC/CRAH units, which manage rack-level airflow and temperature. A predictive maintenance system that monitors only the end-point cooling units while ignoring upstream plant performance is monitoring symptoms, not causes.

| Cooling Asset |

Key Failure Modes |

Predictive Signals Monitored |

Failure Impact |

OxMaint PM Trigger |

| CRAC / CRAH Units |

Coil fouling, refrigerant loss, fan failure, compressor wear |

Supply air temp delta-T, compressor current, superheat, airflow |

Critical — direct rack thermal risk |

Real-time AI anomaly + runtime hours |

| Chillers (centrifugal / screw) |

Heat exchanger fouling, refrigerant contamination, compressor wear |

Approach temp, COP trend, kW/ton, leaving water temp |

Critical — loss of entire cooling plant |

Performance deviation + runtime hours |

| Cooling Towers |

Scale fouling, biological growth, fill media degradation, fan wear |

Leaving water temp approach, conductivity, fan current, static pressure |

High — forces chiller operating point degradation |

Approach temp trend + 90-day calendar |

| CW / CHW Pumps |

Bearing wear, seal failure, impeller erosion, motor degradation |

Vibration baseline, differential pressure, motor current, flow rate |

Critical — loss of fluid distribution |

Vibration deviation + runtime hours |

| Precision Humidifiers |

Scale buildup on heating elements, electrode degradation, drain blockage |

Steam output vs setpoint, electrode current, drain cycle frequency |

Medium — humidity excursions cause ESD and corrosion risk |

Output deviation + calendar-based cleaning |

| Air Handling Units |

Filter clogging, coil fouling, belt/bearing wear, damper failure |

Static pressure rise, supply temp, motor current, airflow volume |

Medium — zone temperature uniformity degradation |

Static pressure trigger + filter runtime |

Cooling System Availability

99.97%

Target: 99.95% · 3 CRAC units on PM this week

Active P1/P2 Cooling Alerts

3

2 CRAC anomalies · 1 chiller approach temp trending

PM Compliance — Cooling Assets

94%

38 of 40 cooling assets current on PM schedule

Cooling Energy vs Baseline

−11%

PUE improvement from optimised chiller plant maintenance

Predictive Maintenance AI

Connect Your Cooling Assets to OxMaint in 48 Hours

OxMaint connects to existing BMS, SCADA, and sensor infrastructure without replacing your control systems. The AI layer begins detecting cooling anomalies as soon as your first 30 days of historical data is ingested — no hardware changes, no production interruption.

Before vs After: Predictive vs Reactive Data Center HVAC Maintenance

The performance gap between reactive and predictive maintenance programmes in data center cooling is not marginal — it is structural. These comparisons are drawn from documented OxMaint deployments across co-location and enterprise data center environments.

Unplanned HVAC Downtime Events per Year

Mean Time to Detect Cooling Anomaly

Annual Cooling HVAC Maintenance Cost (per MW IT load)

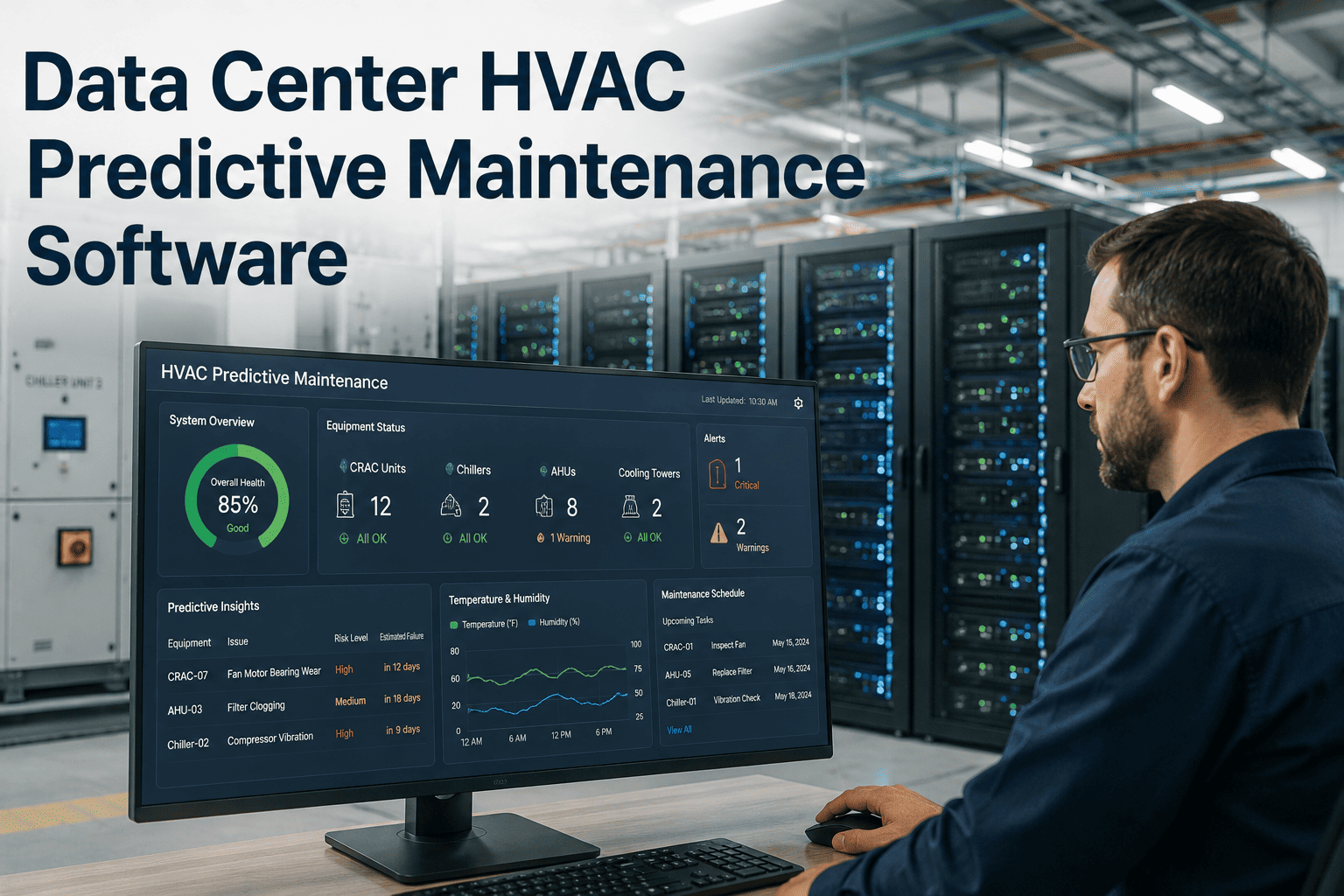

How OxMaint Predictive AI Works Across the Cooling Topology

Day 1–2

BMS Integration and Asset Registry

OxMaint connects to your BMS, SCADA, or direct sensor outputs via BACnet, Modbus, or REST API. All cooling assets — CRAC units, chillers, cooling towers, pumps, AHUs, humidifiers — are registered with nameplate data, capacity, design operating parameters, and criticality classification. No hardware replacement required. Integration validation completed within 48 hours.

Day 3–30

Baseline Calibration and Normal Operating Envelope

The AI engine ingests 30 days of operating data per asset and establishes individual baselines — not generic thresholds. A chiller serving a high-density colocation hall has a different normal operating signature than one serving a lightly loaded enterprise data center. Baselines account for diurnal load cycles, seasonal conditioning variations, and IT workload fluctuations. False positive rates drop below 4% after the calibration period.

Day 30 onwards

Continuous Anomaly Detection and Work Order Generation

OxMaint monitors 20–60 parameters per cooling asset simultaneously. When a deviation from the calibrated baseline is detected and confirmed across correlated parameters — not just a single threshold breach — the system generates a prioritized work order with the anomaly description, affected asset, recommended corrective action, and urgency classification. P1 alerts route directly to the on-call engineer via SMS and app notification within 2 minutes of detection.

Ongoing

Failure Probability Scoring and Maintenance Window Planning

Each monitored asset receives a continuously updated failure probability score — a percentage likelihood of failure within a defined window (7, 14, or 30 days). Assets with scores above configurable thresholds surface in the maintenance planning dashboard with suggested service windows that avoid peak IT load periods. Facilities managers schedule corrective maintenance during low-risk windows rather than responding to failures during production peaks.

Predictive Insights — Live Sample

CRAC-07 — Hall B West

Failure Probability: 78% within 14 days

Supply air temp delta-T: +2.4°C above 30-day baseline

Compressor current draw: +18% above baseline

Condenser fan vibration: elevated — bearing wear pattern

Recommended: Condenser coil cleaning + compressor inspection · Suggested window: Saturday 02:00–06:00

Chiller-02 — Central Plant

Failure Probability: 41% within 30 days

Approach temperature: +0.8°C trend over 6 consecutive days

kW/ton efficiency: declined 9% vs 60-day baseline

Condenser water return temp: 0.6°C above design at equivalent load

Recommended: Condenser tube brushing + water treatment review · Suggested window: Next scheduled downtime

CW Pump-3 — Secondary Loop

Failure Probability: 23% within 30 days

Bearing vibration: 14% above established baseline (trending)

Motor current: +6% vs baseline at equivalent flow setpoint

Differential pressure: 4% reduction — possible impeller wear

Recommended: Bearing inspection + lubrication · Suggested window: 9-day planning horizon

PUE Impact: How Predictive Maintenance Reduces Data Center Energy Consumption

Power Usage Effectiveness (PUE) is the primary energy efficiency metric for data centers. A PUE of 1.0 is theoretical perfection — all power goes to IT equipment. Every fraction above 1.0 represents overhead energy consumed by cooling, lighting, UPS losses, and other infrastructure. Cooling systems are the dominant PUE driver — typically accounting for 35–50% of total overhead energy.

Predictive maintenance reduces PUE through two mechanisms. First, it keeps cooling equipment operating at its designed efficiency — a chiller running with clean heat exchangers and optimally charged refrigerant consumes 15–22% less power than the same chiller with degraded performance that hasn't yet triggered a fault alarm. Second, it reduces the frequency and duration of emergency operating modes — when a primary CRAC unit fails, its redundant pair operates at higher load, increasing total cooling energy consumption for the duration of the event.

| Maintenance Model |

Average PUE |

Cooling Energy Overhead |

Annual Cooling Cost (10 MW facility) |

vs Predictive Model |

| Reactive only |

1.72 |

42% of total energy |

$3.1M |

+$840K per year |

| Calendar-based PM |

1.58 |

37% of total energy |

$2.7M |

+$440K per year |

| Predictive (OxMaint) |

1.41 |

29% of total energy |

$2.26M |

Baseline |

"

The data center industry talks about five-nines uptime as a cooling standard, but most facilities are managing their cooling infrastructure with maintenance practices borrowed from commercial office HVAC. That mismatch — between the criticality of the asset and the sophistication of the maintenance programme — is what produces thermal events. The cooling failure modes in a 10MW data center are predictable, detectable, and preventable. A CRAC unit that is going to fail in three weeks will tell you in its sensor data today. The only question is whether you have the software infrastructure to listen. Predictive maintenance AI applied to data center cooling assets closes that gap structurally — it's not about better technicians or more frequent inspections, it's about continuous monitoring of the right parameters with the right anomaly detection logic running continuously against every asset simultaneously.

Every Cooling Asset. Monitored Continuously. Failures Prevented Before They Happen.

OxMaint Predictive Maintenance AI connects to your existing BMS and sensor infrastructure to monitor CRAC units, chillers, cooling towers, pumps, and humidity systems — detecting degradation patterns weeks before they become thermal events. Protect your uptime SLAs without replacing your control infrastructure.

Frequently Asked Questions

What cooling assets does OxMaint predictive maintenance cover in a data center?

OxMaint monitors all primary cooling assets: CRAC and CRAH units, centrifugal and screw chillers, cooling towers, chilled water and condenser water pumps, precision humidifiers, air handling units, and perimeter cooling units. Each asset class has its own anomaly detection model trained on relevant failure modes — not a single generic threshold applied across all equipment types. Assets are registered individually with their design operating parameters, and the AI establishes per-asset baselines rather than relying on manufacturer-generic alarm setpoints.

Sign in to OxMaint to configure your data center cooling asset registry.

How does OxMaint connect to an existing data center BMS without disrupting operations?

OxMaint integrates with BMS platforms from Siemens Desigo, Johnson Controls Metasys, Schneider EcoStruxure, Honeywell EBI, and others using standard protocols — BACnet/IP, Modbus TCP, OPC-UA, and REST API. The integration is read-only by default — OxMaint receives data streams but does not send control commands. No changes to BMS logic or network architecture are required. Most data center integrations are operational within 24–48 hours of credential provisioning.

Book a technical integration demo to see the BMS connection workflow.

How does predictive maintenance reduce data center PUE?

Cooling equipment that operates with degraded heat exchangers, undercharged refrigerant, or worn components consumes more energy than its design spec — often 10–22% more — without triggering any fault alarm. This invisible inefficiency directly increases PUE. OxMaint detects these degradation patterns through performance trending and routes corrective work orders before the degradation becomes severe. Across OxMaint deployments in data center environments, average cooling energy reductions of 11–18% have been documented — translating to PUE improvements of 0.08–0.19 points.

Start your free trial to see the PUE analytics dashboard.

Can OxMaint manage CRAC unit PM schedules alongside predictive anomaly detection?

Yes — OxMaint manages both predictive triggers (AI-detected anomalies that generate immediate work orders) and scheduled preventive maintenance (time-based and runtime-based PM for filter changes, coil cleaning, refrigerant checks, and electrical inspections). Both work order streams appear in the same dashboard, with priority scoring that ensures P1 predictive alerts are not delayed behind scheduled PM work. CRAC units in high-availability zones can be assigned redundancy-aware scheduling so PM work is always staggered rather than performed simultaneously on N+1 pairs.

Book a demo to see CRAC unit PM configuration in OxMaint.

What is the typical false positive rate for OxMaint cooling anomaly detection in data centers?

During the 30-day calibration period, false positive rates are typically 8–12% as baselines are being established. After the calibration period, the AI model's false positive rate drops below 4% for cooling asset anomaly detection. The system uses multi-parameter confirmation — an alert is generated only when deviations are detected across correlated parameters simultaneously, not from a single sensor value crossing a threshold. This correlation logic prevents nuisance alerts from sensor drift, transient load spikes, or seasonal temperature variations from generating unnecessary work orders.

Sign in to OxMaint to explore anomaly detection configuration settings.