When cooling fails in a commercial office, people get warm. When cooling fails in a data center, servers overheat within minutes, hardware sustains permanent damage, workloads crash mid-process, and SLA penalties trigger automatically. 97% of large enterprises report downtime costs exceeding $100,000 per hour — and cooling failures are among the top causes. The difference between 99.99% and 99.999% uptime is not better hardware. It is better, more disciplined maintenance of every CRAC unit, chiller, cooling tower, and CDU in your facility. This guide gives your team the exact framework to achieve it. Start managing critical cooling maintenance on Oxmaint — free trial, no credit card.

Data Center HVAC and Cooling System Maintenance: Protecting Uptime-Critical Infrastructure

CMMS + Predictive Maintenance

CRAC · CRAH · Chilled Water · In-Row

2025 Data

Power and cooling issues account for approximately 70% of significant data center outage incidents. This is not a hardware problem — it is a maintenance discipline problem. Here is how leading operators build maintenance programs that protect five-nines availability.

$300K+

per hour, average enterprise downtime cost

70%

of major outages caused by power & cooling failures

41%

of enterprises report $1M+ cost per outage hour

15–20%

HVAC energy savings from preventive maintenance programs

Cooling System Overview

The Four Cooling Architectures That Protect Your Data Hall

Not all data center cooling is the same. The maintenance program you build must match the architecture you operate. Each system type has distinct failure modes, distinct service intervals, and distinct consequences when maintenance is deferred.

CRAC Units

Computer Room Air Conditioners

Use direct expansion (DX) refrigerant and a compressor. Self-contained units ideal for smaller to mid-size facilities under 200 kW load. Higher maintenance frequency on compressor and refrigerant circuit. Suitable for N+1 redundancy configurations.

CRAH Units

Computer Room Air Handlers

Use chilled water coils instead of refrigerant — more energy efficient, ideal for large-scale facilities over 200 kW. No compressor on unit; maintenance focus shifts to chilled water quality, pump systems, and control valve operation.

In-Row Cooling

High-Density Rack Cooling

Units sit directly between server racks, delivering cold air precisely at the point of heat generation. Modular and scalable but requires careful maintenance access planning. Common in AI and GPU compute environments with rack densities above 20 kW.

Chilled Water Plant

Central Chilled Water Systems

Central chiller plant providing chilled water to CRAH units across the facility. Most energy efficient at scale. Requires water treatment program, pump maintenance, and expansion vessel monitoring. Single point of failure risk demands N+1 or 2N chiller redundancy.

ASHRAE Environmental Standards

Operating Parameters Your Maintenance Program Must Protect

Every PM task in a data center exists to protect one thing: the environmental envelope inside the data hall. ASHRAE A1 class — the standard most enterprise facilities target — defines tight operating bands that must be maintained continuously, not just during business hours.

Server Inlet Temperature

64.4°F – 80.6°F

18°C – 27°C · ASHRAE recommended range (the A1 class allowable range is wider, 15°C – 32°C)

Breach triggers thermal throttling, then emergency shutdown

Relative Humidity

40% – 60% RH

45–55% optimal operating band

Too low: static discharge risk. Too high: condensation, corrosion

Air Filtration

MERV 11–14

Minimum for sensitive electronics

Particle buildup on circuit boards causes unexpected failures

Temperature Stability

±1°C/minute

Max rate of change — ASHRAE guideline

Rapid swings trigger thermal stress on solder joints and ICs
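The bands above can be turned into a simple automated check. The following is a minimal sketch, assuming readings arrive as timestamped inlet temperature and humidity samples; the `Reading` fields and the function name are hypothetical, and the limits are copied from the cards above rather than from any particular BMS.

```python
from dataclasses import dataclass

# Limits taken from the parameter cards above; adjust to your own site policy.
INLET_TEMP_C = (18.0, 27.0)    # server inlet temperature band
HUMIDITY_RH = (40.0, 60.0)     # relative humidity band
MAX_RATE_C_PER_MIN = 1.0       # maximum temperature rate of change


@dataclass
class Reading:
    minute: float          # minutes since the start of the log (hypothetical field)
    inlet_temp_c: float
    humidity_rh: float


def envelope_violations(prev: Reading, cur: Reading) -> list[str]:
    """Return the envelope limits that the current reading breaks."""
    issues = []
    if not INLET_TEMP_C[0] <= cur.inlet_temp_c <= INLET_TEMP_C[1]:
        issues.append("inlet temperature out of band")
    if not HUMIDITY_RH[0] <= cur.humidity_rh <= HUMIDITY_RH[1]:
        issues.append("humidity out of band")
    elapsed = cur.minute - prev.minute
    if elapsed > 0:
        # Rate of change between two consecutive samples, in degrees C per minute.
        rate = abs(cur.inlet_temp_c - prev.inlet_temp_c) / elapsed
        if rate > MAX_RATE_C_PER_MIN:
            issues.append("temperature changing too fast")
    return issues
```

An empty list means the sample sits inside the envelope; any entries could be attached to an inspection work order.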

PM Frequency Schedule

The Data Center Cooling PM Schedule: Daily to Annual

Data center cooling cannot run on commercial maintenance schedules. When cooling fails, the time to thermal shutdown is measured in minutes, not hours. This is the maintenance frequency framework used by facilities targeting five-nines uptime, mapped by interval for CRAC and CRAH systems.

Daily

Discharge temperature verification on all active cooling units

BMS alarm review — temperature, humidity, and flow alerts

Confirm standby unit status and readiness

Weekly

Filter differential pressure checks — flag units approaching replacement threshold

Refrigerant pressure verification on DX (CRAC) units

Condenser coil visual inspection for blockage or fouling

Water treatment test for chilled water systems (pH, conductivity, inhibitor)

Monthly

Compressor current draw analysis — trend against baseline (CRAC units)

Fan motor vibration check and bearing condition assessment

Humidifier system inspection — steam canister, water pan, mineral scale

Standby unit rotation into active service — test under real load

Condensate drain flush and pump operation test

Quarterly

Full refrigerant circuit leak test — EPA Section 608 compliance documentation

Deep condenser and evaporator coil cleaning for thermal efficiency

Control valve calibration and sensor verification (CRAH proportional valves)

Belt tension adjustment on units with belt-driven fans — maintain factory parameters

Redundancy failover test — verify backup unit capacity under controlled conditions

Annual

Full system commissioning and capacity verification against rated output

Infrared thermal scan of all electrical connections and power panels

Ductwork inspection and cleaning — airflow path integrity

Emergency shutdown system test with documented results

Full-load capacity verification of all redundant cooling assets
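As an illustration of how an interval-based schedule like the one above can be evaluated, here is a minimal sketch. It assumes each task is stored as a (name, interval, last completed date) tuple; the day counts for monthly and quarterly are rough approximations, and none of this reflects how any specific CMMS stores its schedules.

```python
from datetime import date, timedelta

# Interval lengths in days. Monthly and quarterly values are approximations.
INTERVAL_DAYS = {
    "daily": 1,
    "weekly": 7,
    "monthly": 30,
    "quarterly": 91,
    "annual": 365,
}


def is_due(interval: str, last_done: date, today: date) -> bool:
    """A task is due once its full interval has elapsed since last completion."""
    return today - last_done >= timedelta(days=INTERVAL_DAYS[interval])


def due_tasks(tasks, today: date) -> list[str]:
    """tasks: iterable of (task_name, interval, last_done) tuples."""
    return [name for name, interval, last_done in tasks
            if is_due(interval, last_done, today)]


if __name__ == "__main__":
    example = [
        ("BMS alarm review", "daily", date(2025, 6, 1)),
        ("Filter differential pressure check", "weekly", date(2025, 5, 28)),
        ("Refrigerant circuit leak test", "quarterly", date(2025, 4, 1)),
    ]
    print(due_tasks(example, date(2025, 6, 2)))
```

Keeping the interval on each task, rather than one calendar per unit, makes it straightforward to attach the same task list to every CRAC or CRAH asset.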

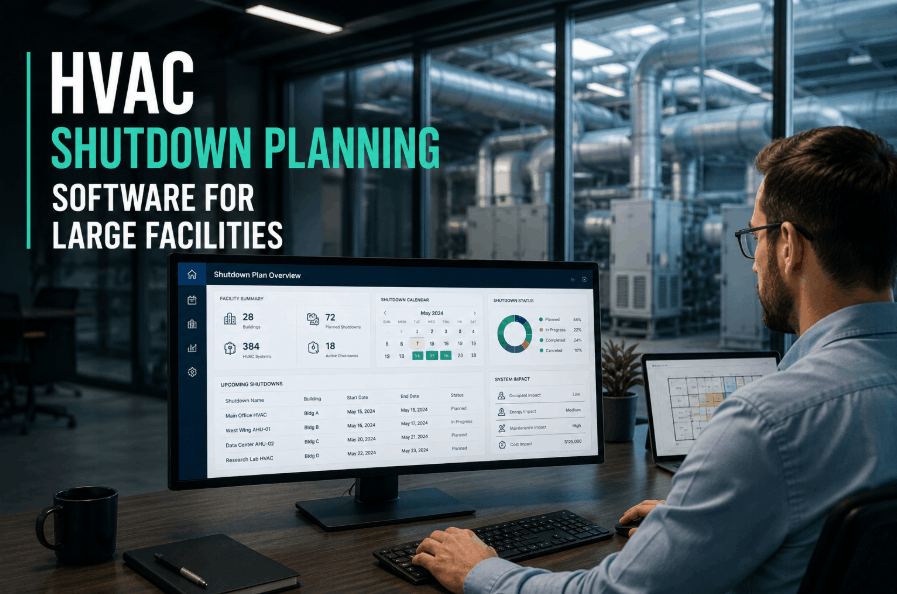

Automate Your PM Schedule

Every PM Interval. Every Unit. Automated and Documented.

Oxmaint generates data center cooling PM work orders on daily, weekly, monthly, and quarterly schedules — tied to individual CRAC, CRAH, and chiller assets. Technicians get mobile task lists. Compliance history builds automatically.

Top Failure Modes

The 6 Cooling Failures That Kill Data Center Uptime

Every critical cooling failure has a maintenance root cause — a PM task skipped, a threshold ignored, a standby unit never tested. These are the six most common pathways from deferred maintenance to data hall incident.

1

Dirty Filters Reducing Cooling Capacity

Clogged filters overwork motors, reduce airflow, and cut cooling capacity by 15–30% before any alarm is triggered. Filters on high-load units can reach replacement threshold in 4–6 weeks during peak summer. Weekly differential pressure checks catch this before efficiency degrades.

2

Refrigerant Leaks — Gradual Capacity Loss

CRAC unit refrigerant leaks rarely cause immediate failure. Instead, they cause slow, invisible capacity degradation over weeks. A unit running 15% low on refrigerant appears operational but cannot handle peak loads. Weekly pressure verification and quarterly leak tests catch this before it becomes a data hall incident.

3

Standby Units That Cannot Deliver on Failover

The most dangerous failure pattern in data center cooling: a backup unit that has never been load-tested. Standby CRACs accumulate maintenance debt silently. Monthly rotation into active service and quarterly capacity verification under load are the only ways to confirm a standby unit will actually perform when needed.

4

Condenser Fouling on Rooftop and Outdoor Units

Condenser coil fouling raises head pressure, increases compressor load, and reduces cooling efficiency by up to 25% before the system trips. Quarterly cleaning is the most direct maintenance action for preserving CRAC unit capacity in high-ambient or dusty environments.

5

Chilled Water Quality Degradation

CRAH systems depend entirely on chilled water quality. Untreated water causes scaling, corrosion, and biological growth in coils and pipes. Weekly water analysis for pH, conductivity, and inhibitor levels is the minimum maintenance cadence — failures from water quality rarely give warning before a coil blocks or a pump seizes.

6

Hot/Cold Aisle Containment Bypass

Gaps in blanking panels, unsealed cable cutouts, and damaged containment curtains allow hot exhaust air to recirculate into cold aisles — raising server inlet temperatures without triggering cooling unit alarms. Regular airflow audits and containment inspections prevent the thermal mixing that creates localized hot spots and unexpected shutdowns.
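Because containment bypass raises inlet temperatures while the cooling units themselves look healthy, one way to surface it is to compare the two signals. The sketch below is illustrative only: the function name, the 1.0°C discharge tolerance, and the rack labels are assumptions, and the 27°C limit is the upper end of the inlet band quoted earlier.

```python
def suspect_containment_bypass(
    inlet_temps_c: dict[str, float],
    discharge_temp_c: float,
    discharge_setpoint_c: float,
    inlet_limit_c: float = 27.0,
    discharge_tolerance_c: float = 1.0,
) -> list[str]:
    """Return racks whose inlet is hot while the cooling unit is on setpoint.

    If the cooling unit itself is off setpoint, the hot inlets are more likely
    a cooling fault than an airflow problem, so nothing is reported here.
    """
    cooling_normal = abs(discharge_temp_c - discharge_setpoint_c) <= discharge_tolerance_c
    if not cooling_normal:
        return []
    return [rack for rack, temp in inlet_temps_c.items() if temp > inlet_limit_c]
```

A non-empty result points a technician at specific racks to check for missing blanking panels or unsealed cutouts.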

Uptime Benchmarks

What Each Uptime Tier Actually Means for Cooling Maintenance

Uptime percentages look small on paper. In practice, the difference between tiers represents a massive gap in maintenance discipline — and in the financial exposure your facility carries.

| Uptime Tier | Annual Downtime Allowed | Cooling PM Cadence | Redundancy Required | CMMS Requirement |
| --- | --- | --- | --- | --- |
| 99.9% (Tier I) | 8.76 hours/year | Monthly minimum | No N+1 required | Basic work order tracking |
| 99.99% (Tier III) | 52.6 minutes/year | Weekly + Monthly | N+1 on all cooling paths | Scheduled PMs + documentation |
| 99.999% (Tier IV) | 5.26 minutes/year | Daily + full schedule | 2N full fault tolerance | Predictive AI + full audit trail |

At 99.999% uptime, you have 5.26 minutes of allowable downtime per year. That is less time than it takes to restart a single CRAC unit after an unexpected trip. Every minute of that budget is protected by maintenance discipline — not redundant hardware alone.
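The downtime figures in the table follow directly from the availability percentage. A minimal sketch of the arithmetic, assuming a 365-day year:

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; leap years ignored


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Annual downtime budget, in minutes, for a given availability target."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


if __name__ == "__main__":
    for target in (99.9, 99.99, 99.999):
        minutes = allowed_downtime_minutes(target)
        print(f"{target}% -> {minutes:.2f} minutes/year ({minutes / 60:.2f} hours)")
```

Each additional nine divides the budget by ten, which is why the step from 99.99% to 99.999% leaves only about five minutes per year.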

Predictive Maintenance AI

How AI-Driven Maintenance Catches Cooling Failures Before They Happen

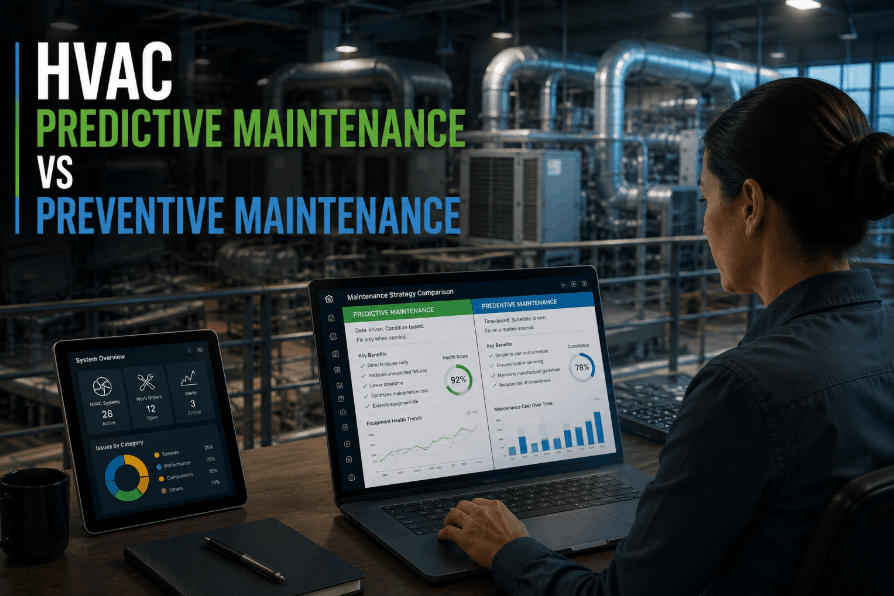

The limitation of calendar-based PM is that it catches failures on a schedule — not when they are actually developing. AI-powered predictive maintenance monitors real-time sensor data to detect degradation patterns weeks before a unit fails, giving maintenance teams time to act without risking the data hall.

Compressor Current Trending

Increasing current draw on a CRAC compressor is a leading indicator of bearing wear or refrigerant shortage. AI detects a 3–5% trend upward weeks before failure. Calendar PM would schedule a check in 90 days — the failure happens in 30.
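A simple way to picture this kind of trend check is to compare a recent average against the unit's own baseline. This is a rough sketch, not Oxmaint's model: the function names are hypothetical, and the 3% default threshold is taken from the lower end of the range mentioned above.

```python
from statistics import mean


def current_rise_percent(baseline_amps, recent_amps) -> float:
    """Percent change of the recent mean current relative to the baseline mean."""
    base = mean(baseline_amps)
    return (mean(recent_amps) - base) / base * 100


def needs_inspection(baseline_amps, recent_amps, threshold_percent: float = 3.0) -> bool:
    """Flag the compressor when its current draw has risen past the threshold."""
    return current_rise_percent(baseline_amps, recent_amps) >= threshold_percent
```

In practice the baseline would be captured per compressor after commissioning or a major service, since healthy current draw differs from unit to unit.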

Discharge Temperature Drift

A CRAC unit's discharge temperature creeping upward by 1–2°C over two weeks signals coil fouling or low refrigerant before any visible symptom appears. Daily readings fed into a trend model flag this automatically, triggering an inspection work order.
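One plausible form of such a trend model is a least-squares line fitted to the daily readings. The sketch below assumes one reading per day at even spacing; the function names are hypothetical and the fit is ordinary linear regression, chosen here only for illustration.

```python
def slope_per_day(daily_temps_c) -> float:
    """Least-squares slope of evenly spaced daily readings, in degrees C per day."""
    n = len(daily_temps_c)
    if n < 2:
        raise ValueError("need at least two readings")
    x_mean = (n - 1) / 2
    y_mean = sum(daily_temps_c) / n
    num = sum((i - x_mean) * (t - y_mean) for i, t in enumerate(daily_temps_c))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def drift_over_window(daily_temps_c) -> float:
    """Fitted temperature change from the first to the last reading."""
    return slope_per_day(daily_temps_c) * (len(daily_temps_c) - 1)
```

A fitted drift of 1°C or more across a two-week window would match the pattern described above and could trigger an inspection work order. Fitting a line rather than subtracting the first reading from the last reduces the effect of a single noisy sample.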

Chilled Water Return Delta

A narrowing delta-T between chilled water supply and return in CRAH systems indicates coil fouling, flow restriction, or a failing control valve. AI monitoring catches a 10% delta-T reduction and generates a coil cleaning work order before efficiency loss triggers a capacity shortfall.
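The delta-T comparison can be sketched as follows, assuming a known healthy baseline delta-T for the unit. The function names are hypothetical, and the 10% default threshold is the figure quoted above.

```python
def delta_t_reduction_percent(baseline_delta_t_c: float,
                              supply_c: float,
                              return_c: float) -> float:
    """Percent by which the current delta-T has narrowed versus the baseline."""
    current_delta_t = return_c - supply_c
    return (baseline_delta_t_c - current_delta_t) / baseline_delta_t_c * 100


def coil_cleaning_suggested(baseline_delta_t_c: float,
                            supply_c: float,
                            return_c: float,
                            threshold_percent: float = 10.0) -> bool:
    """True when delta-T has narrowed by at least the threshold percentage."""
    reduction = delta_t_reduction_percent(baseline_delta_t_c, supply_c, return_c)
    return reduction >= threshold_percent
```

For example, with a 6.0°C baseline, a 7.0°C supply and a 12.0°C return give a 5.0°C delta-T, a reduction of roughly 17%, which would be flagged.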

Fan Motor Vibration Signature

Bearing degradation in fan motors produces a characteristic vibration frequency shift detectable 3–6 weeks before mechanical failure. Vibration monitoring feeds continuously into Oxmaint's AI model, which schedules bearing replacement in the next planned window — not after the motor seizes at 2 AM.

Expert Review

What Data Center Facility Teams Say After Deploying CMMS-Driven Cooling Maintenance

David H.

Critical Facilities Manager, Colocation Data Center — 8MW capacity

★★★★★

We had three significant cooling incidents in 18 months — all from deferred maintenance that had no documentation trail. After deploying Oxmaint, every CRAC and CRAH unit has a complete PM history accessible on mobile. Our last audit took 4 hours instead of 4 days. We haven't had a cooling-related incident since deployment, and our SLA renewal rates improved significantly because customers can now see the documented maintenance record.

Ananya N.

Data Center Operations Director, Enterprise Facility — 3 sites

★★★★★

The standby unit rotation feature alone justified the platform cost. We discovered that two of our six standby CRAC units had maintenance deficits that would have prevented them from delivering rated capacity on failover. We would never have known without systematic monthly rotation and capacity logging. Oxmaint made that invisible risk visible before it became a 2 AM incident.

Five-Nines Maintenance

Your Cooling Maintenance Program Should Be as Reliable as Your Uptime SLA.

Oxmaint gives data center operators CRAC and CRAH asset tracking, redundancy-aware PM scheduling, AI-driven capacity trend monitoring, and audit-ready compliance documentation. Deploy on your critical cooling assets this week.

FAQs

Data Center Cooling Maintenance — Questions Facility Teams Ask

How often should CRAC units be serviced in a Tier III or Tier IV data center?

Tier III and Tier IV facilities should follow at minimum a daily (BMS alarm review, discharge temp verification), weekly (filter pressure, refrigerant pressure), monthly (compressor current trend, fan vibration, standby unit rotation), and quarterly (full refrigerant leak test, coil cleaning, control calibration, redundancy failover test) cadence. Calendar-based PM should be supplemented with condition-based triggers via CMMS so that anomalies detected between scheduled intervals generate immediate work orders.

Start building your PM schedule in Oxmaint — free trial.

What is the biggest maintenance mistake data center operators make with standby cooling units?

The most common and dangerous mistake is assuming a standby unit is ready simply because it has power and no active alarms. Standby units accumulate maintenance debt silently — refrigerant degrades, coils foul, bearings wear — all without triggering any alert because the unit never runs under load. Monthly rotation into active service with load testing, and quarterly capacity verification, are the only reliable ways to confirm a standby unit will actually deliver when the primary fails.

Book a demo to see how Oxmaint schedules redundancy testing automatically.

Does a CMMS help with EPA Section 608 refrigerant compliance documentation?

Yes — and for data centers running large CRAC fleets, this is one of the most immediate operational benefits. EPA Section 608 requires documented leak inspections at defined intervals for systems above threshold refrigerant charge. A CMMS like Oxmaint auto-schedules these inspections, captures technician readings and test results directly to the work order, and generates on-demand compliance reports that are audit-ready within minutes. Manual spreadsheet tracking of refrigerant compliance across a multi-unit fleet is a major audit liability.

See how compliance documentation works in Oxmaint.

How does hot/cold aisle containment maintenance prevent outages?

Aisle containment integrity directly affects server inlet temperatures. Gaps from missing blanking panels, unsealed cable penetrations, or damaged containment curtains allow hot exhaust to recirculate into cold aisles — raising server inlet temperatures without triggering cooling unit alarms, since the cooling units themselves are still operating normally. A quarterly airflow and containment audit with findings logged in CMMS creates a systematic record of containment health. Oxmaint allows technicians to log containment inspection results directly to each rack asset's record during rounds.

Start your free trial and log your first containment inspection today.

What sensor data should feed into a data center cooling predictive maintenance program?

Minimum viable sensor inputs for predictive cooling maintenance are: CRAC compressor current draw (trending), CRAC and CRAH discharge temperature (continuous), chilled water supply and return delta-T, fan motor vibration (accelerometers on large units), filter differential pressure, and server inlet temperature per row. These data streams feed into Oxmaint's predictive model, which flags trending deviations from each unit's individual healthy baseline — not generic industry thresholds. Most data centers already have BMS infrastructure generating these readings. Oxmaint connects to SCADA and BMS via standard protocols without additional hardware.
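To make the idea of a per-unit baseline concrete, here is a minimal sketch that scores a new reading against that unit's own history using a z-score. This is an assumption about one possible approach, not a description of Oxmaint's predictive model; the function name and the limit of 3 standard deviations are illustrative.

```python
from statistics import mean, stdev


def deviates_from_baseline(baseline, value: float, z_limit: float = 3.0) -> bool:
    """Flag a reading that sits far outside this unit's own healthy history.

    baseline: at least two readings recorded while the unit was known healthy.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat history: any different value counts as a deviation.
        return value != mu
    return abs(value - mu) / sigma > z_limit
```

The same function can be applied to any of the streams listed above (current draw, discharge temperature, delta-T, vibration, filter pressure), with a separate baseline kept for each unit and each signal.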

Book a demo to review sensor integration for your cooling infrastructure.

Data Center HVAC · Predictive Maintenance · Free to Start

Five-Nines Uptime Starts With Five-Star Maintenance Discipline.

Oxmaint gives your data center cooling team asset-level tracking for every CRAC, CRAH, chiller, and CDU — with AI-driven failure detection, redundancy-aware PM scheduling, and instant audit-ready documentation that enterprise customers require before signing contracts.