When a cement kiln support roller bearing collapses without warning, the recovery window is not hours — it is 5 to 7 days of shutdown, emergency procurement, and lost production that no maintenance budget has room for. Cloud-connected AI condition monitoring can predict these failures, but at most cement plants the network infrastructure, data sovereignty requirements, and process control system architecture make sending vibration data to a cloud server impractical or impossible. NVIDIA Jetson-powered edge AI changes the equation: AI inference runs on-premise, on the plant floor, processing vibration signatures, thermal readings, and motor current data in real time with sub-30 millisecond latency — no cloud connection required. When the on-premise model detects a bearing fault signature, it pushes a work order directly into your CMMS. Start a free trial with Oxmaint CMMS and build the CMMS layer that receives and tracks your edge AI maintenance predictions, or book a 30-minute session with our cement reliability engineers to design your edge AI to CMMS integration architecture.

Why Edge AI for Cement

Cloud AI vs. On-Premise Edge AI: The Cement Plant Decision

Most cement plants operate with industrial control networks (OT networks) that are deliberately isolated from the internet for cybersecurity and process stability reasons. Sending high-frequency vibration data from a kiln support roller bearing to a cloud AI server requires bridging that isolation — creating both a security exposure and a reliability dependency on internet connectivity. Edge AI inference on NVIDIA Jetson hardware eliminates both risks by running the failure prediction model on the plant floor, next to the equipment it monitors.

Cloud-Based AI Condition Monitoring

Network requirement

Continuous internet or WAN connectivity required for sensor data upload

Inference latency

100–500ms typical; unreliable during network congestion

Data sovereignty

Sensor data and operational parameters leave plant control network

OT/IT integration

Requires firewall rule changes and OT network exposure — typically blocked by plant IT policy

Model training

Centralized training on multi-plant data — broader failure pattern library

Hardware cost

Lower upfront hardware — subscription-based pricing model

NVIDIA Jetson Edge AI (On-Premise)

Network requirement

No internet required — model runs on local hardware, sends work orders to on-premise CMMS

Inference latency

Sub-30ms with TensorRT optimization — real-time fault detection without cloud round-trip

Data sovereignty

All sensor data stays within OT network — no data leaves the plant perimeter

OT/IT integration

Edge device connects locally to sensor inputs and CMMS — no firewall exposure required

Model training

Initial model trained on industry failure libraries; improves on plant-specific data over time

Hardware cost

Upfront hardware investment per monitoring node; no ongoing data transmission costs

Sub-30ms

Jetson TensorRT inference latency vs 100–500ms cloud round-trip

5–7 days

Typical kiln support roller failure recovery if undetected before bearing collapse

Up to 60 days

Advance warning achievable by AI from micro-fault signatures before catastrophic bearing failure

30–40%

Gearbox overhaul interval extension from AI-driven oil change scheduling vs fixed calendar

Target Equipment

Where Jetson Edge AI Delivers the Highest ROI in a Cement Plant

Not every piece of equipment in a cement plant warrants an AI-powered edge monitoring node. The correct deployment targets are assets where a single unexpected failure generates downtime costs that justify the hardware and integration investment — typically assets that are expensive to repair, long to source parts for, or process-critical enough that their failure stops the entire production line.

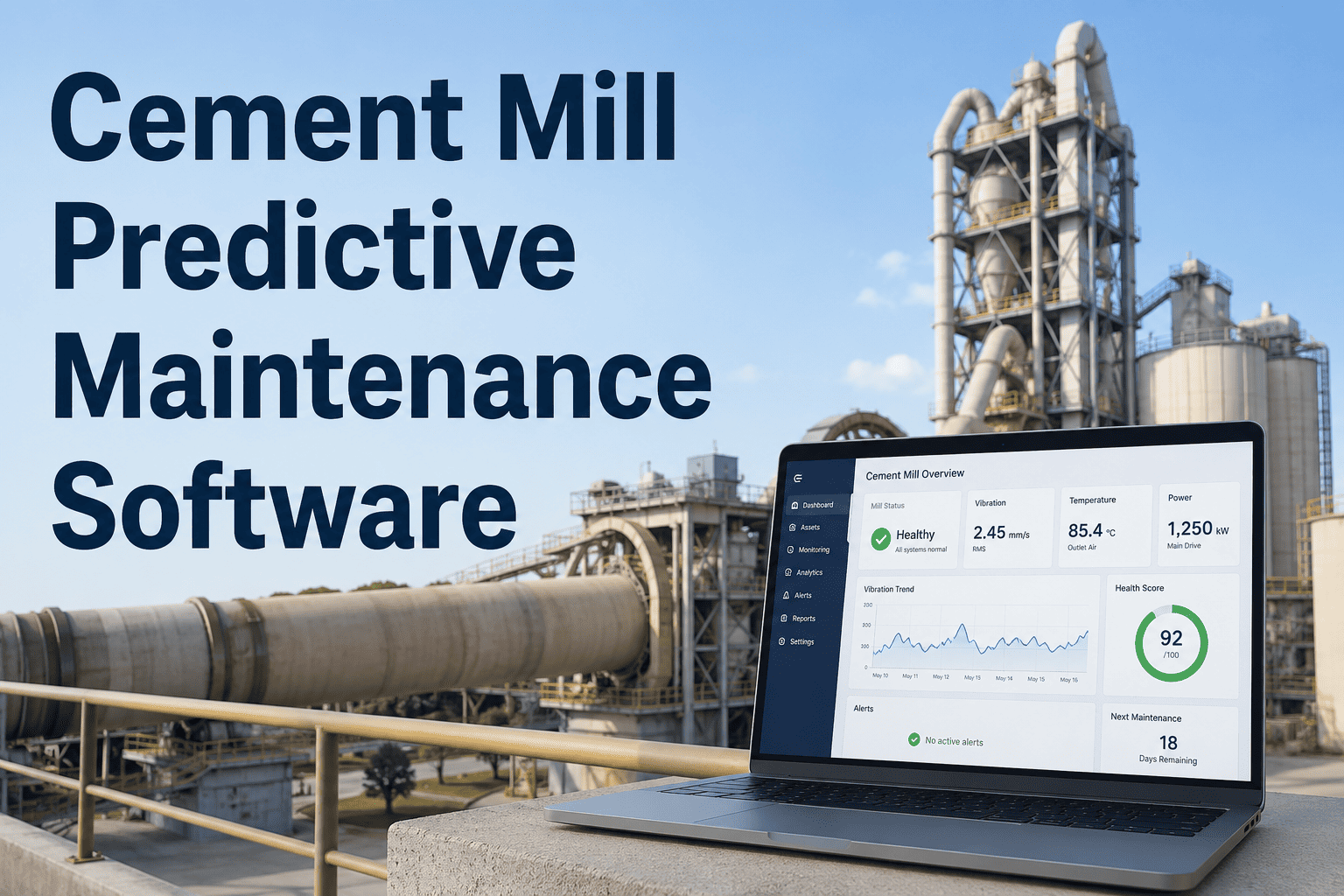

Kiln Support Rollers and Bearings

Critical

Failure consequence: 5–7 day kiln shutdown; refractory damage possible if kiln runs misaligned

AI detection method: Tri-axial vibration FFT analysis; inner race and roller defect frequencies detected 30–60 days before audible noise

Sensor inputs to Jetson: Tri-axial vibration, bearing temperature (RTD/thermocouple), kiln shell temperature array

CMMS work order triggered: Vibration route inspection → oil sampling work order → planned bearing replacement at next outage window
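The inner race and roller defect frequencies mentioned above follow from bearing geometry and shaft speed. The sketch below uses the standard kinematic formulas for rolling-element bearings; the function name and the example geometry (20 rollers, 40 mm roller diameter, 400 mm pitch diameter, 0.2 Hz shaft speed) are illustrative assumptions, not values from a specific kiln.

```python
import math

def bearing_defect_frequencies(shaft_hz, n_rollers, roller_dia, pitch_dia,
                               contact_angle_deg=0.0):
    """Kinematic defect frequencies (Hz) for a rolling-element bearing.

    roller_dia and pitch_dia must share the same unit (for example mm).
    """
    ratio = (roller_dia / pitch_dia) * math.cos(math.radians(contact_angle_deg))
    return {
        # Ball/roller pass frequency, outer race
        "BPFO": (n_rollers / 2) * shaft_hz * (1 - ratio),
        # Ball/roller pass frequency, inner race
        "BPFI": (n_rollers / 2) * shaft_hz * (1 + ratio),
        # Ball/roller spin frequency
        "BSF": (pitch_dia / (2 * roller_dia)) * shaft_hz * (1 - ratio ** 2),
        # Fundamental train (cage) frequency
        "FTF": (shaft_hz / 2) * (1 - ratio),
    }

# Hypothetical slow-turning support roller bearing
freqs = bearing_defect_frequencies(shaft_hz=0.2, n_rollers=20,
                                   roller_dia=40.0, pitch_dia=400.0)
for name, hz in freqs.items():
    print(f"{name}: {hz:.3f} Hz")
```

A model watching the vibration spectrum would look for energy growing at these frequencies and their harmonics; real bearings slip slightly, so measured peaks usually sit a few percent away from the calculated values.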

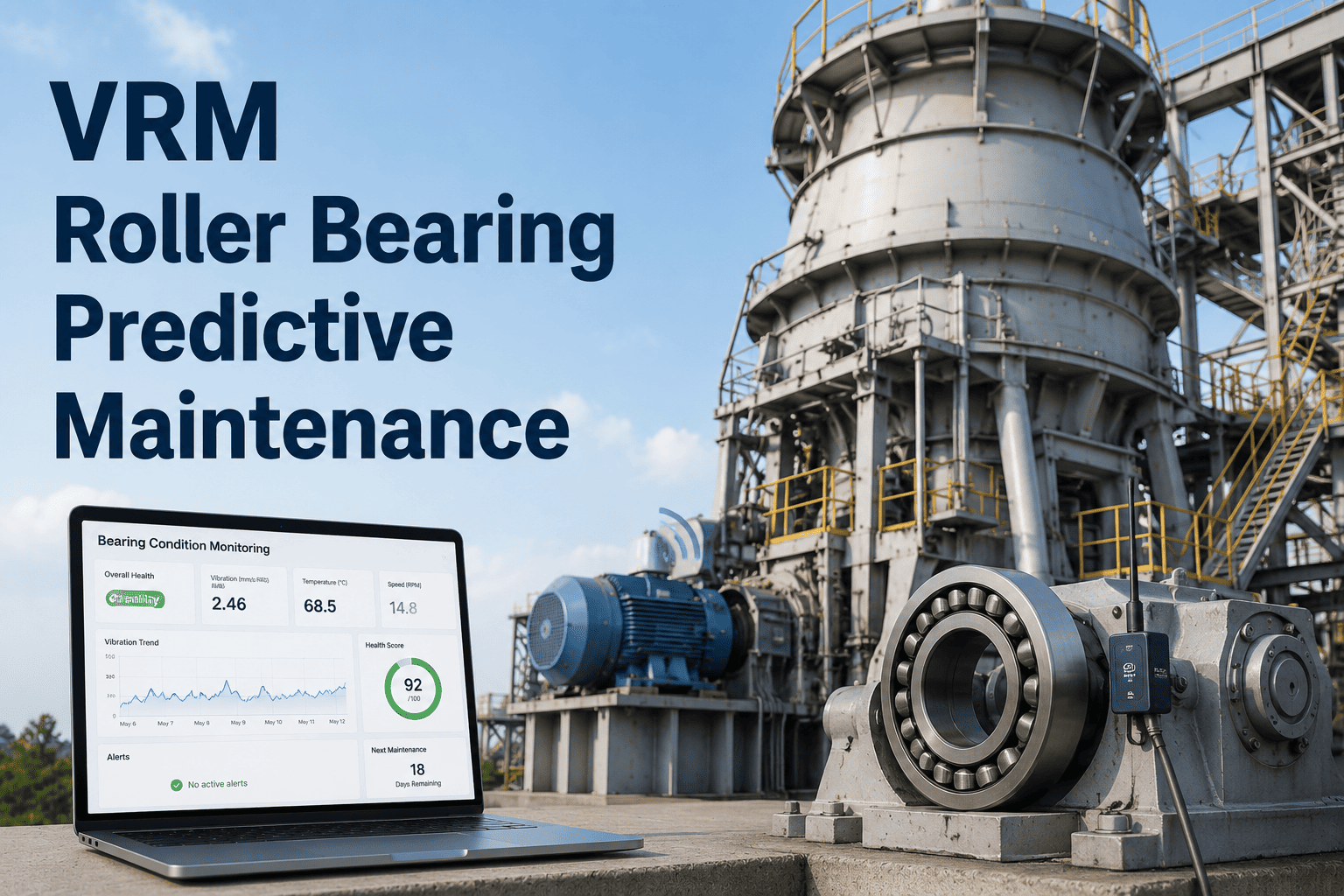

VRM and Ball Mill Drive Gearboxes

Critical

Failure consequence: 72-hour mill shutdown typical; gearbox rebuild cost $200K–$500K depending on size

AI detection method: Gear mesh frequency analysis and envelope detection for tooth wear; in-line oil particle count trending for wear debris accumulation

Sensor inputs to Jetson: Vibration (horizontal/vertical/axial), oil temperature, in-line particle counter, motor current draw

CMMS work order triggered: Oil sample analysis request → tooth inspection at next scheduled stop → planned gearbox rebuild scheduling
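Gear mesh frequency analysis starts from a simple relationship: mesh frequency equals shaft rotation frequency times tooth count, and tooth wear tends to show up as sidebands spaced at the shaft frequency around it. A minimal sketch, assuming a hypothetical pinion with 25 teeth turning at 990 rpm:

```python
def gear_mesh_frequency(shaft_rpm, tooth_count):
    """Gear mesh frequency in Hz for a gear on a shaft turning at shaft_rpm."""
    return shaft_rpm / 60.0 * tooth_count

def sideband_frequencies(gmf_hz, shaft_rpm, orders=2):
    """Sidebands at GMF +/- k * shaft frequency, for k = 1..orders."""
    shaft_hz = shaft_rpm / 60.0
    return [gmf_hz + k * shaft_hz
            for k in range(-orders, orders + 1) if k != 0]

# Hypothetical pinion: 25 teeth at 990 rpm
gmf = gear_mesh_frequency(990, 25)
print(gmf)                                  # 412.5
print(sideband_frequencies(gmf, 990))       # [379.5, 396.0, 429.0, 445.5]
```

Trending the amplitude at these frequencies over weeks, rather than checking a single snapshot, is what lets the rebuild be scheduled at a planned stop.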

ID Fans and Kiln Exhaust Fans

High Priority

Failure consequence: Kiln draft loss forces production reduction or shutdown; bearing replacement typically 8–16 hours

AI detection method: Imbalance and misalignment detection from vibration signatures; blade erosion inferred from power draw vs airflow ratio degradation

Sensor inputs to Jetson: Drive-end/non-drive-end bearing vibration, motor current, differential pressure across fan, bearing temperature

CMMS work order triggered: Vibration trending inspection → planned bearing replacement → blade erosion check at next outage

Conveyor Belts and Bucket Elevators

High Priority

Failure consequence: Belt tear or bucket elevator failure stops material flow to kiln within 30–60 minutes; belt splicing repair 4–8 hours minimum

AI detection method: Belt slip detection from speed differential sensors; idler bearing vibration monitoring for pre-failure seizure signatures; belt thickness via ultrasonic

Sensor inputs to Jetson: Head/tail drum speed, idler vibration (periodic sample), motor current, belt thickness sensor output

CMMS work order triggered: Idler replacement route → belt thickness inspection → belt tension check work order
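Belt slip detection from head and tail drum speed comes down to comparing surface speeds. The sketch below is one way to express it; the drum diameters, speeds, and the 3% alarm threshold are illustrative assumptions rather than recommended settings.

```python
import math

def surface_speed(drum_diameter_m, drum_rpm):
    """Surface speed of a drum in m/s."""
    return math.pi * drum_diameter_m * drum_rpm / 60.0

def belt_slip_percent(drive_speed, driven_speed):
    """Slip as a percentage of the drive (head) drum surface speed."""
    if drive_speed <= 0:
        raise ValueError("drive speed must be positive")
    return 100.0 * (drive_speed - driven_speed) / drive_speed

# Hypothetical conveyor: both drums 0.8 m diameter
head = surface_speed(0.8, 60)   # driven by the motor
tail = surface_speed(0.8, 57)   # follows the belt
slip = belt_slip_percent(head, tail)

SLIP_ALARM_PERCENT = 3.0        # assumed threshold for illustration
print(f"slip = {slip:.1f}%, alarm = {slip > SLIP_ALARM_PERCENT}")
```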

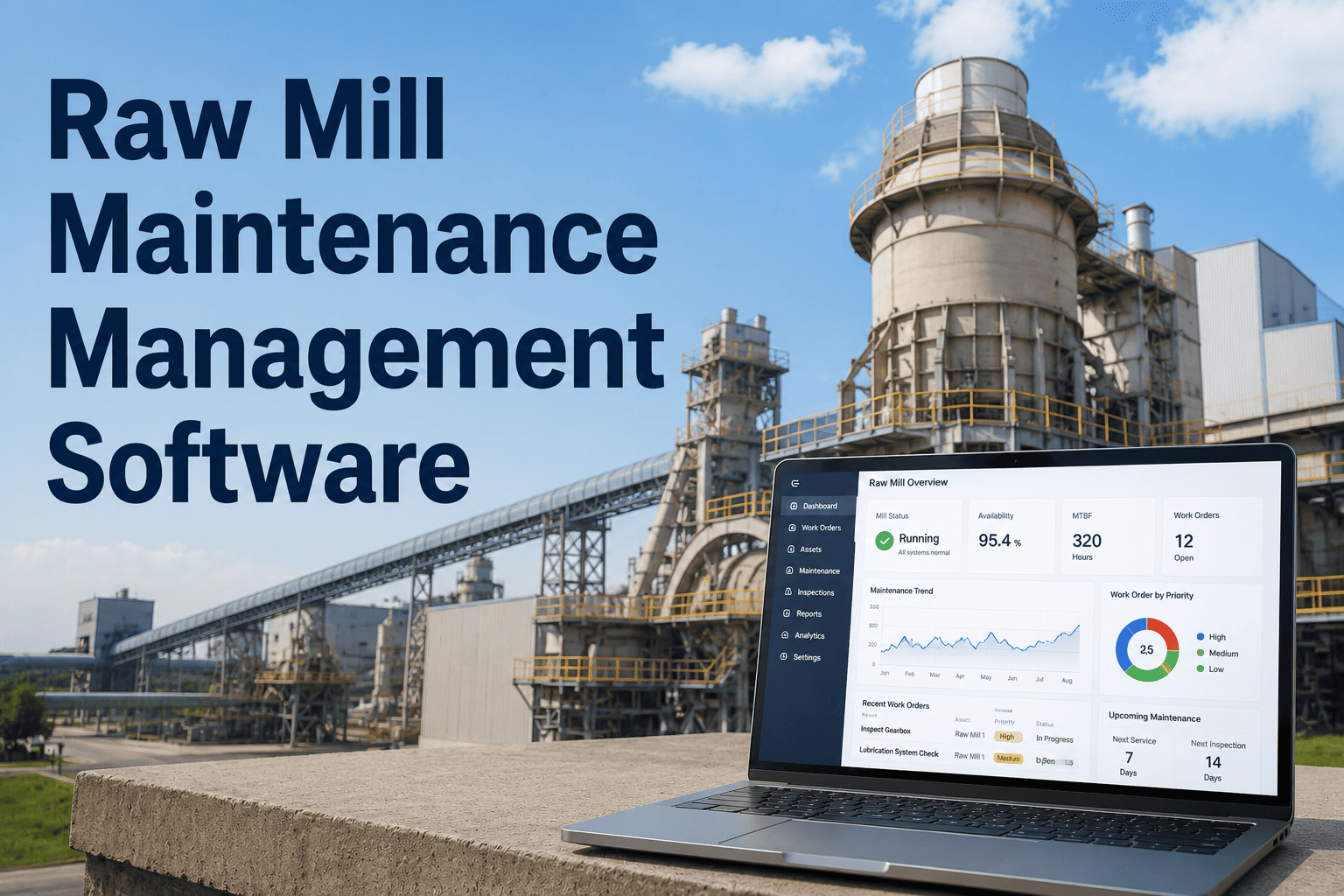

CMMS Ready for Edge AI

Your Edge AI Predictions Need a CMMS to Act On Them

Oxmaint CMMS receives work order triggers from edge AI systems, tracks prediction-to-resolution outcomes, and builds the failure pattern history that makes your on-premise AI models more accurate over time.

Architecture Deep Dive

How NVIDIA Jetson Edge AI Integrates with Cement Plant CMMS: The Architecture

An edge AI early-warning system is not a standalone product — it is an architecture that connects physical sensors, edge inference hardware, and CMMS into a closed-loop maintenance system. The three-layer architecture below shows how a Jetson-powered edge node takes raw sensor data at the equipment level and delivers an actionable, documented maintenance work order in CMMS — without any cloud dependency.

Layer 1: Sensor Acquisition

Tri-axial vibration accelerometer (ICP/IEPE)

RTD bearing temperature

Motor current transducer

In-line oil particle counter

Acoustic emission sensor

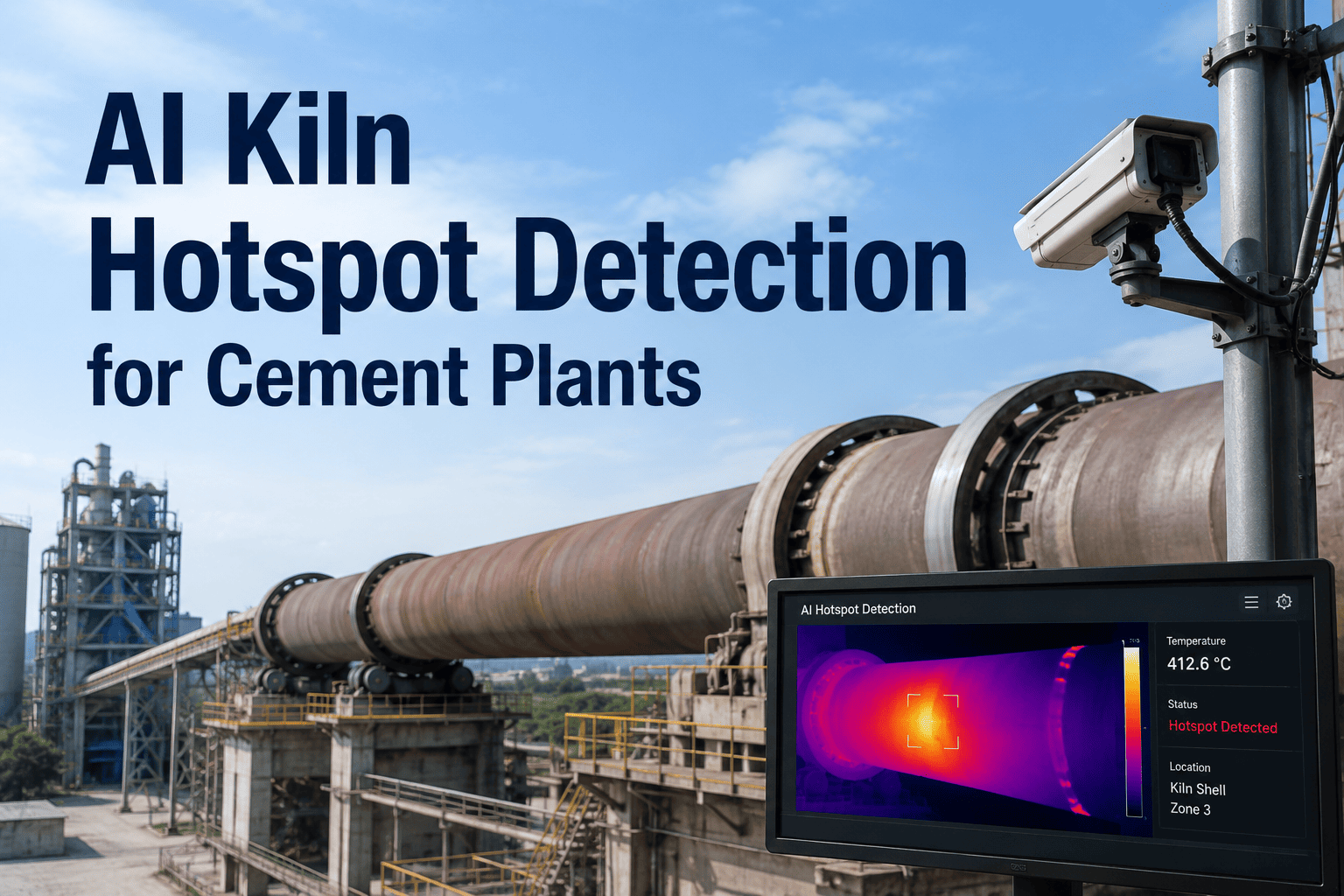

Infrared thermal camera

Sensor data over industrial fieldbus or hardwired analog I/O

Layer 2: NVIDIA Jetson Edge Inference Node

Signal Processing

FFT decomposition for fault frequencies

Envelope analysis for bearing defects

Thermal image processing via TensorRT

Time-series anomaly detection
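To make the FFT step concrete, the sketch below estimates the vibration amplitude at one chosen fault frequency using a single-bin discrete Fourier transform. It uses only the Python standard library so it runs anywhere; a production node would more likely use an optimized FFT library, and the synthetic 50 Hz and 120 Hz test signal is an assumption for illustration.

```python
import cmath
import math

def amplitude_at(samples, sample_rate_hz, freq_hz):
    """Estimate the sinusoidal amplitude at freq_hz via a single-bin DFT."""
    n = len(samples)
    acc = sum(x * cmath.exp(-2j * math.pi * freq_hz * i / sample_rate_hz)
              for i, x in enumerate(samples))
    return 2.0 * abs(acc) / n

# Synthetic signal: 1.0 amplitude at 50 Hz plus 0.3 amplitude at 120 Hz,
# sampled for exactly one second at 1 kHz
fs = 1000
signal = [math.sin(2 * math.pi * 50 * i / fs)
          + 0.3 * math.sin(2 * math.pi * 120 * i / fs)
          for i in range(fs)]

for f in (50, 120, 80):
    print(f, round(amplitude_at(signal, fs, f), 3))
```

The estimate is exact only when the record contains a whole number of cycles of the target frequency; real acquisition code would typically apply a window function to limit leakage.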

AI Model Outputs

Fault type classification with confidence score

Remaining useful life (RUL) estimate

Recommended maintenance action

Urgency level (planned / urgent / immediate)

Structured JSON work order trigger over local OT network — no internet required
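A structured trigger of this kind might look like the following sketch. The field names and the example asset ID are hypothetical; the actual schema depends on what the receiving CMMS expects.

```python
import json
from dataclasses import dataclass, asdict

URGENCY_LEVELS = ("planned", "urgent", "immediate")

@dataclass
class WorkOrderTrigger:
    asset_id: str
    fault_type: str
    confidence: float        # 0.0 to 1.0
    rul_days: float          # remaining useful life estimate
    recommended_action: str
    urgency: str             # one of URGENCY_LEVELS

    def to_json(self):
        """Validate the trigger and serialize it to a JSON string."""
        if self.urgency not in URGENCY_LEVELS:
            raise ValueError(f"unknown urgency: {self.urgency}")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")
        return json.dumps(asdict(self), sort_keys=True)

# Hypothetical trigger for a kiln support roller bearing
trigger = WorkOrderTrigger(
    asset_id="KILN1-ROLLER-3",
    fault_type="bearing_inner_race",
    confidence=0.87,
    rul_days=42,
    recommended_action="vibration route inspection",
    urgency="planned",
)
print(trigger.to_json())
```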

Layer 3: CMMS — Oxmaint

Auto-generated work order with asset ID, fault type, and RUL estimate

Technician assignment and parts pre-staging from storeroom inventory

Completion record with actual vs predicted failure condition

Historical accuracy tracking — prediction confirmed or false positive logged

Deployment Guide

Deploying Jetson Edge AI at a Cement Plant: What the Program Looks Like in Practice

The gap between a Jetson developer kit and a production-grade cement plant monitoring program is real. Industrial environments — 60°C ambient temperatures, fine cement dust, vibration, and electromagnetic interference from large motors — demand hardware rated for the conditions, validated sensor mounting, and integration testing with the plant's existing control system. Here is the deployment program in practical terms.

Phase 1

Asset Criticality Assessment and Sensor Point Selection

Map the 10–15 highest-consequence failure modes at your plant. Rank by downtime cost, lead time for critical parts, and current inspection frequency. Select sensor deployment points for the top 5–8 assets. Document existing sensor coverage — some assets may already have vibration transmitters that can feed a Jetson node without new hardware.

Typical duration: 2–3 weeks
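One simple way to turn the three ranking criteria into an ordered list is a normalized weighted score. Everything in this sketch is an assumption for illustration: the weights, the asset figures, and the choice to treat a longer inspection interval as higher risk.

```python
CRITERIA = ("downtime_cost", "lead_time_weeks", "inspection_interval_days")

def rank_assets(assets, weights=(0.5, 0.3, 0.2)):
    """Sort assets by a weighted sum of criteria, each scaled to its maximum."""
    maxima = {k: max(a[k] for a in assets) for k in CRITERIA}

    def score(asset):
        return sum(w * asset[k] / maxima[k] for w, k in zip(weights, CRITERIA))

    return sorted(assets, key=score, reverse=True)

# Hypothetical plant data, not measured values
assets = [
    {"name": "kiln_support_roller", "downtime_cost": 1_200_000,
     "lead_time_weeks": 16, "inspection_interval_days": 30},
    {"name": "mill_gearbox", "downtime_cost": 720_000,
     "lead_time_weeks": 26, "inspection_interval_days": 90},
    {"name": "id_fan", "downtime_cost": 160_000,
     "lead_time_weeks": 4, "inspection_interval_days": 30},
    {"name": "belt_conveyor", "downtime_cost": 80_000,
     "lead_time_weeks": 2, "inspection_interval_days": 7},
]

for asset in rank_assets(assets):
    print(asset["name"])
```

With these made-up numbers the gearbox outranks the support roller because of its longer parts lead time and sparser inspections, which shows why the weights deserve a deliberate discussion with the reliability team.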

Phase 2

Edge Hardware Selection and Industrial Hardening

Select the Jetson platform based on inference workload — Jetson Orin NX for multi-channel vibration and thermal analysis; Jetson AGX Orin for sites with visual inspection requirements via camera feeds. Mount in IP-rated industrial enclosures with positive pressure purging for dust protection. Specify ATEX or IECEx rating if monitoring equipment in explosive dust zones.

Typical duration: 4–6 weeks including procurement

Phase 3

Baseline Period and Model Calibration

Run all sensors for 60–90 days before enabling AI alert generation. During this period, the edge model establishes the equipment's unique "healthy" vibration and thermal signature — the baseline that all subsequent anomaly detection is measured against. Collecting baseline data during a known-good equipment state is critical; alerts generated before this period are unreliable.

Typical duration: 60–90 days
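The idea of measuring anomalies against a healthy baseline can be illustrated with a plain z-score. This is a deliberately simple stand-in, not the model an edge AI product would necessarily use; the readings and the 3-sigma threshold are assumptions.

```python
import statistics

class Baseline:
    """Mean and spread of a feature recorded while equipment is known-good."""

    def __init__(self, healthy_readings):
        if len(healthy_readings) < 2:
            raise ValueError("need at least two baseline readings")
        self.mean = statistics.mean(healthy_readings)
        self.stdev = statistics.stdev(healthy_readings)
        if self.stdev == 0:
            raise ValueError("baseline has no variation")

    def z_score(self, reading):
        return (reading - self.mean) / self.stdev

    def is_anomalous(self, reading, threshold=3.0):
        return abs(self.z_score(reading)) > threshold

# Hypothetical RMS vibration velocity readings (mm/s) from the baseline period
baseline = Baseline([2.0, 2.2, 1.8, 2.1, 1.9])
print(baseline.is_anomalous(2.1))   # within normal scatter
print(baseline.is_anomalous(3.0))   # well outside the baseline
```

The sketch also shows why a short or contaminated baseline is a problem: if the healthy readings already include a developing fault, the mean and spread absorb it and later deviations look normal.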

Phase 4

CMMS Integration and Work Order Routing

Configure the Jetson node's output to push structured work order triggers to Oxmaint CMMS via API or local network message broker. Map each fault type to the correct CMMS work order template — bearing fault → vibration inspection route; oil contamination → oil sample analysis; thermal anomaly → thermographic inspection. Configure escalation logic so RUL estimates below threshold auto-upgrade urgency level.

Typical duration: 2–3 weeks
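The fault-to-template mapping and the RUL escalation rule can be sketched as a lookup table plus a threshold check. The template identifiers and the 30-day and 7-day thresholds below are hypothetical placeholders to be replaced with plant-specific values.

```python
# Mapping taken from the routing described above; identifiers are hypothetical
TEMPLATE_BY_FAULT = {
    "bearing_fault": "vibration_inspection_route",
    "oil_contamination": "oil_sample_analysis",
    "thermal_anomaly": "thermographic_inspection",
}

URGENCY_ORDER = ["planned", "urgent", "immediate"]

def route_trigger(fault_type, urgency, rul_days,
                  urgent_below_days=30, immediate_below_days=7):
    """Return (template, urgency), upgrading urgency when RUL is short."""
    template = TEMPLATE_BY_FAULT[fault_type]   # KeyError for unmapped faults
    level = URGENCY_ORDER.index(urgency)
    if rul_days < immediate_below_days:
        level = max(level, URGENCY_ORDER.index("immediate"))
    elif rul_days < urgent_below_days:
        level = max(level, URGENCY_ORDER.index("urgent"))
    return template, URGENCY_ORDER[level]

print(route_trigger("bearing_fault", "planned", rul_days=45))
print(route_trigger("oil_contamination", "planned", rul_days=20))
print(route_trigger("thermal_anomaly", "urgent", rul_days=3))
```

Using `max` means the rule only ever upgrades urgency; a trigger the model already marked immediate is never downgraded by a long RUL estimate.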

Phase 5

Model Improvement Loop — Using CMMS Outcomes to Improve Predictions

After each predicted failure is investigated and the work order closed, CMMS captures the actual equipment condition found — whether the AI prediction was confirmed, overstated, or missed. This outcome data feeds back into the edge AI model training cycle, progressively improving prediction accuracy for your specific plant's equipment and operating conditions. This is the compounding value of the CMMS-edge AI integration.

Ongoing — model accuracy improves over 12–18 months
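Tracking outcomes in this way makes it possible to put numbers on prediction quality. The sketch below assumes each closed work order is tagged with one of three outcome labels (confirmed, overstated, missed) and, as a simplifying assumption, counts overstated predictions against precision.

```python
from collections import Counter

def prediction_metrics(outcomes):
    """Summarize closed work order outcomes as precision and recall."""
    counts = Counter(outcomes)
    confirmed = counts["confirmed"]
    flagged = confirmed + counts["overstated"]   # alerts the model raised
    actual = confirmed + counts["missed"]        # faults that really occurred
    return {
        "precision": confirmed / flagged if flagged else None,
        "recall": confirmed / actual if actual else None,
        "counts": dict(counts),
    }

# Hypothetical first-year outcome log
log = ["confirmed"] * 8 + ["overstated"] * 2 + ["missed"] * 2
print(prediction_metrics(log))
```

Watching these two figures per asset class over the 12–18 month improvement period gives a concrete check on whether retraining is actually helping.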

Frequently Asked Questions

NVIDIA Jetson Edge AI and CMMS Integration: Common Questions

Which NVIDIA Jetson platform is right for cement plant vibration monitoring?

For vibration-only monitoring of 4–8 channels with bearing fault FFT analysis, the Jetson Orin NX (16GB) provides sufficient inference performance at moderate power consumption. For plants adding thermal camera feeds or visual inspection AI models alongside vibration, the Jetson AGX Orin provides the headroom for multi-modal inference. Both run TensorRT-optimized models for sub-30ms inference latency. In cement plant environments, the industrial enclosure and dust protection specification matters more than the choice of Jetson module.

See how Oxmaint CMMS integrates with edge AI work order outputs.

How does edge AI connect to CMMS without internet access?

The Jetson node connects to Oxmaint CMMS over the plant's local OT or IT network using a REST API call or MQTT message broker — both methods work on isolated local networks with no internet routing required. The Jetson generates a structured JSON payload containing asset ID, fault classification, confidence score, and recommended action, which Oxmaint receives and converts into a formatted work order. No cloud server is involved in the data path.
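As a rough illustration of the REST path, the sketch below builds an HTTP POST carrying the JSON payload using only the Python standard library. The URL, endpoint path, and field names are hypothetical; the real endpoint and authentication scheme would come from the CMMS API documentation.

```python
import json
import urllib.request

# Hypothetical address on the local plant network, not a documented endpoint
CMMS_URL = "http://cmms.plant.local/api/work-orders"

def build_request(payload, url=CMMS_URL):
    """Wrap a work order payload in a JSON POST request."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send(request, timeout_s=5.0):
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return response.status

payload = {
    "asset_id": "KILN1-ROLLER-3",          # hypothetical asset tag
    "fault_classification": "bearing_inner_race",
    "confidence": 0.87,
    "recommended_action": "vibration route inspection",
}
request = build_request(payload)
print(request.get_method(), request.full_url)
# send(request) would transmit it; omitted here because the host is fictional
```

A production node would also queue triggers locally and retry, so a brief CMMS outage does not drop a prediction.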

Book a session to design the CMMS integration architecture for your plant.

How long does it take before edge AI predictions become reliable?

The first 60–90 days after deployment are a baseline collection period — alerts generated during this time have lower reliability because the model has not yet characterized the equipment's normal operating signature. Reliable fault detection typically activates around day 90, and prediction accuracy for your specific equipment continues improving for 12–18 months as the CMMS outcome feedback loop provides confirmed or disconfirmed fault predictions to retrain the model.

Can existing vibration sensors feed a Jetson edge node, or do we need new sensors?

Existing sensors can feed a Jetson node if they output standard industrial signals: 4–20 mA, ICP/IEPE (constant current excitation), or Modbus RTU/TCP. Jetson nodes with appropriate industrial I/O modules can acquire from these sources directly. Where existing sensors output proprietary protocols (some older OEM-installed monitoring systems use closed protocols), new tri-axial IEPE accelerometers are typically the practical solution and provide significantly better frequency resolution for AI fault detection.
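For 4–20 mA transmitters, the acquisition step is a linear scaling from loop current to engineering units. A minimal sketch, assuming a hypothetical vibration transmitter ranged 0–25 mm/s and a 0.5 mA tolerance band for flagging loop faults:

```python
def scale_4_20ma(current_ma, range_min, range_max, fault_band_ma=0.5):
    """Convert a 4-20 mA loop current to engineering units.

    Raises ValueError when the current is far enough outside 4-20 mA to
    suggest an open loop, short circuit, or failed transmitter.
    """
    if current_ma < 4.0 - fault_band_ma or current_ma > 20.0 + fault_band_ma:
        raise ValueError(f"loop current {current_ma} mA out of range")
    fraction = (current_ma - 4.0) / 16.0
    return range_min + fraction * (range_max - range_min)

# Hypothetical transmitter ranged 0 to 25 mm/s
print(scale_4_20ma(12.0, 0.0, 25.0))   # mid-scale reading
```

Rejecting out-of-range currents before they reach the model matters because a broken loop reading near 0 mA would otherwise look like a perfectly quiet machine.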

Oxmaint CMMS tracks all sensor assets and calibration records in the maintenance hierarchy.

What is a realistic ROI timeline for an edge AI and CMMS program in a cement plant?

A single prevented kiln support roller bearing failure, avoiding a 5-day shutdown at a 4,000 TPD plant with cement valued at $60/tonne, represents approximately $1.2M in avoided production loss (5 days × 4,000 tonnes/day × $60/tonne). A complete Jetson monitoring program for a kiln line (8–12 monitoring nodes, integration, and baseline period) typically costs $150K–$250K deployed, so the first significant failure prevented covers the full program cost roughly five to eight times over. More conservatively, maintenance labor savings from eliminating unnecessary calendar-based inspections typically generate 20–30% ROI within the first year.
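The arithmetic behind these figures is easy to rerun with your own plant numbers. The sketch below uses only the values quoted in the answer above; the function names are illustrative.

```python
def avoided_production_loss(shutdown_days, tonnes_per_day, value_per_tonne):
    """Production value lost during an unplanned shutdown."""
    return shutdown_days * tonnes_per_day * value_per_tonne

def cost_coverage(avoided_loss, program_cost):
    """How many times one avoided failure covers the program cost."""
    return avoided_loss / program_cost

loss = avoided_production_loss(shutdown_days=5, tonnes_per_day=4000,
                               value_per_tonne=60)
print(f"avoided loss: ${loss:,.0f}")

for program_cost in (150_000, 250_000):
    print(f"program cost ${program_cost:,}: "
          f"covered {cost_coverage(loss, program_cost):.1f}x")
```

Substituting a 7-day shutdown or a different plant capacity shows how sensitive the case is to the assumed outage length.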

Get an ROI estimate scoped to your plant configuration.

The CMMS Layer Your Edge AI Needs

On-Premise AI Predicts It. CMMS Tracks, Assigns, and Closes the Loop.

Oxmaint CMMS is the operational layer that converts your Jetson edge AI predictions into scheduled work orders, documents technician findings, and feeds outcome data back to your AI model — so the system gets smarter with every prediction it makes.