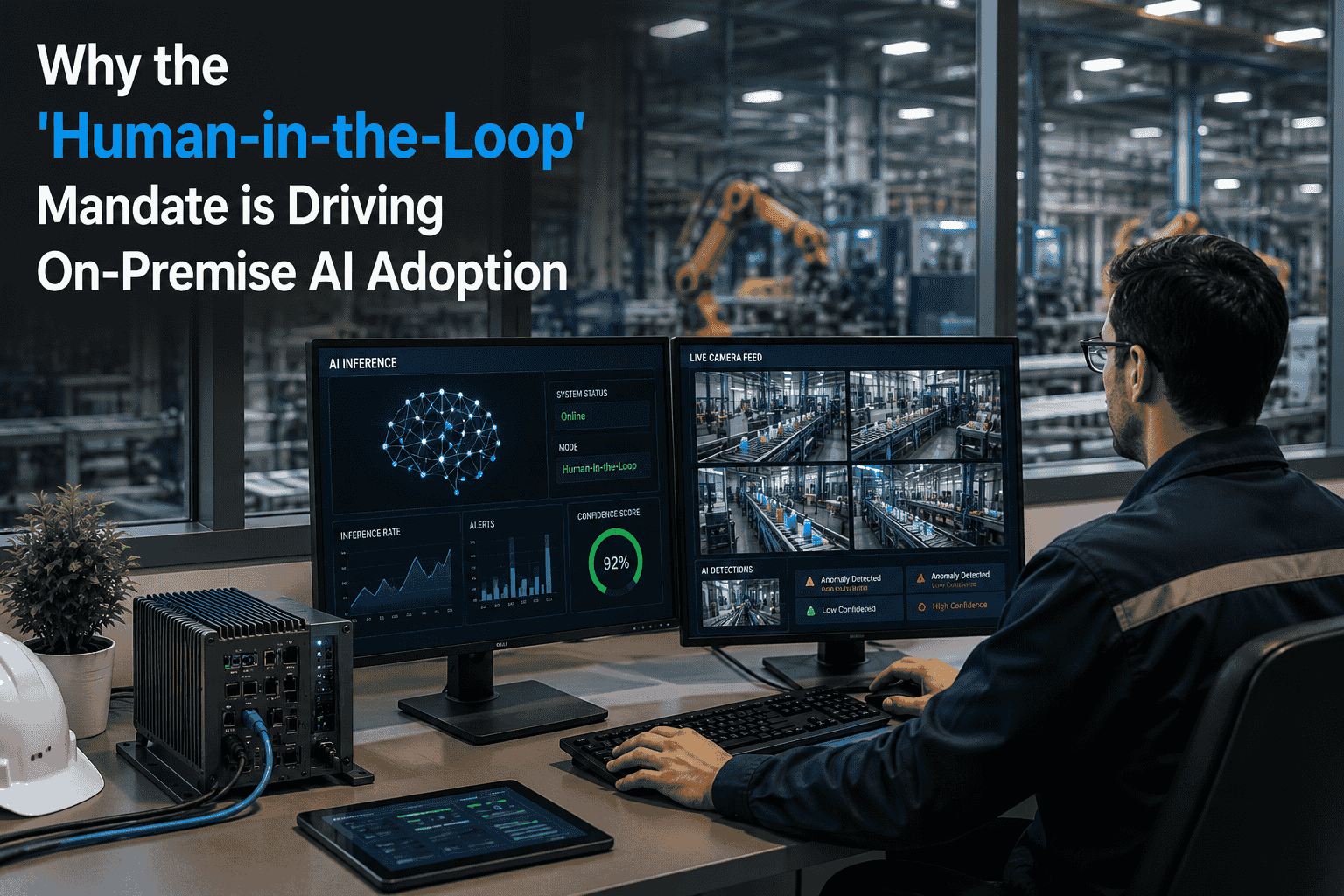

Not every byte of data should leave your building. In industrial maintenance, the sensor readings from a turbine, the vibration signatures from a compressor, and the failure predictions for a reactor vessel all carry operational intelligence that regulators, customers, and competitive reality say must stay on-site. On-premise AI servers exist for exactly this reason — they deliver the processing power of cloud AI with the data sovereignty, low latency, and compliance assurance that sensitive industrial operations demand. When paired with a maintenance platform like Oxmaint and connected to SAP, on-premise AI turns raw plant-floor data into real-time predictions without a single packet leaving your firewall. Start your free Oxmaint trial — deployable on-premise or in the cloud, your choice. Or book a demo to see Oxmaint running on-premise AI for predictive maintenance with full data sovereignty.

Infrastructure Guide

On-Premise AI Servers: Data Sovereignty & High-Performance Edge AI

Why the fastest, most secure path to industrial AI keeps your data exactly where it belongs — inside your own walls.

Your data. Your servers. Your AI.

The Three Forces Driving On-Premise AI

The cloud is not the enemy. But for a growing category of industrial workloads, the cloud is the wrong architecture. Three converging forces are pushing enterprises to deploy AI inference — and increasingly AI training — on their own hardware, inside their own perimeters. Understanding which force applies to your operation determines whether on-premise AI is a preference or a requirement.

Latency

A vibration anomaly on a turbine bearing needs to be detected in milliseconds, not the 80–200ms round-trip to a cloud data centre. On-premise inference runs in under 5ms — fast enough for real-time control loops and safety-critical shutdown decisions.

<5ms on-premise vs 80–200ms cloud round-trip

Data Sovereignty

GDPR, China's PIPL, India's DPDPA, and sector-specific regulations in energy, defence, and healthcare require that certain data categories never leave national or facility boundaries. On-premise AI processes data where it was created — no cross-border transfer, no third-party access.

0 bytes leaving the facility perimeter

Bandwidth & Volume

A single vibration sensor sampling at 50 kHz produces 4.3 GB per day. Multiply by thousands of sensors and sending raw data to the cloud becomes economically absurd. On-premise AI processes at the source and sends only insights upstream.

4.3 GB/day per sensor at high-frequency sampling

On-Premise vs Cloud vs Hybrid: The Decision Matrix

The choice is not binary. Most mature enterprises end up with a hybrid pattern — on-premise for inference and sensitive workloads, cloud for training and non-sensitive analytics. The matrix below maps seven common industrial AI workloads to their optimal deployment tier based on latency, sovereignty, and cost constraints.

| AI Workload |

On-Premise |

Edge |

Cloud |

Why |

| Real-time anomaly detection |

Best |

Good |

Poor |

Latency below 10ms required for control loops |

| Failure prediction (RUL) |

Best |

Good |

Good |

Sensitive asset data; moderate latency tolerance |

| Model training (initial) |

Good |

Poor |

Best |

GPU-intensive; burst capacity; data can be anonymized |

| Safety system monitoring |

Best |

Best |

Poor |

Cannot depend on internet connectivity for safety |

| Work order optimization |

Best |

Poor |

Good |

Needs full SAP + Oxmaint dataset; moderate latency ok |

| Quality inspection (vision) |

Good |

Best |

Poor |

High bandwidth imagery; line-speed inference required |

| Enterprise analytics |

Good |

Poor |

Best |

Multi-site aggregation; not latency sensitive |

The On-Premise AI Server Stack

A production-grade on-premise AI deployment is not just a GPU in a rack. It is a four-layer stack — hardware at the bottom, orchestration in the middle, the AI model layer, and the application layer at the top where Oxmaint translates predictions into maintenance actions. Each layer has specific technology choices and sizing considerations.

Application

Oxmaint + SAP Integration

Work order generation, technician dispatch, SAP posting, dashboard rendering, mobile delivery

AI Models

Inference & Edge Training

Anomaly detection, RUL prediction, failure classification, condition scoring, optimization agents

Orchestration

Model Serving & Data Pipeline

Model registry, inference scheduling, time-series ingestion, feature store, monitoring

Hardware

Compute, Storage & Network

GPU servers, NVMe storage, 25GbE networking, UPS, environmental controls, physical security

Data Sovereignty by Regulation

Data sovereignty is not one global rule. It is a patchwork of national and sector-specific regulations, each with different requirements about where data can be stored, who can access it, and when it can cross borders. On-premise AI eliminates this complexity by processing everything locally — no data transfers, no third-party processors, no cross-border data flow assessments.

EU

GDPR

Personal data of EU residents

Data processing agreements, transfer impact assessments, adequacy decisions for cross-border flows

On-premise: No cross-border transfer needed

CN

PIPL + DSL

All personal data + "important data" categories

Government security assessment required before cross-border transfer of important data

On-premise: Data stays in-country by design

IN

DPDPA

Digital personal data of Indian citizens

Government may restrict transfer to specific countries; sector rules layer on top

On-premise: Compliance without monitoring transfers

US

Sector Rules

ITAR (defence), HIPAA (health), FERC/NERC (energy)

Controlled unclassified information; critical infrastructure data; patient health records

On-premise: Meets all sector-specific localization rules

Performance Benchmarks: On-Premise vs Cloud

The performance argument for on-premise AI is not theoretical. These benchmarks are drawn from actual industrial deployments running predictive maintenance inference across vibration, temperature, and pressure sensor streams — the exact workloads Oxmaint processes for condition monitoring.

Availability During Internet Outage

100% operational

On-Premise

3-Year TCO (100-asset deployment)

Deploy On Your Terms

Oxmaint deploys on-premise, in the cloud, or hybrid — same platform, your infrastructure choice

Unlike cloud-only maintenance platforms, Oxmaint gives you full deployment flexibility. Run AI inference on your own GPU servers, keep SAP data inside your firewall, and let technicians use the same mobile app regardless of where the backend lives.

Sizing Guide: What Hardware You Actually Need

One of the biggest barriers to on-premise AI is overestimating the hardware required. Modern inference-optimized hardware means that a predictive maintenance deployment for 100–500 assets fits in a single rack unit. Here is the sizing guidance based on actual Oxmaint on-premise deployments across industrial environments.

Small

10–100 assets

GPU1x NVIDIA T4 or L4

CPU16-core Xeon or EPYC

RAM64 GB ECC

Storage2 TB NVMe + 8 TB HDD

Rack1U–2U server

Power350–500W typical

$12K–$25K hardware

Medium

100–500 assets

GPU2x NVIDIA A30 or L40

CPU32-core Xeon or EPYC

RAM128 GB ECC

Storage4 TB NVMe + 24 TB HDD

Rack2U–4U server

Power800W–1.2kW typical

$35K–$65K hardware

Large

500–5,000 assets

GPU4x NVIDIA A100 or H100

CPU64-core dual-socket

RAM512 GB ECC

Storage16 TB NVMe + 100 TB

RackMulti-node cluster

Power4–8kW typical

$120K–$350K hardware

Security Architecture: Defense in Depth

On-premise AI does not automatically mean secure AI. The server still needs to be hardened, the data pipeline encrypted, and the model endpoints protected. Here are the five security layers that distinguish a production-grade on-premise AI deployment from a GPU someone plugged in under a desk.

Layer 1

Physical Security

Locked server room, badge access, environmental monitoring, tamper-evident enclosures, video surveillance

Layer 2

Network Isolation

Dedicated VLAN for AI workloads, firewall rules blocking outbound data, micro-segmentation from OT networks

Layer 3

Data Encryption

AES-256 at rest, TLS 1.3 in transit, encrypted model weights, secure key management with HSM

Layer 4

Access Control

RBAC for model endpoints, API token scoping, SAML/OAuth integration, audit logging of all access

Layer 5

Model Governance

Version control, drift detection, approval workflows for model updates, reproducible training pipelines

Where Oxmaint Sits in On-Premise Deployments

Oxmaint is deployment-agnostic by design. The same platform, same mobile app, same SAP connector, and same AI model library run identically whether the backend is hosted in a cloud region, on your own servers, or split across both. For on-premise deployments, Oxmaint provides three specific capabilities that cloud-only platforms cannot match.

01

Local AI Inference Engine

Oxmaint's predictive maintenance models run directly on your on-premise GPU hardware. Sensor data never leaves the facility. Predictions generate work orders inside your firewall in under 5ms.

02

Air-Gapped SAP Connectivity

Oxmaint's certified SAP connector operates over your internal network only. Asset masters, work orders, parts data, and cost postings flow between Oxmaint and SAP without any external network dependency.

03

Offline-First Mobile App

Technicians use Oxmaint's mobile app in areas with zero connectivity — turbine halls, basements, offshore platforms. Data syncs to the on-premise server when connection returns, not to a cloud endpoint.

Your Infrastructure, Your Rules

See Oxmaint running on-premise with SAP integration and AI inference in a live demo

In 30 minutes, we walk through the exact hardware requirements, network topology, and deployment steps for running Oxmaint + AI on your servers — with full SAP PM, MM, and FI/CO integration inside your firewall.

Frequently Asked Questions

Does Oxmaint support full on-premise deployment including AI inference?

Yes. Oxmaint deploys entirely on-premise — the application server, database, AI inference engine, and SAP connector all run on your hardware inside your network. No data leaves your facility. The same mobile app and dashboards work identically regardless of deployment mode.

What GPU hardware does Oxmaint require for on-premise AI?

For deployments under 100 assets, a single NVIDIA T4 or L4 GPU is sufficient. Larger deployments (100–500 assets) typically use 2x A30 or L40 GPUs. Enterprise-scale operations (500+ assets) use A100 or H100 clusters. Oxmaint's models are optimized for inference efficiency.

Book a demo to get sizing guidance for your asset count.

Can we run Oxmaint in a hybrid mode with some workloads on-premise and others in the cloud?

Yes. The most common hybrid pattern keeps AI inference and SAP connectivity on-premise while using cloud for model training, multi-site analytics, and non-sensitive reporting. Oxmaint's architecture supports this split natively.

How does on-premise Oxmaint handle software updates?

Updates are delivered as versioned packages that your IT team reviews and deploys on your schedule. No automatic cloud-pushed updates. Rollback capability included with every release. Air-gapped environments receive updates via secure media transfer.

Is on-premise AI more expensive than cloud over time?

For sustained inference workloads (running 24/7 against sensor streams), on-premise typically reaches cost parity within 12–18 months and is significantly cheaper at 3 years. Cloud is more economical for burst training workloads. Most enterprises use both.

Start a free trial to model your specific cost comparison.

Does on-premise deployment affect Oxmaint's mobile app functionality?

Not at all. The Oxmaint mobile app connects to whichever backend your organization runs — cloud, on-premise, or hybrid. Offline capability works identically. Technicians see no difference in the field experience regardless of deployment architecture.

Oxmaint: The Maintenance AI Platform That Deploys Where You Need It

On-premise, cloud, or hybrid — same powerful platform, same SAP integration, same predictive AI, same mobile experience. Data sovereignty, low latency, and full compliance without sacrificing a single feature. Your infrastructure, your rules.