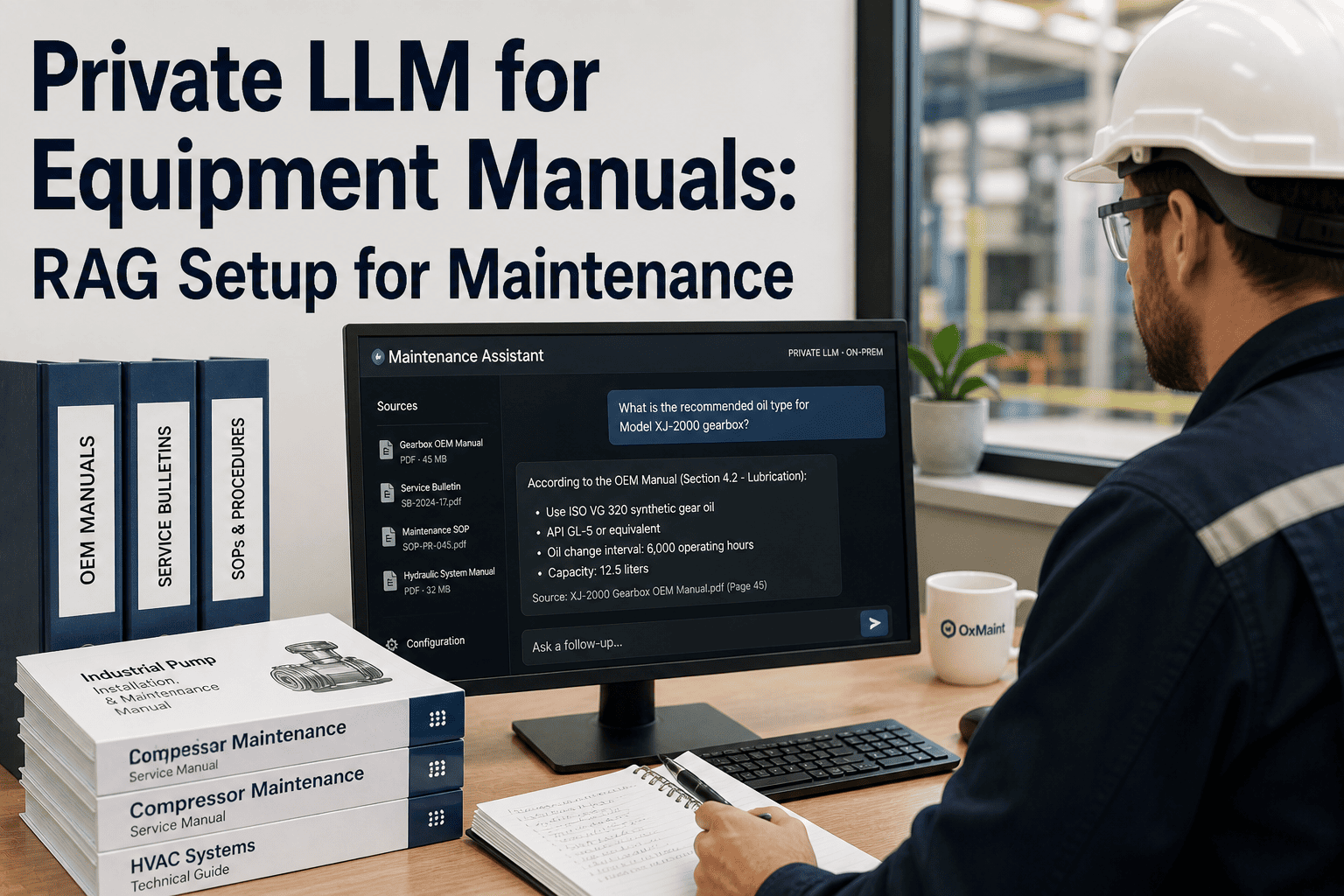

A technician stands in front of a 12-year-old compressor at 2 AM. The fault code is unusual. The OEM manual is a 400-page PDF on a shared drive no one has indexed. The senior engineer who knew this machine retired in March. Without fast, accurate answers from institutional knowledge, that technician guesses — and guesses in maintenance cost money, time, and sometimes safety. A private LLM built over your equipment manuals, OEM bulletins, and SOPs using Retrieval-Augmented Generation (RAG) solves this problem permanently. OxMaint's on-prem AI maintenance platform integrates RAG-based manual retrieval directly into the technician workflow — turning 40 years of equipment documentation into instant, cited answers on any connected device.

Live · Maintenance LLM · On-Premises AI

Upcoming Oxmaint AI Live Webinar- Private LLM for Equipment Manuals: RAG Setup for Maintenance

Build a RAG system over OEM manuals, SOPs, and bulletins — on-prem, private, fast technician answers

MAY 12, 2026 · 5:30 PM EST · Orlando

OxMaint AI Live Webinar — Deploy Private LLM on Your Existing Maintenance Data in One Session

RAG Query · Live

"What is the torque spec for BF-220 coupling bolt at 80°C operating temp?"

Answer · Source: BF-220 OEM Manual p.147

Torque spec: 85 Nm dry / 72 Nm with thread lubricant. Above 75°C, reduce by 15% per thermal expansion guidance.

0.6s · On-prem · No cloud

73%

Faster fault diagnosis with RAG vs manual search

400+

Pages per OEM manual — impossible to memorise

0

Data leaves your network — fully on-prem

60 days

Typical deployment to production-ready system

Why Standard Search Fails Maintenance Teams

01

Keyword Search Returns the Document, Not the Answer

A technician searching "coupling torque BF-220" gets a 400-page PDF. The answer is on page 147. In a time-critical repair, navigating that document costs 20–40 minutes per fault event.

02

Expert Knowledge Walks Out When Engineers Retire

Senior maintenance engineers carry 10–15 years of asset-specific knowledge that was never documented. When they leave, that knowledge disappears — RAG over historical work orders and annotations preserves it permanently.

03

Cloud AI Cannot Touch Proprietary OEM Data

Equipment manuals, SOPs, and OEM service bulletins contain sensitive IP. Sending them to a cloud LLM violates most OEM licensing agreements and creates data governance risk. On-prem RAG solves this — nothing leaves the network.

04

Hallucination Risk in Generic LLMs Is Unacceptable

A general-purpose LLM answering a torque specification question without retrieval grounding will confabulate. In maintenance, a wrong specification answer can cause equipment damage or injury. RAG grounds every answer in cited source documents.

RAG Architecture for Maintenance — How It Works

Document Ingestion

OEM Manuals (PDF)

Service Bulletins

SOPs & Work Instructions

Closed Work Orders

P&ID Diagrams

↓

Embedding & Vector Store

Chunked at 512 tokens

Embedded via sentence-transformers

Stored in Qdrant / pgvector / Weaviate

Metadata: asset ID, doc type, revision date

↓

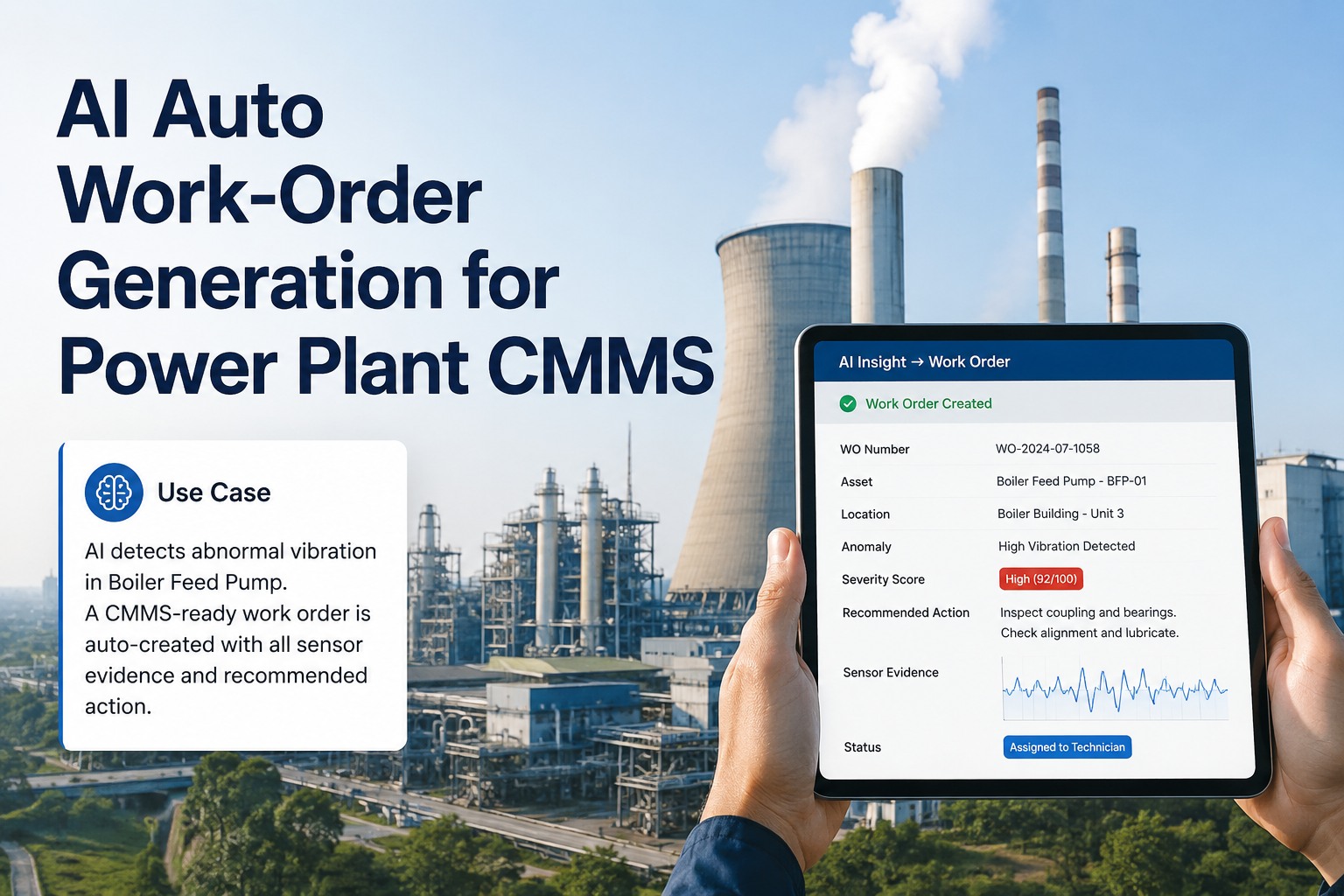

Inference & Answer

Top-K retrieval (K=5–8 chunks)

LLM: Mistral 7B / LLaMA 3 / Phi-3 (on-prem GPU)

Answer + cited source + page reference

Work order auto-populated if fault resolved

Component Selection Guide — Build vs Configure

| Component |

Recommended Option |

Alternative |

Notes for Maintenance Use |

| Embedding Model |

sentence-transformers/all-MiniLM-L6-v2 |

BGE-M3, E5-large |

MiniLM fast enough for mobile technician queries; BGE better for multilingual plants |

| Vector Database |

Qdrant (on-prem Docker) |

pgvector, Weaviate |

Qdrant handles 500K+ chunks with sub-50ms retrieval; pgvector if PostgreSQL already deployed |

| LLM (On-Prem) |

Mistral 7B Instruct |

LLaMA 3 8B, Phi-3 Mini |

Mistral 7B fits on single A10G/RTX 3090; Phi-3 Mini for CPU-only deployments |

| GPU Sizing |

NVIDIA A10G (24 GB VRAM) |

RTX 3090, RTX 4090 |

24 GB handles 7B model at 4-bit quant; 13B requires 2× 24 GB or A100 |

| Orchestration |

LangChain + FastAPI |

LlamaIndex, Haystack |

LangChain best for multi-document chains; LlamaIndex simpler for pure RAG pipelines |

| PDF Parsing |

PyMuPDF + Unstructured.io |

Apache Tika, pdfplumber |

Unstructured handles scanned OEM PDFs with OCR; essential for pre-2005 manuals |

GPU Sizing Reference — Production Maintenance RAG

Entry

RTX 3090 / 4090

24 GB VRAM

Mistral 7B · 4-bit quant

Suitable for single-facility deployments up to 50 concurrent technician queries. Latency: 1.2–2.0 seconds per answer.

Recommended

NVIDIA A10G

24 GB VRAM · Server-grade

Mistral 7B / LLaMA 3 8B

Handles 150–200 concurrent queries. Sub-second retrieval, 0.8–1.5s generation. Optimal for multi-shift plants with 50–300 technicians.

Enterprise

NVIDIA A100 80G

80 GB VRAM

LLaMA 3 70B · Full precision

Multi-facility enterprise deployment. 300+ concurrent users, sub-500ms full pipeline. Required for 70B parameter models without quantisation.

What Gets Indexed — Document Types and Coverage

01

OEM Equipment Manuals

Torque specs, clearance tolerances, lubrication intervals, part numbers, wiring diagrams

PDF · Scanned · HTML

02

Service Bulletins & ECNs

OEM-issued field updates, safety recalls, upgraded component specifications, revised procedures

PDF · Email archives

03

SOPs & Work Instructions

Step-by-step maintenance procedures, lockout/tagout sequences, inspection checklists, permit requirements

DOCX · PDF · SharePoint

04

Historical Work Orders

Past failure resolutions, technician notes, parts used, root cause findings — institutional knowledge made searchable

CMMS export · CSV · JSON

"

The knowledge gap in industrial maintenance is not a training problem — it is a retrieval problem. We train technicians well. What fails them at 2 AM is access: the right page of the right manual, the note from the engineer who fixed this exact failure mode four years ago, the service bulletin that superseded the original torque spec. RAG solves retrieval. When a Mistral 7B model indexed over 800 equipment documents answers a technician's query in under a second with a page citation, the quality outcome is not just speed — it is confidence. Technicians who trust their information source make better decisions under pressure. The plants that deploy this correctly see measurable reductions in MTTR within 60 days, not because the work is different, but because the knowledge is available exactly when it is needed.

Dr. Anke Bremer, PhD (Comp. Eng.), CMRP

Lead Maintenance AI Architect — formerly Siemens Energy Digital Industries · 19 Years Industrial AI and CMMS Integration · Certified Maintenance and Reliability Professional (SMRP) · Research focus: RAG systems for industrial asset management and technician knowledge augmentation

60-Day Deployment Roadmap

Days 1–14 · Discovery

Audit all existing documentation: OEM PDFs, SOPs, CMMS exports, scanned manuals

Classify by asset ID, doc type, and revision date for metadata tagging

Select vector DB and LLM based on GPU inventory and concurrent user estimate

Days 15–30 · Ingestion Pilot

Ingest highest-priority asset documents (top 20 critical assets by downtime history)

Run chunking, embedding, and vector store indexing — validate retrieval accuracy on test queries

Pilot with 5–10 technicians on shift — measure MTTR and query response confidence

Days 31–60 · Production Scale

Ingest full document library — all OEM manuals, SOPs, bulletins, historical work orders

Integrate RAG answers into OxMaint mobile work order interface — technician queries without context switching

Configure auto-population: resolved fault answers suggested to work order description field

Frequently Asked Questions

Can a private LLM handle scanned OEM manuals that are not text-searchable PDFs?

Yes — with an OCR preprocessing step. Tools like Unstructured.io and PyMuPDF combined with Tesseract OCR convert scanned PDFs to text before chunking and embedding. Most pre-2005 OEM manuals were scanned rather than digitally created, and OCR accuracy on clean industrial document scans typically reaches 96–99%. After OCR, the pipeline is identical to native PDF processing. OxMaint's ingestion pipeline includes scanned document support as a standard module — no separate tooling setup is required.

Start a free trial to see document ingestion in action.

How does RAG prevent LLM hallucination in maintenance-critical answers?

RAG anchors every LLM response to retrieved document chunks — the model is instructed to answer only from the retrieved context and to cite its source. If the answer is not present in the retrieved documents, a well-configured RAG system returns "no information found in indexed documents" rather than generating a plausible but incorrect response. This is enforced through the system prompt and validated during deployment testing with known-answer queries across each asset's manual. Citation display (document name, section, page number) in the technician interface allows instant cross-check of any answer that affects safety-critical work.

Book a demo to see OxMaint's hallucination guardrail configuration.

What happens to query data — does the private LLM send any data to the cloud?

In a correctly deployed on-prem RAG system, no query data, document content, or retrieved answers leave the facility network. The embedding model, vector database, and LLM inference engine all run on local hardware. The only external dependency is the initial model download during setup — after which the system operates in full air-gap mode if required. This makes private LLM deployment compatible with OEM licensing agreements that prohibit third-party data sharing, ISO 27001 data governance requirements, and regulated industry environments in defence, pharma, and energy. OxMaint's deployment guide covers network isolation configuration for fully air-gapped environments.

Explore OxMaint's on-prem AI deployment options with a free trial.

OxMaint · Private LLM · On-Prem RAG

Stop Searching Through 400-Page Manuals. Start Getting Answers in Under a Second.

OxMaint's private RAG deployment gives every technician instant, cited answers from your own equipment manuals — on-prem, zero cloud, no hallucination risk.