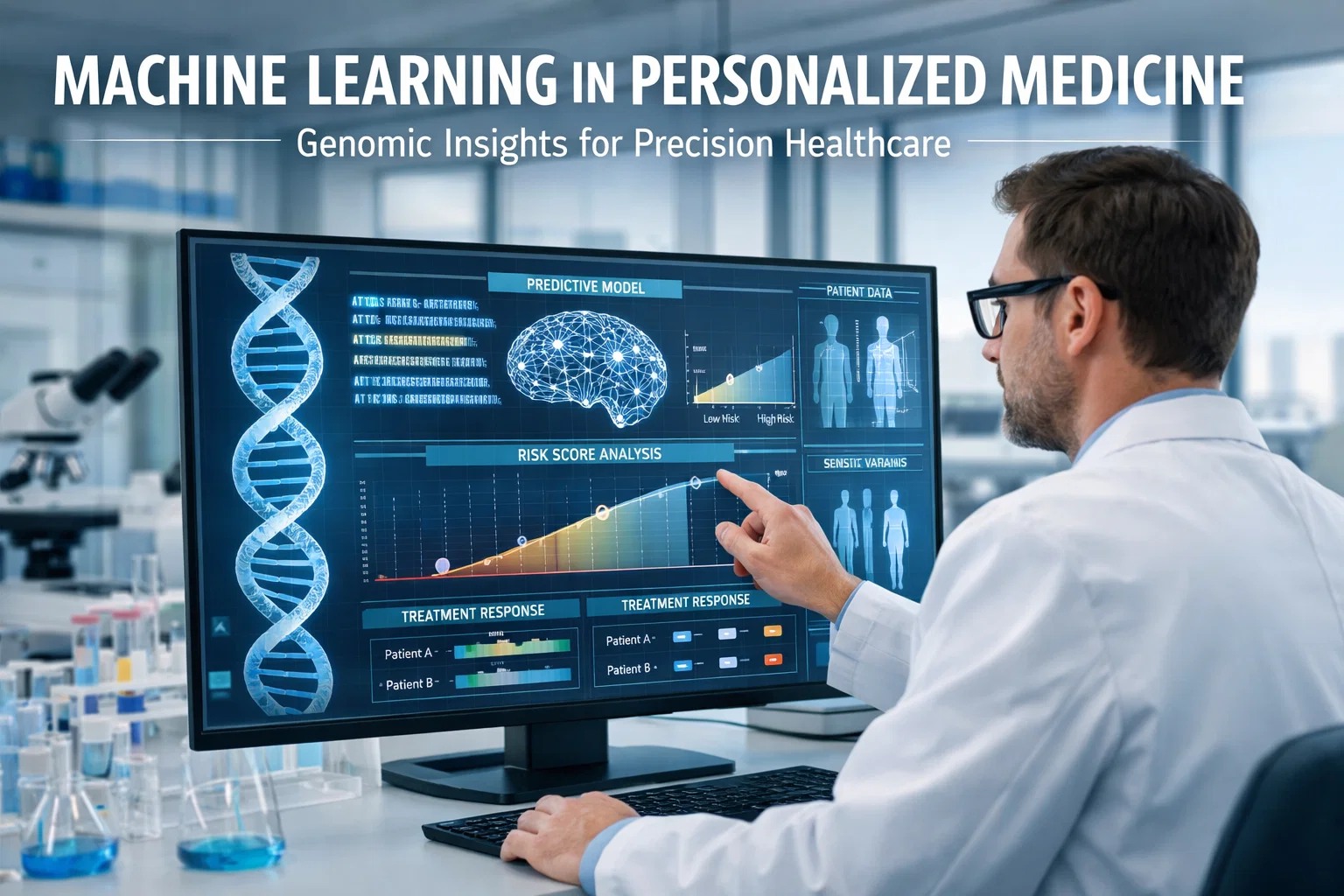

Machine learning is reshaping how clinicians understand, diagnose, and treat disease, moving healthcare from population averages to individual biological truth. For decades, medicine treated the average patient. Today, algorithms trained on billions of genomic data points are enabling treatments tailored to each person's unique biology: their DNA, their clinical history, their drug metabolism profile. This convergence of ML and genomics is not a future promise; it is happening in cancer wards, rare disease clinics, and NICUs right now.

The precision medicine market is projected to reach $26 billion by 2028, driven by the ability of ML to process what no human team ever could: 3.2 billion base pairs, 4-5 million variants per genome, and petabytes of multi-omic data, analyzed in hours, not months. Whether you are a researcher, clinician, or healthcare technology leader, understanding how machine learning is reshaping genomic medicine is essential. Sign up free to explore how Oxmaint applies the same predictive intelligence to physical asset operations, or book a demo and see it in action for your facility.

The Same Intelligence — Applied to Your Assets

Oxmaint brings the predictive power of data-driven decision making to facility and asset management. Condition-based triggers, rolling CapEx forecasting, and real-time asset health — built for multi-site operations. No heavy onboarding. No guesswork.

What Is Machine Learning in Personalized Medicine?

Personalized medicine — also called precision medicine — moves healthcare away from one-size-fits-all protocols. Instead of treating the average patient, clinicians treat the actual patient: their genome, their history, their biochemistry.

Machine learning is the engine that makes this possible at scale. Raw genomic data is massive, multi-dimensional, and deeply non-linear. Classical statistical models break down under the weight of 4-5 million variants per genome. ML algorithms — from deep neural networks to gradient-boosted decision trees — identify patterns across millions of genetic variants, correlating them with disease outcomes, drug responses, and survival rates.

The result: a clinician who can act on biological truth, not population averages. Every data point becomes actionable intelligence. Want to see how data-driven decision-making transforms operations? Start a free trial or book a demo to see data intelligence at work in your facility.

ML in personalized medicine = algorithms trained on genomic, clinical, and phenotypic data to predict individual disease risk, optimal treatment pathways, and expected drug responses — with greater speed and accuracy than any human analyst.

Key ML Frameworks Driving Genomic Precision

Eight foundational ML approaches powering the shift from population medicine to individual medicine.

Convolutional neural networks analyze raw sequencing reads to identify pathogenic variants with up to 99.7% specificity, outperforming rule-based callers.

Tree-based ensembles such as gradient-boosted decision trees handle high-dimensional genomic feature sets, identifying the most predictive SNPs from millions of candidates with built-in feature importance scoring.

Natural language processing extracts phenotypic data from unstructured clinical notes, linking symptoms and diagnoses to genomic profiles for richer predictive models.

ML-enhanced Cox models and DeepSurv algorithms predict disease onset timelines, giving clinicians a proactive window to intervene before symptoms appear.

Polygenic risk scoring aggregates thousands of low-effect variants into a single predictive score. ML refines these scores using biobank data from millions of individuals.

GANs generate privacy-preserving synthetic genomes for training larger models without compromising patient data — solving data scarcity in rare disease research.

Models pretrained on large cancer genomic datasets transfer knowledge to rare diseases where sample sizes are insufficient for training from scratch.

Hospitals train shared models without sharing raw patient data. A 2024 consortium study showed federated ML matched centralized model accuracy within 1.3%.

Critical Pain Points in Genomic Medicine Without ML

The problems that persist when genomic data is analyzed without intelligent automation.

A single whole-genome sequence produces 4-5 million variants. Manual clinical interpretation takes weeks per case. Without ML, analysis cannot scale to population need.

Without pharmacogenomic ML, 30-50% of patients fail their first-line treatment. Each failed course costs time, money, and patient health — especially in oncology and psychiatry.

Genomic labs, EHRs, imaging systems, and wearable devices generate data in isolation. Without integration, up to 80% of potentially predictive signals are never analyzed.

The average rare disease patient waits 4.8 years for a correct diagnosis. Rare variants are missed by standard pipelines; ML models identify them 6x faster.

Over 78% of genomic research has focused on European-ancestry populations. Population-blind models carry risk stratification errors for underrepresented groups exceeding 35%.

Clinical genomic AI tools require rigorous validation before deployment. Without structured pipelines, institutions face FDA/CE mark barriers and liability exposure in clinical decision support.

Where ML Genomics Is Delivering Real Clinical Outcomes

Active deployment areas where machine learning is reshaping care pathways today — with measurable, documented results.

ML classifies tumor subtypes from somatic mutation profiles, predicts immunotherapy response with a 62% accuracy improvement over clinical staging alone, and identifies actionable driver mutations in liquid biopsies with 94% sensitivity.

CYP2D6, CYP2C19, and SLCO1B1 variant classifiers guide dosing for antidepressants, anticoagulants, and statins. Institutions implementing ML-driven PGx protocols report 38% fewer medication-related adverse events.

Polygenic risk scores for coronary artery disease, trained on UK Biobank and FinnGen data, identify high-risk individuals 10-15 years before symptom onset — enabling preventive intervention windows unavailable through traditional risk factors alone.

Phenotype-driven ML tools match patient symptom clusters to likely genetic diagnoses. Diagnostic yield in undiagnosed rare disease programs increased from 25% to 41% with ML augmentation — a 64% improvement in actionable outcomes.

Rapid whole-genome sequencing combined with ML triaging reduces diagnosis-to-treatment time for critically ill neonates from 23 days to under 26 hours in leading NICU programs.

APOE4 and LRRK2 variant models combined with proteomics and imaging predict Alzheimer's and Parkinson's risk 8-12 years ahead of clinical presentation — with enough lead time for disease-modifying therapies to intervene.

These breakthroughs share a common thread: structured data pipelines, predictive intelligence, and platforms that translate insight into action. Bring that same intelligence to your operations — start a free trial of Oxmaint today or book a demo to speak directly with our team.

Traditional Genomic Analysis vs ML-Driven Precision Medicine

The gap between legacy methods and machine learning-powered genomics is not incremental — it is transformational.

| Metric | Traditional Approach | ML-Powered Precision Medicine |
|---|---|---|
| Variant Analysis Speed | 2-4 weeks per genome | Under 8 hours for full WGS |
| Diagnostic Accuracy | ~65% sensitivity in complex cases | Up to 97% with multi-modal ML |
| Drug Response Prediction | Trial-and-error; 1-3 medication changes | First-prescription accuracy 78%+ with PGx ML |
| Data Sources Integrated | Genome only | Genome + transcriptome + EHR + imaging + wearables |
| Rare Disease Diagnosis | Average 4.8 year diagnostic odyssey | Diagnosis within days; 6x faster resolution |
| Population Coverage | Primarily European-ancestry datasets | Multi-ancestry training with bias correction |
| Scalability | Bottlenecked by clinical geneticist capacity | Scales to millions of analyses simultaneously |
| Cost Per Diagnosis | $3,500-$8,000 average | Projected under $500 by 2027 |

Measurable Impact: ML Precision Medicine in Numbers

Predictive intelligence at this scale mirrors what Oxmaint delivers for physical assets — structured data, smart triggers, and decisions made before failure occurs. Ready to apply the same logic to your operations? Start a free trial or book a demo and see it firsthand.

Stop Reacting. Start Predicting.

The same data-driven logic that transformed genomic medicine — condition-based triggers, multi-source data integration, predictive analytics — is what Oxmaint brings to your equipment and facilities. Over 1,200 operations teams across 6 countries trust Oxmaint to cut reactive maintenance costs, extend asset life, and produce investor-grade CapEx forecasts.

Frequently Asked Questions

What types of machine learning are most effective for genomic data analysis?

Deep learning architectures — particularly convolutional neural networks and transformers — are most effective for raw sequence data. Gradient-boosted ensemble methods like XGBoost and LightGBM perform best for structured variant-feature tables. For survival prediction, ML-enhanced Cox proportional hazards models and architectures like DeepSurv outperform classical statistical methods. The most robust clinical systems use ensemble approaches combining multiple model types, achieving accuracy scores 15-25% above any single architecture operating alone.
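
The ensemble idea in this answer can be illustrated with a minimal soft-voting sketch. It assumes each model has already produced a pathogenicity probability per variant; the function name `soft_vote`, the three model names, and every number are hypothetical and chosen only for illustration. Production systems typically use more elaborate stacking or calibration.

```python
def soft_vote(model_probs, weights=None):
    """Combine per-variant probabilities from several models by weighted average.

    model_probs: list of lists, one inner list of probabilities per model.
    weights: optional per-model weights (hypothetical, e.g. validation scores).
    """
    if weights is None:
        weights = [1.0] * len(model_probs)
    total_weight = sum(weights)
    n_samples = len(model_probs[0])
    combined = []
    for i in range(n_samples):
        weighted_sum = sum(w * probs[i] for w, probs in zip(weights, model_probs))
        combined.append(weighted_sum / total_weight)
    return combined


# Made-up outputs from three hypothetical models scoring the same four variants.
sequence_model = [0.9, 0.2, 0.7, 0.1]   # e.g. a CNN on raw sequence
tabular_model = [0.8, 0.3, 0.5, 0.2]    # e.g. gradient-boosted trees
clinical_model = [0.7, 0.1, 0.6, 0.3]   # e.g. a model on clinical features

combined = soft_vote([sequence_model, tabular_model, clinical_model])

# Flag a variant when the averaged probability reaches an illustrative threshold.
calls = [p >= 0.5 for p in combined]
print(calls)
```

Averaging probabilities rather than hard votes lets a confident model outweigh two uncertain ones, which is one reason soft voting is a common starting point before moving to learned stacking.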

How does federated learning protect patient genomic privacy while enabling ML model training?

Federated learning trains models at each participating institution using only local data. Only model weight updates, not raw genomic records, are shared with the central aggregation server. This architecture is GDPR and HIPAA compatible by design. A 2024 multi-hospital consortium demonstrated that federated models trained across eight institutions without sharing a single patient record achieved accuracy within 1.3% of centrally trained models. Differential privacy techniques further protect against reconstruction attacks by introducing calibrated noise into shared model updates.
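
As a rough sketch of the weight-sharing loop described in this answer, the toy example below runs federated averaging on a one-feature linear model. Everything here is an assumption made for illustration: the function names, the three synthetic "hospital" datasets, and the learning rate. It omits secure aggregation and differential-privacy noise, so it is not a deployable design.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Run a few gradient-descent steps on one site's private data.

    Only the resulting (w, b) pair leaves the site; `data` never does.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(updates, sizes):
    """Server step: average site weights, weighted by local sample count."""
    total = sum(sizes)
    w = sum(u[0] * s for u, s in zip(updates, sizes)) / total
    b = sum(u[1] * s for u, s in zip(updates, sizes)) / total
    return (w, b)


def train_federated(site_data, rounds=50):
    """Alternate local training and server-side averaging for several rounds."""
    global_weights = (0.0, 0.0)
    sizes = [len(d) for d in site_data]
    for _ in range(rounds):
        updates = [local_update(global_weights, d) for d in site_data]
        global_weights = federated_average(updates, sizes)
    return global_weights


# Synthetic data following y = 2x + 1, split across three hypothetical sites.
site_data = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
    [(0.5, 2.0), (1.5, 4.0)],
]

w, b = train_federated(site_data)
print(round(w, 3), round(b, 3))  # should land close to the true slope 2 and intercept 1
```

The server only ever sees `(w, b)` pairs and sample counts, which mirrors the privacy property described above; a real deployment would add clipping and calibrated noise to those updates.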

What is a polygenic risk score and how does machine learning improve it?

A polygenic risk score (PRS) aggregates the small effect sizes of thousands of common genetic variants to estimate an individual's genetic predisposition to a complex disease. Classical PRS methods use GWAS summary statistics directly. ML improves PRS by incorporating non-linear interaction effects between variants, integrating PRS with clinical and lifestyle data for multi-modal risk scores, and using biobank populations of 500,000+ individuals for training. ML-refined PRS models for coronary artery disease identify the top 8% of individuals by risk, who carry 3x the average population risk.
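
The classical score described at the start of this answer is a weighted sum of risk-allele counts. The sketch below shows that calculation only; the variant identifiers, effect sizes, and patient genotype are invented for illustration, and treating a missing variant as a dosage of zero is a simplifying assumption.

```python
def polygenic_risk_score(dosages, effect_sizes):
    """Classical PRS: sum of (effect size x risk-allele count) over variants.

    dosages: variant id -> number of risk alleles carried (0, 1, or 2).
    effect_sizes: variant id -> per-allele effect (e.g. a GWAS log odds ratio).
    Variants missing from `dosages` are treated as 0 (simplifying assumption).
    """
    score = 0.0
    for variant_id, beta in effect_sizes.items():
        score += beta * dosages.get(variant_id, 0)
    return score


# Hypothetical effect sizes and one hypothetical patient genotype.
effect_sizes = {"variant_a": 0.12, "variant_b": 0.05, "variant_c": -0.03}
patient = {"variant_a": 2, "variant_b": 1, "variant_c": 0}

score = polygenic_risk_score(patient, effect_sizes)
print(round(score, 2))
```

ML-based refinements replace or extend this linear sum, for example by learning interaction terms or by feeding the score into a model alongside clinical features.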

What are the main barriers to clinical deployment of ML genomic tools?

Four primary barriers slow deployment: regulatory validation (FDA Software as a Medical Device pathway, CE mark in Europe), data interoperability between LIMS, EHR, and genomic platforms, population bias in training datasets reducing accuracy for non-European ancestry patients, and clinician trust — with 43% of physicians citing explainability as a prerequisite for adoption. Institutions making the most progress invest in multi-ancestry biobanks, deploy explainable AI frameworks, pursue regulatory pathways proactively, and embed geneticists in ML development teams.
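
One practical way to surface the population-bias barrier is to report model performance separately for each ancestry group instead of as a single pooled figure. The sketch below computes per-group sensitivity; the group labels and records are made up, and the function name is hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return sensitivity (true positives / actual positives) for each group.

    records: iterable of (group, true_label, predicted_label) with 0/1 labels.
    Groups with no positive cases are omitted from the result.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, truth, predicted in records:
        if truth == 1:
            positives[group] += 1
            if predicted == 1:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


# Invented validation records: (ancestry group, true label, model prediction).
records = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0),
    ("group_a", 0, 0),
    ("group_b", 1, 1), ("group_b", 1, 0),
    ("group_b", 0, 1),
]

per_group = sensitivity_by_group(records)
gap = max(per_group.values()) - min(per_group.values())
print(per_group, gap)
```

A large gap between groups is a signal to revisit training data coverage before deployment, which is the kind of evidence a validation pipeline would be expected to document.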

Bring Predictive Intelligence to Your Operations

The same data-driven logic powering ML in precision medicine — structured records, condition-based triggers, predictive analytics — is what Oxmaint delivers for your equipment, facilities, and assets. No guesswork. No reactive firefighting. Just clean data, smart forecasting, and maintenance that runs ahead of failure.

Zero heavy implementation fees. Mobile-first from day one. Multi-site ready out of the box.