Every major equipment failure on your production floor was preceded by warning signs that nobody could see in time — because the data existed in sensors but the intelligence to connect those signals into a meaningful failure prediction did not. Digital twins change that equation entirely by creating a live, learning virtual replica of every critical asset that runs simulations, detects anomalies, and predicts failures before they reach the physical machine. If you are ready to explore how digital twin-powered predictive maintenance works in practice, start with Oxmaint — or book a 30-minute demo with our team to see the technology applied to your specific asset types.

Digital Twin Technology Is No Longer Experimental — It Is Generating Measurable ROI on Factory Floors Right Now

The global digital twin market is projected to grow from $21.14 billion in 2025 to $149.81 billion by 2030, a 47.9% compound annual growth rate driven almost entirely by demonstrated ROI in manufacturing rather than by pilot programs or academic research.
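The growth rate follows from the two market figures through the standard compound annual growth rate formula. A minimal sketch of that check, assuming a five-year span from 2025 to 2030; the function name `cagr` is illustrative only:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of dollars, as quoted in the text.
rate = cagr(start_value=21.14, end_value=149.81, years=5)
print(f"{rate:.1%}")  # prints 47.9%
```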

What a Digital Twin Actually Is — and What It Is Not

A digital twin is a continuously updated virtual model of a physical asset — a motor, a conveyor, a CNC machine, an entire production line — that mirrors the asset's real-time operating state using live sensor data. It is not a static 3D model. It is not a CAD drawing. It is a dynamic, data-fed simulation that learns from how the physical asset actually behaves under real operating conditions.

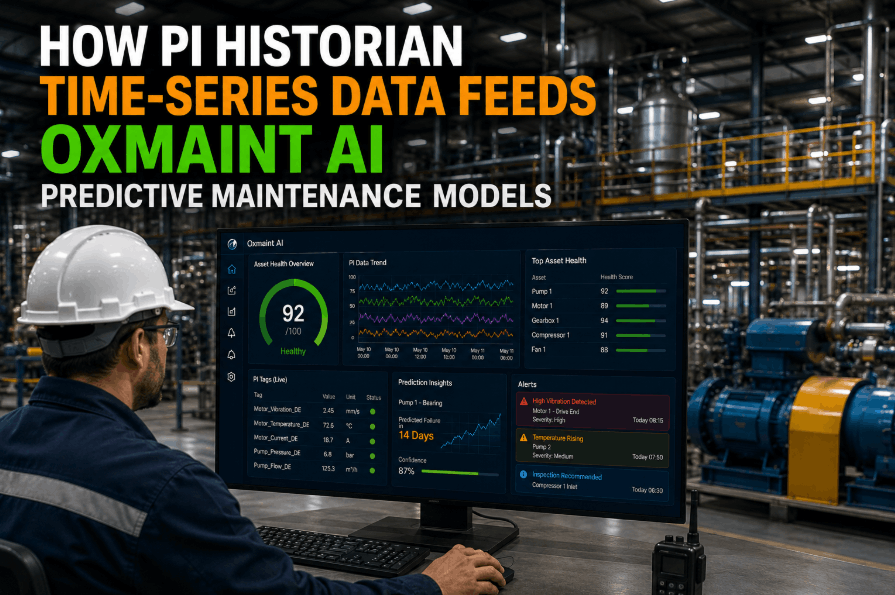

The physical asset sends data — vibration readings, temperature, current draw, pressure, cycle counts — through IoT sensors to the digital model. The model processes that data against physics-based equations, historical failure patterns, and machine learning algorithms to produce one output that matters above all others: a prediction of when and how the physical asset will next fail, and what to do about it before it does.

Six High-Impact Digital Twin Applications in Manufacturing

Digital twins are not a single application — they are a platform capability that unlocks different operational value in each context. These are the six use cases generating the strongest ROI in manufacturing environments today.

The digital twin runs continuous simulations of the physical asset's degradation trajectory. When vibration patterns, thermal signatures, or load profiles deviate from the baseline model, the twin calculates remaining useful life and triggers a maintenance work order hours or days before the failure would have stopped the line. A semiconductor plant deploying this approach eliminated unplanned calibration stops on the targeted equipment within 18 months.
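The deviation-to-work-order step can be illustrated with a deliberately simple stand-in. The sketch below uses a statistical z-score against a recorded baseline rather than a physics-based twin model, and every name in it (`WorkOrder`, `check_against_baseline`, the 3.0 limit, the sample readings) is a hypothetical choice for illustration, not an Oxmaint API:

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import Optional


@dataclass
class WorkOrder:
    asset_id: str
    reason: str


def check_against_baseline(asset_id: str, baseline: list[float],
                           reading: float, z_limit: float = 3.0) -> Optional[WorkOrder]:
    """Return a work order if the reading is far from the baseline, else None."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return None  # a flat baseline gives no scale to judge deviation
    z_score = (reading - mu) / sigma
    if abs(z_score) > z_limit:
        reason = f"reading {reading} is {z_score:.1f} standard deviations from baseline"
        return WorkOrder(asset_id, reason)
    return None


# Illustrative vibration velocities in mm/s recorded during normal operation.
baseline_mm_s = [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1]
normal = check_against_baseline("pump-7", baseline_mm_s, 2.1)
abnormal = check_against_baseline("pump-7", baseline_mm_s, 3.5)
print(normal)    # None
print(abnormal)  # a WorkOrder for pump-7
```

A real twin would replace the z-score with its own degradation model, but the contract stays the same: a reading goes in, and either nothing or a work order comes out.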

Physics-based models inside the digital twin calculate precisely how much operating life remains in critical components — bearings, seals, cutting tools, hydraulic actuators — based on actual usage intensity, not calendar intervals. This replaces the wasteful practice of replacing components on fixed schedules regardless of their actual condition. Components are replaced when the data says to, not when the calendar says to.
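As a rough illustration of a remaining-useful-life calculation, the sketch below fits a straight line to wear measurements and extrapolates to a failure threshold. Linear degradation is a simplifying assumption standing in for the physics-based models described above, and the function name, units, and sample data are hypothetical:

```python
from typing import Optional


def remaining_useful_life(hours: list[float], wear: list[float],
                          failure_threshold: float) -> Optional[float]:
    """Estimate operating hours left before wear reaches the threshold.

    Fits wear = slope * hours + intercept by least squares and extrapolates.
    Returns None when wear is not increasing, since no failure time can be
    projected from a flat or falling trend.
    """
    n = len(hours)
    mean_h = sum(hours) / n
    mean_w = sum(wear) / n
    covariance = sum((h - mean_h) * (w - mean_w) for h, w in zip(hours, wear))
    variance = sum((h - mean_h) ** 2 for h in hours)
    slope = covariance / variance
    if slope <= 0:
        return None
    intercept = mean_w - slope * mean_h
    hours_at_failure = (failure_threshold - intercept) / slope
    return max(0.0, hours_at_failure - hours[-1])


# Illustrative bearing wear index sampled every 100 operating hours.
rul = remaining_useful_life(
    hours=[0, 100, 200, 300],
    wear=[0.10, 0.20, 0.30, 0.40],
    failure_threshold=1.0,
)
print(round(rul))  # prints 600
```

The estimate depends on actual usage, not the calendar: if the asset runs harder and wear accumulates faster, the slope steepens and the remaining hours shrink.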

The twin simulates thousands of production parameter combinations — temperature setpoints, feed rates, pressure profiles, cycle timings — without touching the physical production line. Operators discover the optimal settings for quality, throughput, and energy efficiency through simulation before implementing changes. What previously required weeks of physical trials and associated scrap now takes hours of virtual simulation.
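A parameter sweep of this kind can be sketched as a grid search over a process model. The model below is a toy invented for illustration (scrap is assumed lowest at 180 °C and assumed to rise with feed rate); a real twin would supply its own simulation function in place of `simulate_good_units_per_hour`:

```python
from itertools import product


def simulate_good_units_per_hour(temperature_c: float, feed_rate: float) -> float:
    """Toy process model (an assumption, not a real physics model)."""
    scrap_rate = 0.02 + 0.0001 * (temperature_c - 180) ** 2 + 0.001 * feed_rate
    return feed_rate * 60 * (1 - scrap_rate)


temperatures_c = [170, 175, 180, 185, 190]
feed_rates = [10, 12, 14, 16]  # units per minute

# Evaluate every combination virtually, then keep the best one.
best = max(
    product(temperatures_c, feed_rates),
    key=lambda combo: simulate_good_units_per_hour(*combo),
)
print(best)  # prints (180, 16)
```

Twenty combinations are evaluated here without a single physical trial; the same loop scales to thousands of combinations when the simulation is cheap to run.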

Before a new asset is physically installed on the production floor, its digital twin validates the integration — checking for interference with adjacent equipment, simulating startup sequences, and identifying likely failure modes based on design data and operational profiles from similar assets in the fleet. Commissioning time and startup scrap are both reduced significantly because problems that would normally surface in physical startup are found and resolved in simulation first.

Digital twins of production lines model energy draw per unit of output under different operating conditions. They identify assets operating at inefficient points on their performance curve — running hotter than necessary, consuming more current than their actual workload demands, or scheduled during peak tariff periods. Energy management improvements of 12% on average have been documented in manufacturing facilities using digital twin energy models.
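Energy draw per unit of output is a simple ratio, and a first-pass screen for inefficient assets can compare each asset against its peers. The sketch below flags assets whose energy per unit exceeds the fleet median by a tolerance; the median benchmark, the 15% tolerance, and the asset figures are all assumptions chosen for illustration:

```python
from statistics import median


def flag_inefficient(assets: dict[str, tuple[float, float]],
                     tolerance: float = 0.15) -> list[str]:
    """Return assets whose kWh per unit exceeds the fleet median by the tolerance.

    `assets` maps an asset name to (energy_kwh, units_produced).
    """
    kwh_per_unit = {name: kwh / units for name, (kwh, units) in assets.items()}
    benchmark = median(kwh_per_unit.values())
    limit = benchmark * (1 + tolerance)
    return sorted(name for name, value in kwh_per_unit.items() if value > limit)


# Illustrative shift totals: (energy in kWh, units produced).
shift_data = {
    "press_1": (1200.0, 1000.0),
    "press_2": (1250.0, 1000.0),
    "press_3": (1700.0, 1000.0),
}
flagged = flag_inefficient(shift_data)
print(flagged)  # prints ['press_3']
```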

Plant-level digital twins that model production schedules, maintenance windows, inventory levels, and supplier lead times allow operations teams to simulate the impact of supply chain disruptions before they occur. When a key component supplier signals a delivery delay, the twin immediately runs simulations showing which production sequences are viable given current inventory, which maintenance windows can be safely deferred, and where buffer stock should be positioned.
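The viability check at the centre of such a simulation can be sketched as a comparison of each production sequence's part requirements against current inventory. The data structures and names below are hypothetical, and the sketch checks each sequence independently rather than allocating shared stock across several sequences at once:

```python
def viable_sequences(sequences: dict[str, dict[str, int]],
                     inventory: dict[str, int]) -> list[str]:
    """Return the sequences whose every required part is in stock in full."""
    return [
        name
        for name, parts_needed in sequences.items()
        if all(inventory.get(part, 0) >= qty for part, qty in parts_needed.items())
    ]


# Illustrative state after a supplier delays its seal delivery.
inventory = {"bearing": 40, "housing": 10, "seal": 0}
sequences = {
    "pump_A_batch": {"bearing": 20, "housing": 10},
    "pump_B_batch": {"bearing": 10, "seal": 10},
}
runnable = viable_sequences(sequences, inventory)
print(runnable)  # prints ['pump_A_batch']
```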

Your Equipment Is Already Generating the Data a Digital Twin Needs

Most manufacturing plants already have the sensor infrastructure digital twins require — the missing layer is a maintenance platform that turns that sensor data into structured, queryable equipment history that predictive models can learn from. Oxmaint builds that layer: every work order, every PM completion, every parts record becomes the training foundation that makes your digital twin predictions more accurate over time. Start building your data foundation today, or talk to our team about how Oxmaint connects to your existing sensor infrastructure.

The Digital Twin Data Architecture — From Sensor to Prediction

Understanding how digital twins actually work helps manufacturing teams make better deployment decisions. The architecture has four layers, each dependent on the quality of the layer below it.

What Changes When Digital Twins Enter the Maintenance Workflow

| Decision or Activity | Without Digital Twin | With Digital Twin |
|---|---|---|
| When to schedule maintenance | Fixed calendar intervals or after failure | When twin signals approaching failure threshold |
| Repair vs replace decision | Engineering judgement based on incomplete history | Remaining useful life calculation from actual usage data |
| Process optimisation testing | Physical trials with associated scrap and downtime risk | Simulated in the twin and validated before physical implementation |
| New equipment startup | Learn failure modes through operational experience | Failure modes identified in commissioning simulation |
| Supply chain disruption response | Ad hoc schedule adjustment under pressure | Simulated scenarios identify optimal response before committing |
| Energy optimisation | Annual energy audits with static recommendations | Continuous real-time energy modelling per asset per shift |


How to Start Your Digital Twin Journey — Without a $10M Budget

The biggest misconception about digital twins is that they require a complete IoT overhaul of the entire plant before generating value. The highest-ROI deployment strategy is the opposite: start with one critical asset, prove the economics, and scale.

Calculate the total annual cost of failures on each critical asset: emergency parts, overtime, lost production value, and quality rejects from restart. The asset with the highest failure cost is the correct starting point for your first digital twin — the economics of failure prevention are most compelling there, and the ROI case is clearest to management.
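The ranking described here is a sum of four cost components per asset followed by a sort. A minimal sketch, with made-up figures and a hypothetical `FailureCosts` record:

```python
from dataclasses import dataclass


@dataclass
class FailureCosts:
    asset: str
    emergency_parts: float
    overtime: float
    lost_production: float
    quality_rejects: float

    @property
    def total(self) -> float:
        """Total annual cost of failures for this asset."""
        return (self.emergency_parts + self.overtime
                + self.lost_production + self.quality_rejects)


def rank_by_failure_cost(records: list[FailureCosts]) -> list[FailureCosts]:
    """Highest annual failure cost first."""
    return sorted(records, key=lambda record: record.total, reverse=True)


# Illustrative annual figures in dollars.
records = [
    FailureCosts("conveyor_2", 8_000, 5_000, 40_000, 2_000),
    FailureCosts("cnc_mill_4", 25_000, 12_000, 150_000, 18_000),
    FailureCosts("compressor_1", 15_000, 6_000, 70_000, 0),
]
ranking = rank_by_failure_cost(records)
print(ranking[0].asset, ranking[0].total)  # prints cnc_mill_4 205000
```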

Before installing new sensors, inventory what data already exists: existing sensor outputs, manual inspection records, maintenance work order history, production logs, and parts consumption data. Digital twin models built with rich historical maintenance context outperform those relying on sensor data alone — which is why structured CMMS data is a foundational input, not an optional add-on.

Install sensors appropriate for the asset's primary failure modes: vibration for rotating equipment, thermal imaging for electrical systems, pressure for hydraulic circuits. Run the asset in normal operating conditions for 4–8 weeks to establish the baseline performance signature that the twin model will use to detect deviations. The baseline must cover multiple operating modes — startup, full load, partial load, shutdown — to avoid false positives.
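The reason for covering multiple operating modes can be shown with a small example: the same reading is normal in one mode and abnormal in another. The sketch below keeps a separate mean and standard deviation per mode; the mode names, readings, and 3-sigma limit are assumptions for illustration:

```python
from statistics import mean, stdev


def build_baselines(samples: dict[str, list[float]]) -> dict[str, tuple[float, float]]:
    """Map each operating mode to the (mean, standard deviation) of its readings."""
    return {mode: (mean(values), stdev(values)) for mode, values in samples.items()}


def is_deviation(baselines: dict[str, tuple[float, float]], mode: str,
                 reading: float, z_limit: float = 3.0) -> bool:
    """True if the reading is more than z_limit deviations from that mode's mean."""
    mu, sigma = baselines[mode]
    return abs(reading - mu) > z_limit * sigma


# Illustrative vibration readings in mm/s collected during the baseline period.
samples = {
    "startup": [6.0, 6.4, 5.8, 6.2, 6.1],
    "full_load": [3.0, 3.1, 2.9, 3.0, 3.2],
}
baselines = build_baselines(samples)

print(is_deviation(baselines, "startup", 6.3))    # prints False
print(is_deviation(baselines, "full_load", 6.3))  # prints True
```

A single baseline built from full-load data alone would flag every startup as a fault, which is the false-positive problem that a multi-mode baseline avoids.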

A digital twin that generates predictions no one acts on is a data science experiment, not a business tool. The prediction layer must connect to a maintenance execution system that automatically creates work orders, assigns technicians, checks parts availability, and tracks completion. The feedback loop from completed maintenance back to the model is what makes the twin progressively more accurate: closed-loop systems consistently outperform open-loop predictions in documented studies.
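The loop from prediction to work order and back to the model can be sketched in a few classes. Everything below is a hypothetical illustration rather than the Oxmaint API: technician assignment is reduced to picking the first name on a list, and the "feedback" is simply a stored labelled example that a model could later be retrained on.

```python
from dataclasses import dataclass, field


@dataclass
class Prediction:
    asset_id: str
    failure_mode: str
    required_part: str


@dataclass
class WorkOrder:
    asset_id: str
    failure_mode: str
    technician: str
    part_reserved: bool
    status: str = "open"


@dataclass
class MaintenanceSystem:
    parts_stock: dict[str, int]
    technicians: list[str]
    training_examples: list[tuple[str, str, bool]] = field(default_factory=list)

    def create_work_order(self, prediction: Prediction) -> WorkOrder:
        """Turn a prediction into a work order, reserving the part if in stock."""
        in_stock = self.parts_stock.get(prediction.required_part, 0) > 0
        if in_stock:
            self.parts_stock[prediction.required_part] -= 1
        technician = self.technicians[0]  # placeholder assignment rule
        return WorkOrder(prediction.asset_id, prediction.failure_mode,
                         technician, part_reserved=in_stock)

    def complete(self, order: WorkOrder, fault_confirmed: bool) -> None:
        """Close the order and record whether the predicted fault was real."""
        order.status = "completed"
        self.training_examples.append(
            (order.asset_id, order.failure_mode, fault_confirmed)
        )


system = MaintenanceSystem(parts_stock={"bearing_6205": 2}, technicians=["A. Rivera"])
order = system.create_work_order(Prediction("motor-3", "bearing wear", "bearing_6205"))
system.complete(order, fault_confirmed=True)
print(order.status, system.parts_stock, system.training_examples)
```

The `fault_confirmed` flag is the part that closes the loop: each completed order tells the model whether its prediction was right.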

Compare unplanned downtime, maintenance cost, and MTBF for the twinned asset against the 12-month pre-deployment baseline. Validated ROI from a single asset is the business case for the next five. Scale sequentially — adding assets as the organisation's digital twin capability matures — rather than attempting a simultaneous plant-wide deployment that overwhelms both the technology team and the change management capacity of the maintenance workforce.
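The before-and-after comparison reduces to a few ratios. The sketch below computes MTBF as operating hours divided by failure count and reports percentage changes against the baseline; all figures are invented for illustration and the field names are hypothetical:

```python
def mtbf(operating_hours: float, failure_count: int) -> float:
    """Mean time between failures in hours; infinite if no failures occurred."""
    if failure_count == 0:
        return float("inf")
    return operating_hours / failure_count


def percent_change(before: float, after: float) -> float:
    """Change from before to after, as a percentage of the before value."""
    return (after - before) / before * 100


# Illustrative 12-month figures for one twinned asset.
baseline = {"operating_hours": 6000, "failures": 12,
            "downtime_hours": 96, "maintenance_cost": 180_000}
post = {"operating_hours": 6000, "failures": 8,
        "downtime_hours": 60, "maintenance_cost": 150_000}

mtbf_before = mtbf(baseline["operating_hours"], baseline["failures"])
mtbf_after = mtbf(post["operating_hours"], post["failures"])

print(f"MTBF: {mtbf_before:.0f} h -> {mtbf_after:.0f} h "
      f"({percent_change(mtbf_before, mtbf_after):+.1f}%)")
print(f"Unplanned downtime: "
      f"{percent_change(baseline['downtime_hours'], post['downtime_hours']):+.1f}%")
print(f"Maintenance cost: "
      f"{percent_change(baseline['maintenance_cost'], post['maintenance_cost']):+.1f}%")
```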

Digital Twins in Manufacturing — Questions Decision-Makers Ask

Pilot deployments targeting a single critical asset typically range from $30,000 to $150,000 depending on existing sensor infrastructure, integration complexity, and model sophistication. The most cost-effective starting point is an asset that already has some sensor coverage and 12+ months of structured maintenance history in a digital system. Plants using Oxmaint as their CMMS already have the structured maintenance history layer that reduces digital twin implementation costs by eliminating the historical data reconstruction step. Cloud-based and subscription-model platforms have also significantly reduced the upfront capital required compared with the on-premises deployments of five years ago.

Effective failure prediction requires three data streams working together: real-time sensor telemetry from the physical asset, historical maintenance records showing what was repaired and when, and operational context data including production rates, material processed, and environmental conditions. The sensor data identifies deviation from normal; the maintenance history identifies what those deviations previously meant; the operational context explains why the deviation is occurring. Plants without structured digital maintenance history can still deploy digital twins, but prediction accuracy improves significantly — often by 20–30% in documented studies — when historical work order data is available as training context. Talk to our team about how Oxmaint structures this data foundation.

Traditional predictive maintenance software applies threshold-based or statistical alerts to sensor data: "vibration above X triggers an alert." A digital twin goes further by building a physics-based model of how the asset actually behaves and degrades, enabling it to simulate failure trajectories rather than just detecting threshold breaches. The practical difference is that a digital twin can predict failure 48–72 hours ahead with a specific failure mode identified, while threshold-based systems typically alert only when the symptom is already advanced. Digital twins also enable the "what-if" simulations — testing parameter changes, maintenance timing scenarios, and supply disruptions — that sensor monitoring alone cannot provide. See how Oxmaint structures the maintenance execution layer that both approaches require.

Quality inspection and predictive maintenance pilots targeting high-failure-cost assets typically demonstrate positive ROI within 6–12 months of go-live, with the first prevented failure event often covering the full implementation cost of the pilot. A global automotive plant documented a 30% maintenance cost reduction and 40% uptime improvement within the first year of integrating digital twin analytics with their predictive maintenance programme. The payback timeline is fastest when the baseline failure cost is highest: plants with frequent unplanned failures on high-value assets recover their investment faster than those applying digital twins to assets with relatively stable operating histories. Book a demo and we can model expected ROI for your specific asset profile.

Yes — the majority of successful digital twin deployments in established manufacturing facilities are built on legacy equipment retrofitted with external sensors rather than replaced with connected-native assets. Wireless vibration, temperature, and current-draw sensors can be installed non-invasively on motors, pumps, and compressors without production interruption or equipment modification. The key constraint is data quality: a legacy asset with rich maintenance history in a digital system like Oxmaint will support a more accurate digital twin model than the same physical asset with no accessible maintenance history, regardless of how sophisticated the sensor package is.

The Maintenance Data You Capture Today Is the Intelligence Your Digital Twin Learns From Tomorrow

Every work order completed in Oxmaint — asset, fault type, parts used, time taken, corrective action — becomes a training data point that makes your future digital twin predictions more accurate. Plants that build a structured, searchable digital maintenance history today are the ones whose digital twin implementations will outperform competitors in two years. Start your data foundation with Oxmaint now, or talk to our team about connecting your sensor infrastructure to a maintenance execution platform that closes the prediction-to-action loop.