A single unplanned outage at a nuclear generating station costs between $700,000 and $2 million per day in replacement power alone. The reactor coolant pump seal that failed without warning, the feedwater valve that degraded faster than its last inspection suggested, and the heat exchanger that fouled beyond its operating curve all have something in common: measurable data signatures that appeared weeks before the failure and that no human reviewer caught in time. OxMaint's AI-driven predictive maintenance platform exists to close that gap by turning continuous sensor data into component health scores, failure probability windows, and scheduled interventions before a failure becomes a reportable event. This page is for maintenance engineers, reliability managers, and operations directors at nuclear facilities who want to understand what machine learning can do for reactor equipment reliability in practice.

Nuclear Predictive Maintenance: AI and Machine Learning for Reactor Equipment

Detect pump degradation, valve wear, and heat exchanger fouling weeks before failure — with ML models built for the reliability standards nuclear operations demand.

The Real Cost of Missing Early Failure Signals in Nuclear Operations

Time-based preventive maintenance protects against statistical averages. It does not protect against the specific unit in front of you degrading faster than the schedule predicts. NRC reliability data consistently shows that approximately 42% of nuclear component failures occur within 90 days of a completed scheduled maintenance event — meaning the interval was right on paper, but wrong for that asset in those operating conditions. The financial and regulatory consequences of each failure category are not equal, and they are rarely visible until after the event.

How Machine Learning Detects What Human Inspection Misses

Machine learning models for nuclear equipment reliability do not replace engineering judgment — they extend it. A vibration analyst reviewing monthly trend data sees what changed since last month. An ML model monitoring the same sensor stream in real time sees the micro-trend that began developing 34 days ago, compares it against 2,400 historical operational cycles across identical equipment, and calculates the probability and predicted time window of a threshold exceedance. That is a fundamentally different capability — and it is available continuously, without adding a single analyst to your team.
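The idea of projecting a slow trend forward to a threshold exceedance can be sketched in a few lines. This is a minimal illustration only, not OxMaint's model: it fits a straight line to hypothetical daily vibration readings and estimates the days remaining until an assumed alarm limit is reached. The function names, the sample data, and the 4.5 mm/s limit are all assumptions, and a production model would also report a probability and a confidence interval rather than a single point estimate.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_to_threshold(days, readings, threshold):
    """Estimate days from the last sample until the fitted trend reaches
    `threshold`. Returns None when the trend is flat or falling."""
    slope, intercept = fit_line(days, readings)
    if slope <= 0:
        return None
    crossing_day = (threshold - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Hypothetical bearing vibration (mm/s RMS) creeping up 0.05 per day.
days = list(range(10))
vibration = [2.0 + 0.05 * d for d in days]

remaining = days_to_threshold(days, vibration, threshold=4.5)
print(f"Estimated days until the assumed limit: {remaining:.1f}")
```

A straight-line fit is the simplest possible choice here; real degradation is often nonlinear, which is one reason a trained model is compared against historical cycles from identical equipment.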

Alarms trigger when a reading crosses a fixed limit — typically set conservatively, meaning detection happens late in the degradation curve.

Human reviewers examine one parameter at a time. Early bearing failure signatures often require correlation of vibration, temperature, and current draw simultaneously.

Weekly or monthly data reviews leave detection gaps. Failure signatures that develop and cross critical thresholds between reviews go undetected until the next scheduled look.

Models correlate 6–24 sensor streams simultaneously — identifying degradation signatures that are invisible in any single parameter but clear when analyzed as a system.

Every asset receives a live health score updated continuously from streaming sensor data — not a snapshot from last Tuesday's rounds or last month's trend report.

Models output a predicted failure date range with confidence intervals — giving maintenance planners 2–6 weeks of lead time to schedule interventions during planned windows.
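To make the multi-sensor point above concrete, here is a small sketch of why a combined view can flag a reading that looks normal in every individual parameter. It is an assumption-laden illustration, not the platform's algorithm: it uses only two streams (vibration and bearing temperature) with made-up healthy-period data, and it swaps in a Mahalanobis distance as a stand-in for whatever correlation method a production model uses.

```python
from statistics import mean


def fit_baseline(vib, temp):
    """Means, variances, and covariance of two healthy-period series."""
    mv, mt = mean(vib), mean(temp)
    n = len(vib)
    var_v = sum((v - mv) ** 2 for v in vib) / (n - 1)
    var_t = sum((t - mt) ** 2 for t in temp) / (n - 1)
    cov = sum((v - mv) * (t - mt) for v, t in zip(vib, temp)) / (n - 1)
    return mv, mt, var_v, var_t, cov


def z_scores(v, t, baseline):
    """Per-parameter deviation in standard deviations (single-sensor view)."""
    mv, mt, var_v, var_t, _ = baseline
    return (v - mv) / var_v ** 0.5, (t - mt) / var_t ** 0.5


def mahalanobis(v, t, baseline):
    """Joint deviation that accounts for how the two sensors normally move together."""
    mv, mt, var_v, var_t, cov = baseline
    dv, dt = v - mv, t - mt
    det = var_v * var_t - cov ** 2  # determinant of the 2x2 covariance matrix
    d2 = (var_t * dv * dv - 2 * cov * dv * dt + var_v * dt * dt) / det
    return d2 ** 0.5


# Hypothetical healthy data: temperature (deg C) rises with vibration (mm/s).
VIB = [2.0, 2.2, 2.4, 2.6, 2.8, 3.0]
TEMP = [60.5, 61.5, 63.5, 64.5, 66.5, 67.5]
baseline = fit_baseline(VIB, TEMP)

# A hot bearing at a vibration level that normally goes with a cool one.
zv, zt = z_scores(2.1, 67.0, baseline)
print(f"single-sensor z-scores: {zv:.2f}, {zt:.2f}")
print(f"joint distance: {mahalanobis(2.1, 67.0, baseline):.1f}")
```

In this example each reading sits roughly one standard deviation from its own mean, so a per-parameter alarm would stay quiet, while the joint distance is large because the pair breaks the relationship seen in the healthy data.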

Component Health Scoring: What a 0–100 Score Means for Your Equipment

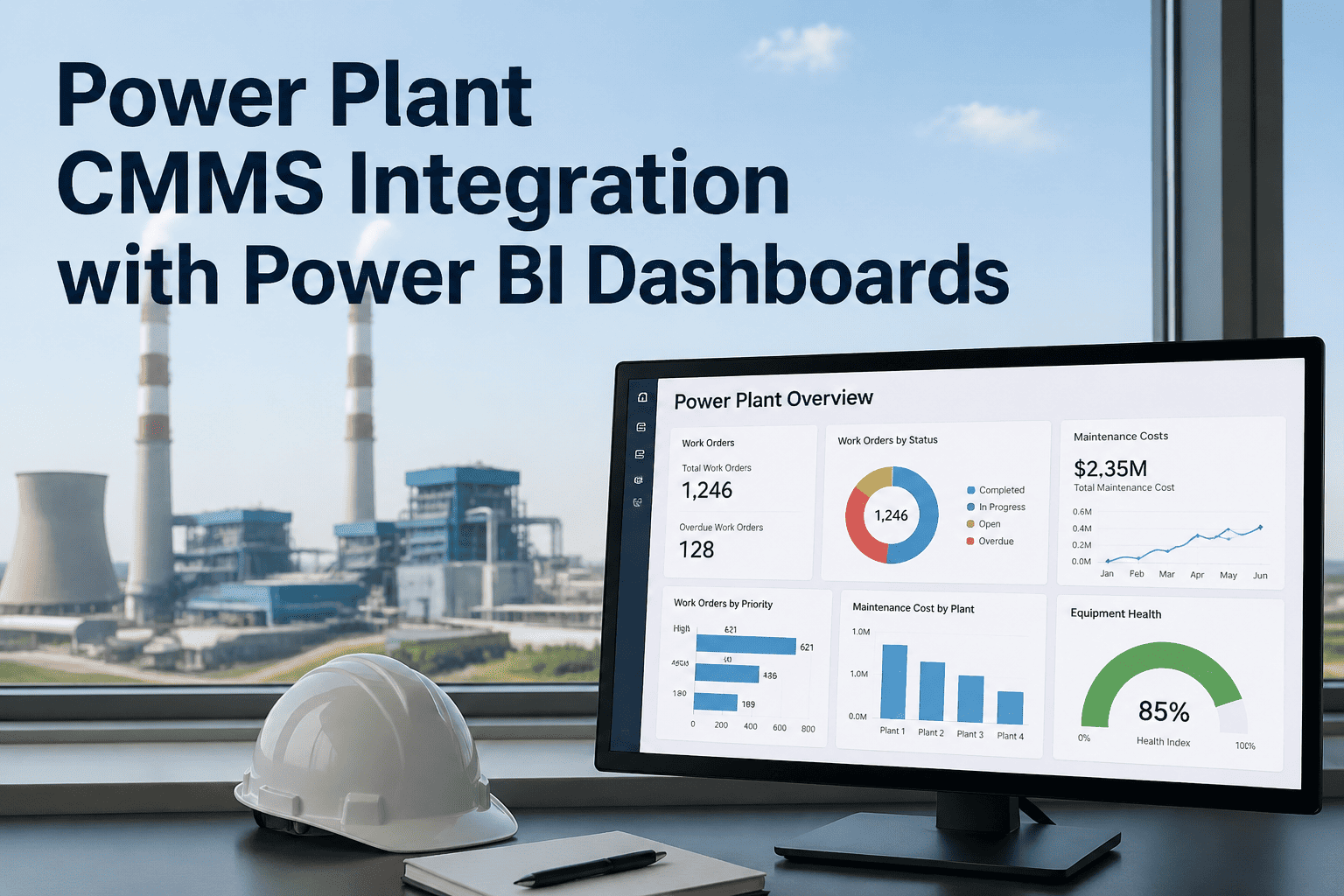

OxMaint assigns every monitored asset a live Component Health Score: a single number that synthesizes all available sensor streams, historical failure patterns, and operational context into an immediately actionable equipment status. Health scores are not rules of thumb. They are statistically calibrated outputs from ML models trained on your plant's own historical data and validated against known failure events before going live.

All sensor parameters within normal operating envelope. No anomalies detected. Next planned maintenance interval confirmed appropriate by current condition data.

Early degradation signature detected in one or more parameters. Not yet at intervention threshold but trending toward advisory. Engineering review recommended within 14 days.

Degradation confirmed across multiple parameters. Predicted failure window calculated. Work order should be generated and maintenance scheduled within the predicted intervention window.

Failure probability high within near-term operational window. Immediate supervisor notification triggered. Expedited maintenance response required before next planned outage opportunity.
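The four status descriptions above map naturally onto a simple lookup from score to response. The sketch below shows one way that mapping could look. The band names and the numeric boundaries (85, 70, 50) are hypothetical placeholders chosen for illustration, since the actual cut-offs are not stated here; only the recommended actions are taken from the descriptions above.

```python
# (lower bound, band name, action). Boundaries and names are ASSUMED,
# not OxMaint's published values.
BANDS = [
    (85, "healthy", "keep the planned maintenance interval"),
    (70, "watch", "engineering review within 14 days"),
    (50, "advisory", "generate a work order inside the predicted window"),
    (0, "critical", "notify supervisor and expedite maintenance"),
]


def classify(score):
    """Return (band, action) for a 0-100 component health score."""
    if not 0 <= score <= 100:
        raise ValueError("health score must be between 0 and 100")
    for lower, band, action in BANDS:
        if score >= lower:
            return band, action


for s in (92, 74, 55, 12):
    band, action = classify(s)
    print(f"{s:3d} -> {band}: {action}")
```

Keeping the boundaries in a single table makes them easy to review and adjust per asset class without touching the classification logic.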

Know the Health Score of Every Reactor Asset Before the Shift Starts

OxMaint gives your reliability team a live, continuously updated view of every monitored component — no more waiting for the weekly trend report or the monthly analyst visit to know what is happening inside your rotating equipment.

Where OxMaint's ML Models Have the Greatest Impact: Nuclear Equipment

Predictive analytics delivers the highest reliability dividend on equipment where degradation is continuous, sensor data is rich, and failure consequences extend well beyond the asset itself. The following four equipment categories represent the highest-priority deployment targets for nuclear facilities adopting AI predictive maintenance — based on EPRI research, INPO equipment reliability data, and operating experience from AI-enabled plants in North America, France, South Korea, and the UAE.

Reactor coolant pump (RCP) vibration signatures, bearing temperature trends, seal leak-off flow rates, and motor current draw feed ML models that can distinguish the subtle multi-parameter pattern of early bearing degradation from normal operational variation, at a sensitivity level no calendar-based inspection program can match.

Main steam isolation valve (MSIV) stroke time trending between surveillance tests, acoustic emission monitoring for seat wear, and actuator current profiling give reliability engineers a continuous condition picture. The 18–24 month pass/fail snapshot is replaced by a real-time degradation curve that prompts planned intervention before a test failure occurs.

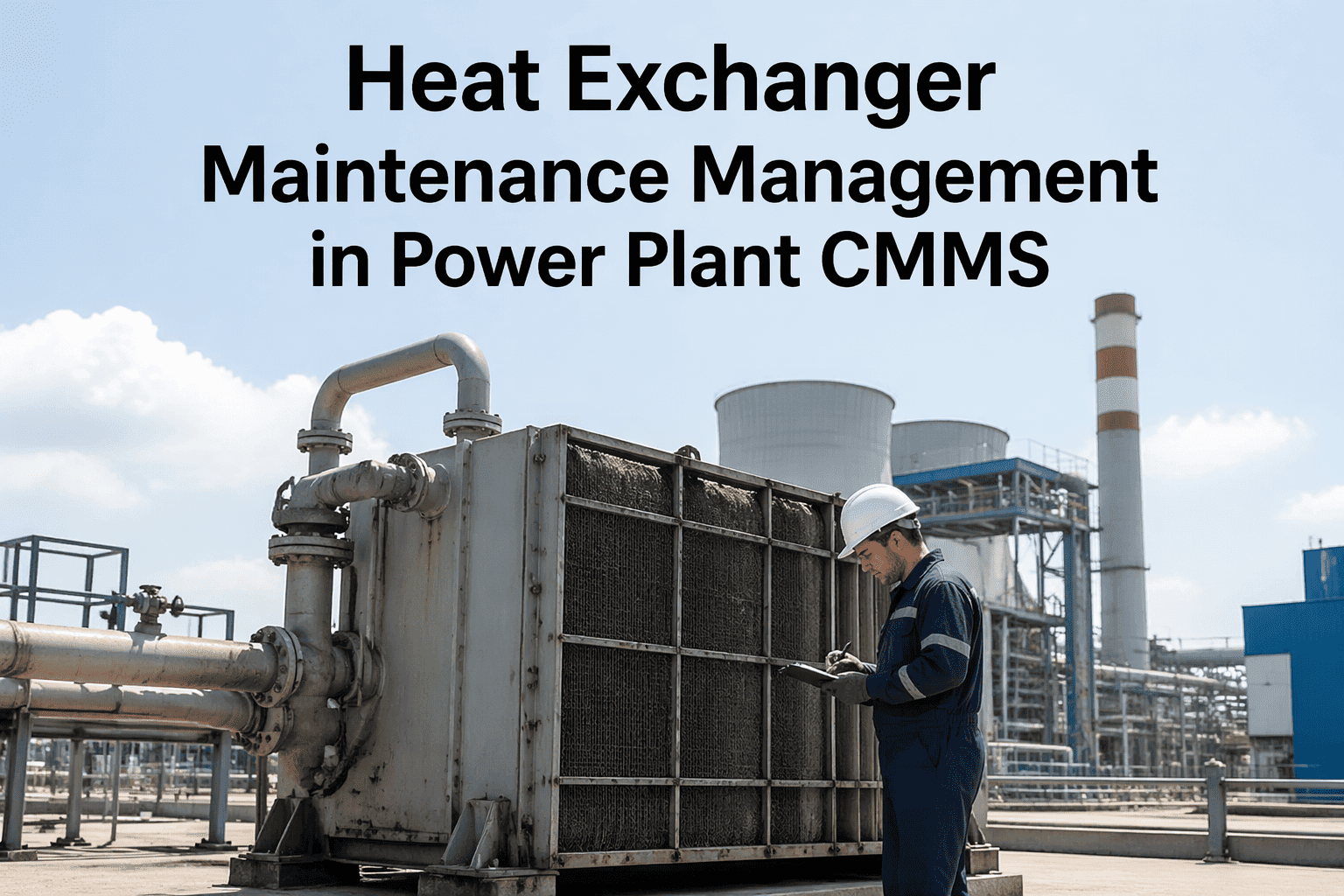

Fouling progression follows predictable thermal efficiency curves that ML models extrapolate into precise cleaning and replacement interval recommendations — eliminating both premature intervention (unnecessary dose and cost) and over-fouled operation (efficiency loss and tube risk). The cleaning decision becomes data-driven, not calendar-driven.

Emergency diesel generators (EDGs) are tested infrequently but must perform on demand with zero tolerance for failure. ML health scoring between test events, using lube oil analysis trends, exhaust temperature profiling, and vibration data from the last test run, gives reliability engineers a continuous readiness confidence score rather than a quarterly binary result.
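As an illustration of the heat exchanger case above, the sketch below turns measured overall heat transfer coefficients into a fouling resistance, using the standard relation R_f = 1/U_actual - 1/U_clean, and projects when an assumed cleaning limit will be reached. The clean coefficient, the limit, the sample data, and the linear-growth assumption are all hypothetical; real fouling often levels off rather than growing linearly, so treat this as a simplified sketch rather than a sizing tool.

```python
def fouling_resistance(u_clean, u_actual):
    """Fouling resistance (m^2*K/W) from clean and measured U (W/m^2*K)."""
    return 1.0 / u_actual - 1.0 / u_clean


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_cleaning(days, u_values, u_clean, rf_limit):
    """Days from the last measurement until fouling resistance reaches
    `rf_limit`, assuming linear growth. None if fouling is not increasing."""
    rf = [fouling_resistance(u_clean, u) for u in u_values]
    slope, intercept = fit_line(days, rf)
    if slope <= 0:
        return None
    return max(0.0, (rf_limit - intercept) / slope - days[-1])


# Hypothetical data: fouling resistance grows by 1e-5 m^2*K/W per day.
U_CLEAN = 2000.0
days = [0, 10, 20, 30]
u_measured = [1.0 / (1.0 / U_CLEAN + 1e-5 * d) for d in days]

print(days_until_cleaning(days, u_measured, U_CLEAN, rf_limit=5e-4))
```

Working in fouling resistance rather than raw efficiency keeps the trend close to linear for this kind of data, which makes the extrapolation easier to sanity-check by hand.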

Industry Performance Data: What AI Predictive Maintenance Achieves in Nuclear Operations

The benchmarks below are sourced from documented operating experience at AI-enabled nuclear generating stations in North America, France, South Korea, and the UAE — published through EPRI research programs, NRC event analysis reports, and operator technical papers from 2021 through 2024. Every figure represents achieved outcomes at facilities operating under regulatory oversight equivalent to your plant's requirements.

Built for NRC Scrutiny. Trusted by Reliability Engineers.

Every ML prediction, health score, and resulting work order is logged, timestamped, and audit-ready. OxMaint's nuclear compliance documentation gives regulators the evidence chain they expect — and gives your team the operational confidence they need.


Stop Managing Equipment on Schedule. Start Managing It on Condition.

OxMaint's ML Prediction Models and Component Health Scoring give nuclear reliability teams the continuous equipment intelligence that time-based PM programs were never designed to provide — with the complete, timestamped audit trail that NRC, INPO, and your own quality organization will stand behind.