When the plant manager at a Midwest automotive stamping facility reviewed the Q1 2025 downtime report, one number dominated the page: $2.4 million in unplanned failures across 14 hydraulic presses, 22 CNC machining centres, and 8 overhead cranes. The maintenance team had attempted an IoT predictive maintenance pilot six months earlier: they purchased 200 vibration sensors from an online vendor, mounted them on critical assets, and connected them to a cloud dashboard.

Within 90 days, four things had gone wrong. 40% of the sensors had dropped offline because of wireless interference from the plant's steel structure. Battery life proved to be 4 months rather than the advertised 3 years. The edge gateways could not handle the data throughput during peak production. And the remaining sensor data sat in a standalone dashboard that nobody checked, because it was not connected to a single work order. The $180,000 investment produced zero predictive work orders, zero avoided failures, and no return.

The sensors didn't fail; the deployment architecture did. Without sensor selection matched to asset failure modes, wireless protocol planning for the actual RF environment, edge computing sized for real data volumes, and a CMMS pipeline that converts sensor anomalies into prioritised work orders, IoT predictive maintenance is just expensive data collection with no operational outcome. Facilities ready to deploy IoT sensors that actually prevent failures can start their free trial today.

The technology to predict equipment failure before it impacts production exists and is proven. But the gap between "sensors installed" and "failures prevented" is an engineering challenge that requires deliberate architecture across four layers: the right sensor types matched to specific failure modes, wireless protocols selected for the actual plant environment, edge computing sized for real-time data processing, and a CMMS data pipeline that converts every anomaly into a prioritised, dispatched, and verified repair action. This guide provides the complete hardware and network setup framework for deploying IoT sensors that deliver measurable predictive maintenance outcomes — not just dashboards. Schedule a consultation to design your IoT-to-CMMS predictive maintenance architecture with Oxmaint.

Why Most IoT Sensor Deployments Fail to Prevent Failures

The failure pattern is remarkably consistent: facilities purchase sensors based on vendor marketing, mount them without matching to specific failure modes, connect them to a standalone dashboard, and wait for "predictive insights" that never materialise into maintenance actions. The root cause isn't bad sensors — it's missing architecture. Without a complete pipeline from sensor selection through edge processing to CMMS work order generation, IoT data becomes noise that maintenance teams learn to ignore. The cascade below shows how a well-intentioned IoT deployment collapses when architecture is missing.

A properly architected IoT deployment prevents this cascade at every layer. Sensor types are matched to documented failure modes through FMEA analysis, wireless protocols are selected based on RF site surveys, edge computing is sized for peak data throughput with 40% headroom, and every sensor anomaly flows through a CMMS pipeline that generates prioritised work orders dispatched to mobile crews. Facilities that follow this architecture don't just collect data — they prevent failures.

The Four Layers of IoT Predictive Maintenance Architecture

Successful IoT predictive maintenance operates across four integrated layers, each addressing a specific point at which standalone sensor deployments break down. Skip any layer and the pipeline breaks. Implement all four and sensor data stops sitting in unused dashboards and starts producing prevented failures and documented savings.

Layer 1: IoT Sensor Types — Matching Hardware to Failure Modes

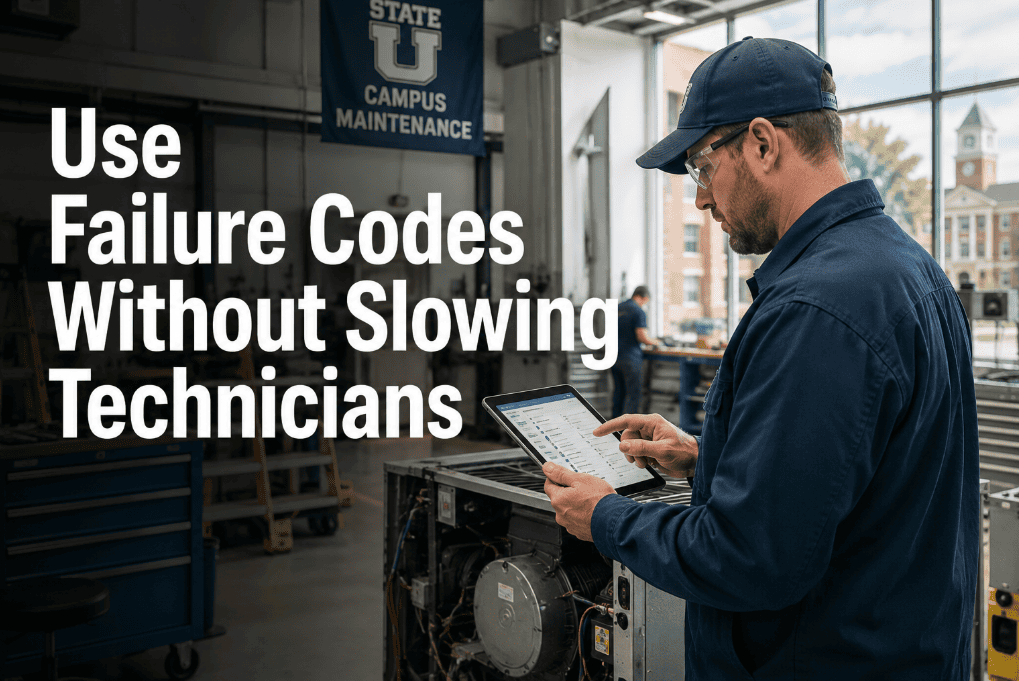

The single most common IoT deployment mistake is selecting sensors based on vendor availability rather than failure mode analysis. A vibration sensor on a motor that fails from winding insulation breakdown provides zero predictive value — but a current signature sensor on that same motor predicts winding failure 60-90 days in advance. Sensor selection must start with FMEA: what fails, how does it fail, and what physical parameter changes first? The answer determines the sensor type. Schedule a consultation to map your critical asset failure modes to the right sensor types.

| Sensor Type | Detects | Best For | Lead Time |
|---|---|---|---|
| Accelerometer (Vibration) | Imbalance, misalignment, bearing wear, looseness | Rotating equipment: motors, pumps, fans, compressors | 30-90 days before failure |
| Temperature (RTD/Thermocouple) | Overheating, friction, insulation breakdown, cooling loss | Bearings, electrical panels, transformers, heat exchangers | 7-30 days before failure |
| Current / Power (CT Clamp) | Winding degradation, phase imbalance, overload, locked rotor | Electric motors, drives, compressors, conveyor systems | 60-120 days before failure |
| Ultrasonic (Acoustic Emission) | Compressed air leaks, steam leaks, bearing defects, cavitation | Pneumatic systems, steam traps, valve seats, gearboxes | Immediate detection |
| Pressure Transmitter | Filter blockage, pump degradation, system leaks, seal wear | Hydraulic systems, HVAC, lubrication systems, pipelines | 14-60 days before failure |
| Oil Condition (Particle Counter) | Contamination, wear metals, moisture ingress, viscosity change | Gearboxes, hydraulic units, turbines, large bearings | 30-90 days before failure |
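The failure-mode-first selection described above can be sketched as a simple lookup. This is a minimal illustration, assuming the table rows are transcribed as-is; the `SENSOR_TABLE` structure and the `sensors_for` helper are hypothetical names, and a real FMEA would use the plant's own failure-mode codes rather than free-text labels.

```python
# Rows transcribed from the sensor table: (sensor type, detected failure modes, lead time).
SENSOR_TABLE = [
    ("Accelerometer (Vibration)",
     ["imbalance", "misalignment", "bearing wear", "looseness"],
     "30-90 days before failure"),
    ("Temperature (RTD/Thermocouple)",
     ["overheating", "friction", "insulation breakdown", "cooling loss"],
     "7-30 days before failure"),
    ("Current / Power (CT Clamp)",
     ["winding degradation", "phase imbalance", "overload", "locked rotor"],
     "60-120 days before failure"),
    ("Ultrasonic (Acoustic Emission)",
     ["compressed air leaks", "steam leaks", "bearing defects", "cavitation"],
     "Immediate detection"),
    ("Pressure Transmitter",
     ["filter blockage", "pump degradation", "system leaks", "seal wear"],
     "14-60 days before failure"),
    ("Oil Condition (Particle Counter)",
     ["contamination", "wear metals", "moisture ingress", "viscosity change"],
     "30-90 days before failure"),
]


def sensors_for(failure_mode: str) -> list[tuple[str, str]]:
    """Return (sensor type, lead time) pairs whose 'Detects' column lists the failure mode."""
    mode = failure_mode.strip().lower()
    return [(sensor, lead) for sensor, modes, lead in SENSOR_TABLE if mode in modes]


# Start from the failure mode, not from the sensor catalogue.
print(sensors_for("Winding degradation"))
```

An empty result is itself useful: it signals that none of the listed sensor types covers that failure mode, so fitting one would add cost without predictive value.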

Layer 2: Wireless Protocols — Selecting the Right Network for Your Plant

Wireless protocol selection is the second most common failure point. Wi-Fi is familiar, but coverage often degrades in metal-dense industrial environments, where steel structures reflect and block the signal. Bluetooth is limited in range. LoRaWAN offers kilometres of range but only low data rates. The right protocol depends on four factors: plant RF environment, data payload size, sensor density, and battery life requirements. Most facilities need multiple protocols in a hybrid architecture: LoRaWAN for distributed low-data sensors and Wi-Fi 6 or 5G for high-bandwidth vibration waveform capture.
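As a rough sketch of the hybrid split described above, the rule below routes each sensor to a protocol by daily payload and RF survey status. Everything here is an assumption for illustration: the `SensorNode` fields, the `suggest_protocol` and `plan_network` helpers, and especially the 50,000-byte cut-off, which is a placeholder and not a LoRaWAN limit. Sensor density and battery life, the other two factors, are not modelled.

```python
from dataclasses import dataclass

# Placeholder threshold: above this daily payload a sensor is treated as
# high-bandwidth. The real figure should come from the network design.
LOW_DATA_LIMIT_BYTES_PER_DAY = 50_000


@dataclass(frozen=True)
class SensorNode:
    name: str
    daily_payload_bytes: int
    rf_survey_ok: bool  # did the RF site survey confirm coverage at this mount point?


def suggest_protocol(node: SensorNode) -> str:
    """Apply the hybrid rule: low-data sensors on LoRaWAN, waveform capture on Wi-Fi 6 / 5G."""
    if not node.rf_survey_ok:
        return "needs RF survey"
    if node.daily_payload_bytes > LOW_DATA_LIMIT_BYTES_PER_DAY:
        return "Wi-Fi 6 / 5G"
    return "LoRaWAN"


def plan_network(nodes: list[SensorNode]) -> dict[str, list[str]]:
    """Group sensor names by suggested protocol."""
    plan: dict[str, list[str]] = {}
    for node in nodes:
        plan.setdefault(suggest_protocol(node), []).append(node.name)
    return plan


nodes = [
    SensorNode("press-temp", 2_000, True),
    SensorNode("spindle-vib", 5_000_000_000, True),
    SensorNode("crane-temp", 2_000, False),
]
print(plan_network(nodes))
```

Treating an unsurveyed mount point as unresolved, rather than defaulting it to a protocol, keeps the RF site survey as a gate before hardware is ordered.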

Layer 3: Edge Computing — Processing Data Before It Leaves the Plant

Edge computing is the layer most frequently undersized in IoT deployments. Raw sensor data volumes from vibration accelerometers alone can exceed 1 GB per sensor per day at high sampling rates. Sending all of this to the cloud is expensive, slow, and unnecessary. Edge gateways perform local AI inference, filter noise, detect anomalies, and send only actionable insights upstream — reducing cloud bandwidth by 90%+ while enabling sub-second response to critical equipment events.

Layer 4: CMMS Data Pipeline — Converting Sensor Data Into Prevented Failures

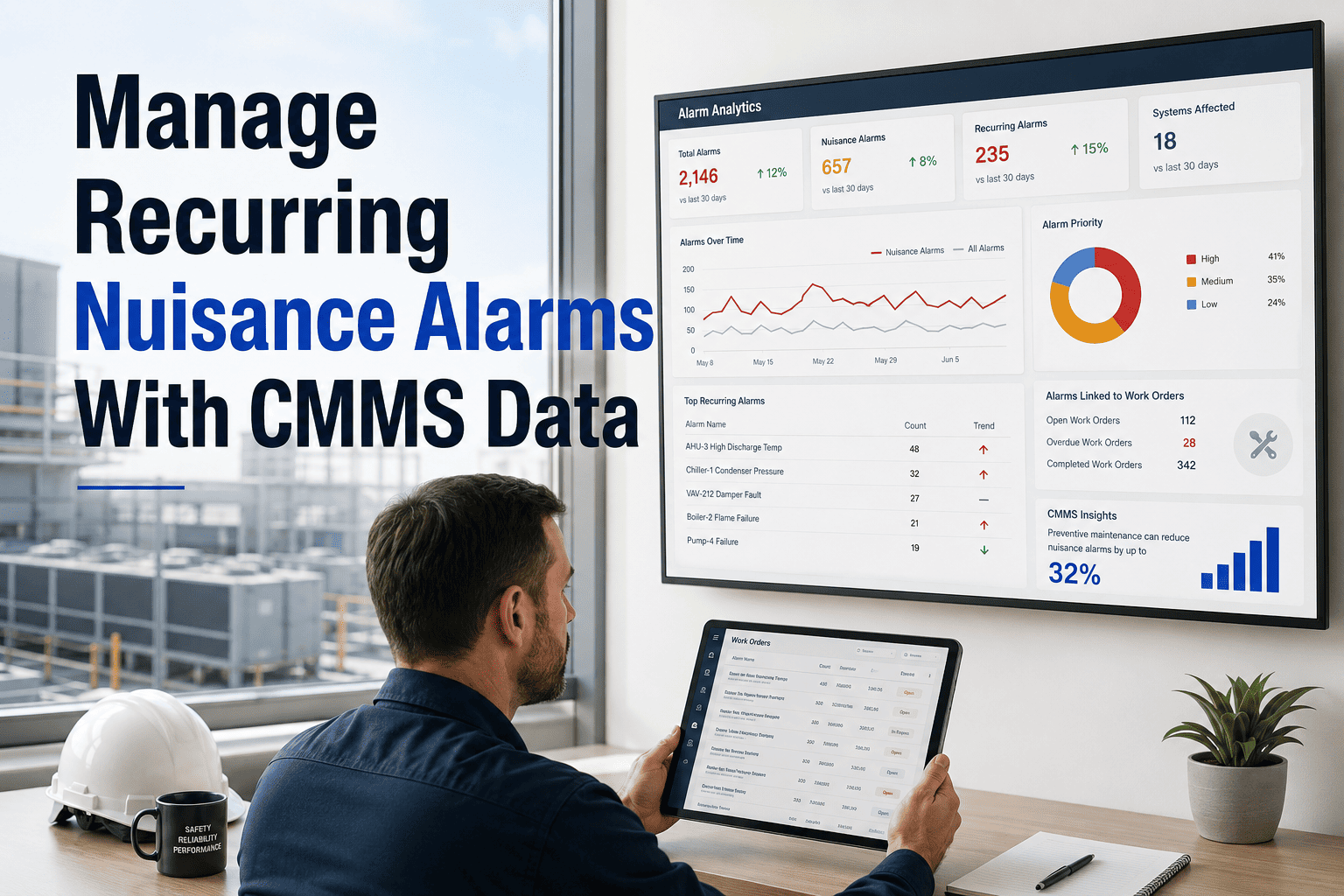

The CMMS pipeline is where IoT deployments either deliver ROI or become expensive dashboards. Without a direct, automated connection between sensor anomalies and maintenance work orders, predictive data sits in a dashboard that nobody checks. Oxmaint's data pipeline ingests edge-processed anomalies, scores them by severity and asset criticality, auto-generates prioritised work orders with sensor evidence, dispatches mobile crews, and verifies repairs through post-maintenance sensor confirmation.
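The scoring and work-order steps can be illustrated with a short sketch. This is not Oxmaint's API: the `Anomaly` and `WorkOrder` types, the 1-5 severity and criticality scales, the multiplication rule, and the P1/P2/P3 cut-offs are all hypothetical choices made for the example. Dispatch and post-repair verification are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Anomaly:
    asset_id: str
    sensor_type: str
    severity: int   # assumed scale: 1 (minor) to 5 (critical)
    evidence: str   # short description of what the sensor saw


@dataclass(frozen=True)
class WorkOrder:
    asset_id: str
    priority: str
    score: int
    evidence: str


def priority_for(score: int) -> str:
    """Map a combined score to a priority band (cut-offs are illustrative)."""
    if score >= 15:
        return "P1"
    if score >= 8:
        return "P2"
    return "P3"


def build_work_orders(anomalies: list[Anomaly], criticality: dict[str, int]) -> list[WorkOrder]:
    """Turn every anomaly into a work order, highest score first."""
    orders = []
    for a in anomalies:
        # Unknown assets default to the lowest criticality of 1.
        score = a.severity * criticality.get(a.asset_id, 1)
        orders.append(
            WorkOrder(a.asset_id, priority_for(score), score, f"{a.sensor_type}: {a.evidence}")
        )
    return sorted(orders, key=lambda wo: wo.score, reverse=True)


criticality = {"PRESS-03": 5, "FAN-11": 2}  # hypothetical asset register
anomalies = [
    Anomaly("FAN-11", "vibration", 3, "RMS trending upward"),
    Anomaly("PRESS-03", "pressure", 4, "hydraulic pressure drop"),
]
for wo in build_work_orders(anomalies, criticality):
    print(wo.priority, wo.asset_id, wo.score, wo.evidence)
```

Every anomaly becomes a work order, so nothing depends on someone opening a dashboard; the score only decides the order in which crews see them.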

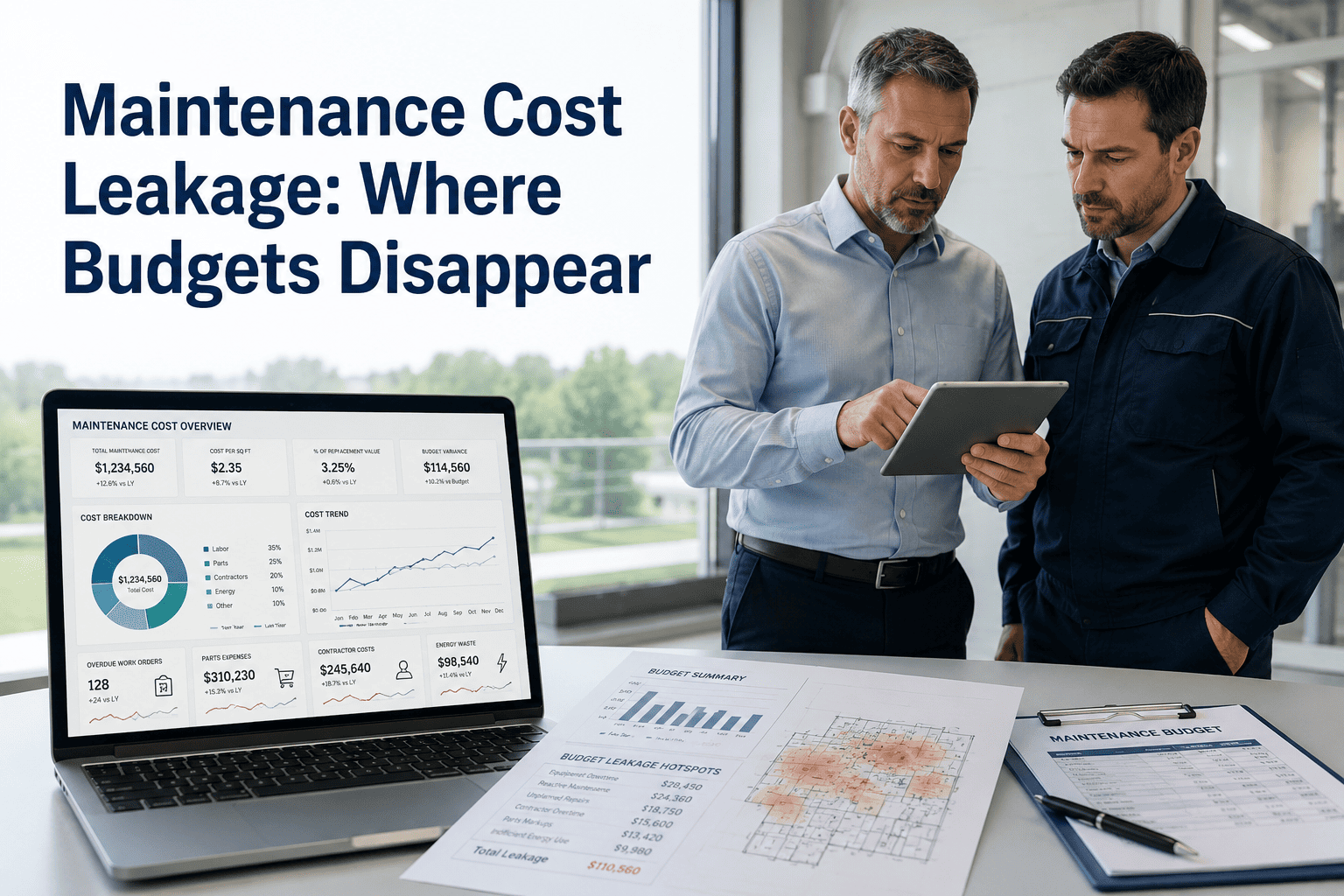

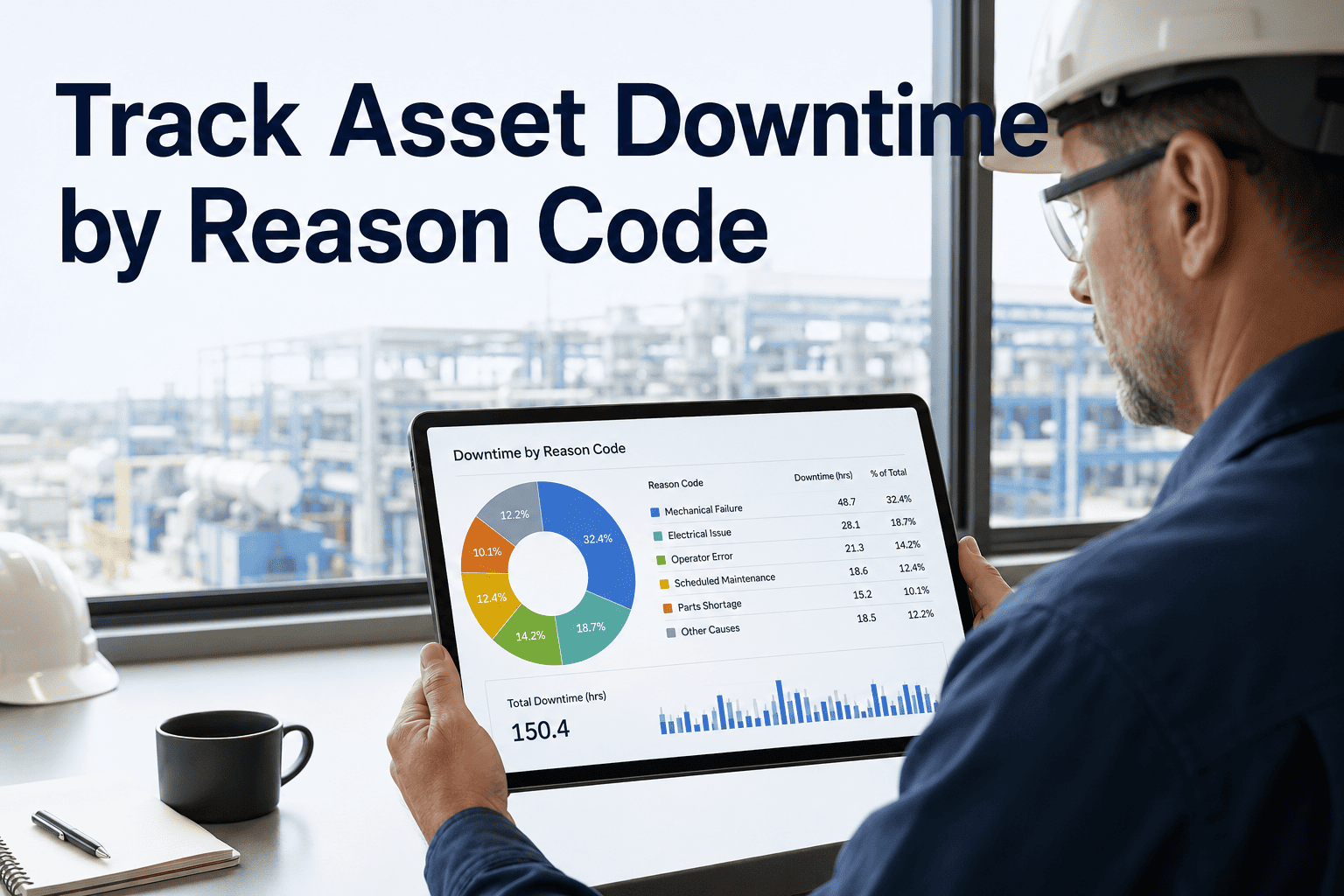

Deployment ROI: Standalone Dashboard vs. CMMS-Integrated Pipeline

The difference between IoT predictive maintenance that delivers ROI and IoT that becomes an expensive science project is the CMMS pipeline. Dashboard-only deployments produce data that maintenance teams ignore. CMMS-integrated pipelines produce work orders that maintenance teams execute. The cost comparison below demonstrates why the integration layer — not the sensors themselves — determines programme success.

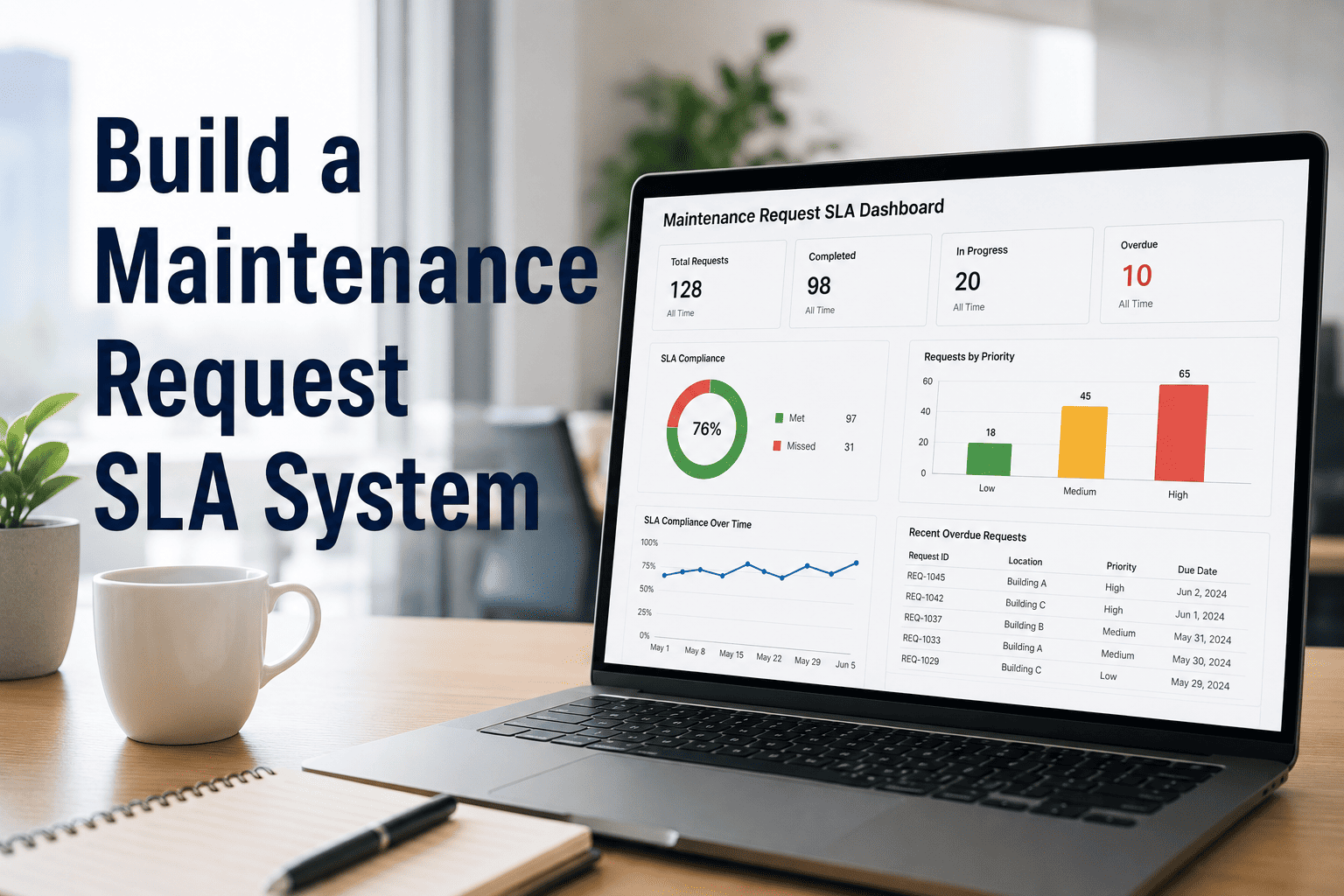

| Metric | No IoT (Reactive) | IoT + Dashboard Only | IoT + CMMS Pipeline |
|---|---|---|---|
| Unplanned Downtime | $2.4M/yr (baseline) | $1.8M/yr (25% reduction) | $480K/yr (80% reduction) |
| Alert-to-Repair Time | N/A (no alerts) | Days to weeks (manual) | Under 24 hours (automated) |
| Finding-to-WO Conversion | 0% (no findings) | 15-30% (manual entry) | 100% (auto-generated) |
| Sensor Data Utilisation | N/A | Viewed by 1-2 engineers | Drives all PdM work orders |
| 12-Month ROI | N/A | Negative (cost without return) | 3x-8x investment recovery |
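The downtime figures in the table follow directly from the $2.4M baseline, as the sketch below checks. The last step is an assumption: the table does not say how the 3x-8x figure is calculated, so the code reads it as annual savings divided by programme investment and derives the investment range that reading would imply.

```python
BASELINE_DOWNTIME = 2_400_000  # dollars per year, from the table


def downtime_after(baseline: int, reduction_pct: int) -> float:
    """Annual downtime cost remaining after a percentage reduction."""
    return baseline * (100 - reduction_pct) / 100


def implied_investment(annual_savings: float, roi_multiple: float) -> float:
    """Investment implied by a multiple, ASSUMING multiple = savings / investment."""
    return annual_savings / roi_multiple


dashboard_only = downtime_after(BASELINE_DOWNTIME, 25)   # 1.8M per year
cmms_pipeline = downtime_after(BASELINE_DOWNTIME, 80)    # 480K per year
savings = BASELINE_DOWNTIME - cmms_pipeline              # 1.92M per year

print(f"Dashboard only: ${dashboard_only:,.0f}/yr")
print(f"CMMS pipeline:  ${cmms_pipeline:,.0f}/yr, saving ${savings:,.0f}/yr")
print(f"Implied investment at 8x: ${implied_investment(savings, 8):,.0f}")
print(f"Implied investment at 3x: ${implied_investment(savings, 3):,.0f}")
```

Under that reading, the 3x-8x range corresponds to a programme cost of roughly $240,000 to $640,000 against $1.92M in avoided downtime. A different ROI definition would give different numbers.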

Expert Perspective: Why the Pipeline Matters More Than the Sensor

I've deployed IoT sensors in over 40 manufacturing plants across 8 industries. The pattern is consistent: facilities that buy sensors first and worry about integration later fail. Facilities that design the CMMS work order pipeline first and select sensors to feed it succeed. The sensor is the easy part — it's a $200 device that measures physics. The hard part is turning that measurement into a dispatched, completed, and verified maintenance action that prevents a $50,000 failure. That's an architecture problem, not a hardware problem. Every successful deployment I've seen started with the question "how does this sensor's data become a work order?" and worked backwards to select the right hardware, protocol, edge processing, and CMMS integration. The failures all started with "let's install sensors and see what we get."

Facilities that follow this architecture — FMEA-driven sensor selection, RF-validated wireless protocols, properly sized edge computing, and CMMS pipeline integration — achieve 80%+ reduction in unplanned downtime within 12 months. The sensor technology is mature and proven; the differentiator is the deployment architecture that connects sensor data to maintenance action. Schedule a consultation to design your IoT predictive maintenance architecture.

Your First 90 Days: From Zero to Predictive

A phased 90-day deployment delivers first prevented failures within weeks, not months. Start with 5-10 critical assets, prove the sensor-to-work-order pipeline, document ROI, then expand. Attempting to instrument an entire plant at once is a common cause of deployment failure. The roadmap below structures the deployment for rapid, measurable results that justify programme expansion.

For facilities ready to move from reactive maintenance to sensor-driven predictive operations, the path is clear: match sensors to failure modes first, validate wireless coverage second, size edge computing third, and connect every anomaly to a CMMS work order fourth. Book a consultation to structure your IoT deployment for measurable predictive maintenance results.