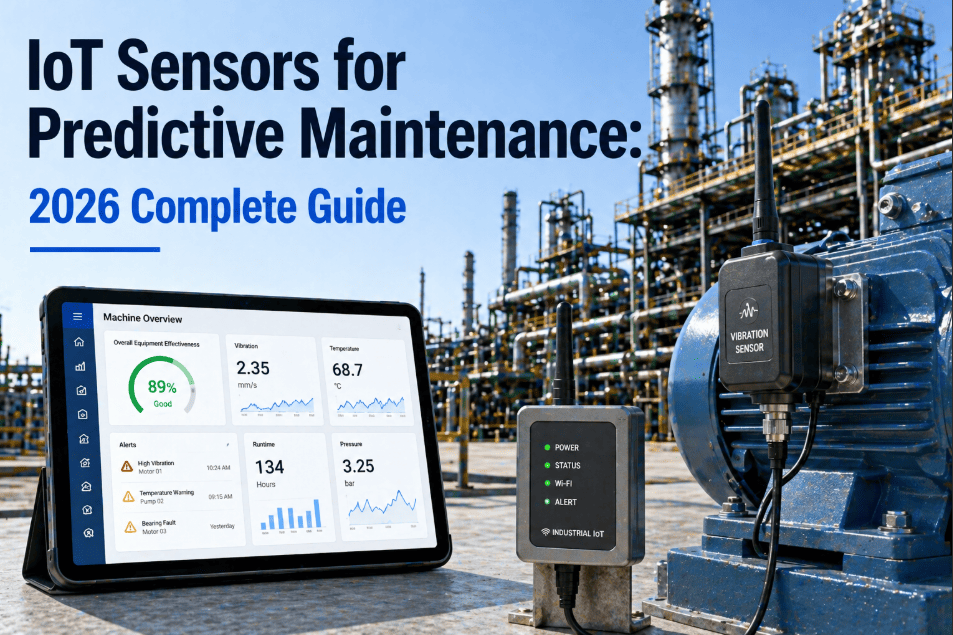

When a warehouse robot's servo motor starts drawing anomalous current at 2 AM, the difference between a $200 scheduled repair and a $45,000 emergency shutdown is measured in milliseconds—not minutes. Sending raw telemetry to the cloud for analysis introduces latency that turns predictive maintenance into reactive firefighting. In 2026, the standard for robotic fleet maintenance is IoT edge computing: onboard and near-robot processors that analyse vibration, thermal, current, and positional data in real time, filter noise locally, and push only actionable maintenance alerts to the CMMS. If your robot fleet still streams every sensor reading to a centralised server and waits for cloud-based decisions, you are building maintenance intelligence on a foundation of delay. The difference between operations that prevent failures and those that chase them is where the computing happens. Talk to our team about deploying edge-first maintenance intelligence across your robotic fleet.

Technology Guide — 2026 Edition

Top IoT Edge Computing for Real-Time Robot Maintenance Decisions 2026

IoT edge computing processes maintenance data in real-time on robots—CMMS receives only actionable alerts, not raw noise.

Edge Computing Maturity Model for Robotic Maintenance

Level 5: Autonomous (Self-Healing)

Level 4: Predictive (Edge AI)

Level 3: Conditional (Edge Filter)

Level 2: Connected (Cloud-Only)

Level 1: Manual (No IoT)

<50ms: edge inference latency, vs. a 2-8 second cloud round-trip for maintenance decisions

92%: reduction in data transmitted to cloud through intelligent edge filtering

3–6x: ROI within 12 months from prevented failures and bandwidth savings

85%: of robotic fault patterns detectable at the edge without cloud dependency

Why Edge Computing Outperforms Cloud-Only Maintenance

Every robot generates thousands of sensor readings per second—motor currents, joint torques, vibration signatures, thermal profiles, navigation errors, and battery states. Streaming all of this to the cloud creates bandwidth bottlenecks, latency delays, and cloud compute costs that scale linearly with fleet size. Edge computing solves this by running inference models directly on or beside the robot, making maintenance decisions in milliseconds and forwarding only confirmed anomalies to the CMMS. The result: faster detection, lower costs, and maintenance intelligence that works even when connectivity drops.

What Edge-First Maintenance Enables

On-Robot Inference

ML models embedded in robot controllers analyse motor current, vibration, and thermal data locally—detecting anomalies in under 50ms without network dependency.

Intelligent Data Filtering

Edge processors compress, deduplicate, and filter 92% of raw telemetry—transmitting only statistically significant deviations and confirmed alerts to cloud/CMMS.

Sub-Second Decisions

Critical maintenance decisions—slow-down, safe-stop, reroute—execute in milliseconds at the edge versus 2-8 seconds via cloud round-trip latency.

Offline Resilience

Edge models operate independently during network outages, connectivity drops, or bandwidth congestion—maintenance intelligence never goes dark.

CMMS Alert Injection

Only validated, context-rich alerts reach the CMMS—auto-generating work orders with fault classification, severity score, and recommended action attached.

Bandwidth Cost Reduction

Transmitting alerts instead of raw streams cuts cloud ingestion costs by 80-95%, making fleet-scale predictive maintenance economically viable.
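
The filtering idea described above can be sketched in a few lines. This is a minimal illustration, not a production filter: it assumes a single scalar signal (for example motor current), and the `EdgeFilter` class, the rolling z-score technique, the window size, and the cut-off of 4 standard deviations are all hypothetical choices made for the example.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeFilter:
    """Forward only readings that deviate strongly from a rolling baseline."""

    def __init__(self, window: int = 50, z_threshold: float = 4.0, warmup: int = 10):
        self.history = deque(maxlen=window)  # recent readings judged normal
        self.z_threshold = z_threshold       # hypothetical cut-off, tune per signal
        self.warmup = warmup                 # readings needed before alerting

    def process(self, value: float):
        """Return an alert dict for a significant deviation, otherwise None."""
        if len(self.history) >= self.warmup:
            baseline = mean(self.history)
            spread = max(pstdev(self.history), 1e-9)  # guard against a flat signal
            z = (value - baseline) / spread
            if abs(z) >= self.z_threshold:
                # Anomalous readings are reported and kept out of the baseline.
                return {"value": value, "baseline": baseline, "z_score": z}
        self.history.append(value)
        return None


# Synthetic motor-current trace (amps): steady ripple followed by one spike.
edge = EdgeFilter()
readings = [2.0 + 0.01 * (i % 5) for i in range(200)] + [3.5]
alerts = [a for a in map(edge.process, readings) if a is not None]
print(f"{len(readings)} readings in, {len(alerts)} alert(s) forwarded")
```

Only the returned alert dictionaries would leave the robot; the normal readings stay in the local rolling window and are never transmitted.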

The Edge Computing Stack: Technology Integration Map

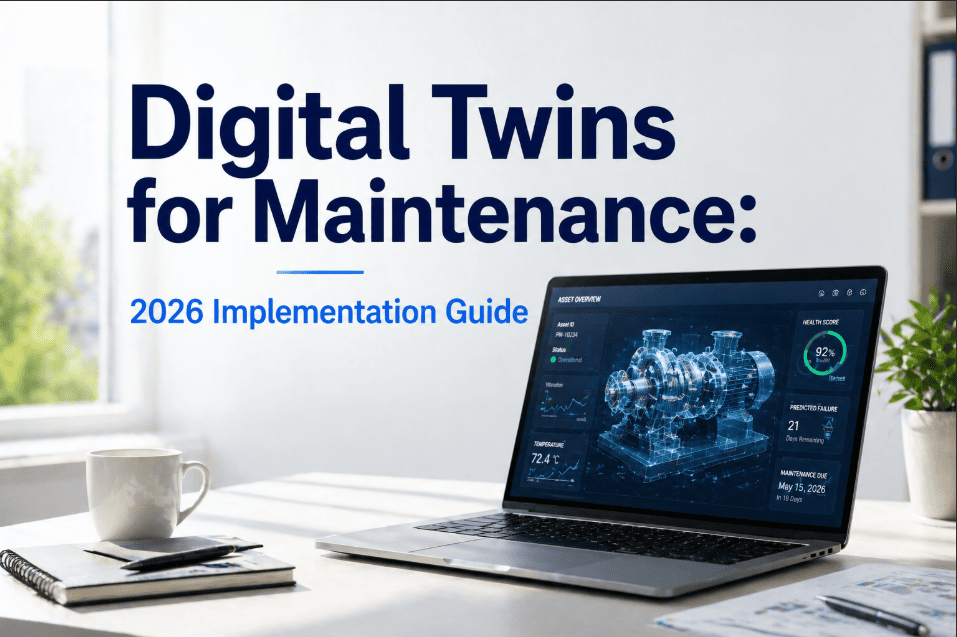

No single layer handles the full pipeline from raw sensor data to CMMS work order. A robust edge maintenance architecture layers hardware, firmware, inference engines, communication protocols, and CMMS connectors into a coherent stack. Understanding each layer is essential for selecting the right combination of edge hardware, AI frameworks, and integration patterns for your robotic fleet.

Edge Stack Layers & Integration Points

Sensor Layer

Components: Motor Current Signatures (Critical), MEMS Vibration / IMU (High), Thermal & Proximity Arrays (High)

Source: Robot Controllers & Add-On IoT

Output: Raw Multi-Channel Streams

Edge Hardware

Components: NVIDIA Jetson / Orin Modules (Critical), Industrial ARM SBCs (High), FPGA Accelerator Cards (Medium)

Function: Local Compute & Inference

Output: Sub-50ms Decision Latency

AI Inference Engine

Components: TensorRT / ONNX Runtime (Critical), Anomaly Detection Models (High), RUL Prediction Algorithms (High)

Function: Pattern Recognition & Classification

Output: Fault Labels & Severity Scores

Communication Layer

Components: MQTT / AMQP Messaging (Critical), OPC-UA / ROS2 Bridge (High), 5G / Wi-Fi 6E Uplink (High)

Function: Alert Routing & Data Sync

Output: Compressed Alert Payloads

CMMS Platform

Components: Auto Work Order Generation (Critical), Priority Scoring Engine (High), Fleet Health Dashboard (High)

Function: Decision & Action Orchestration

Output: Maintenance Intelligence
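
One way the communication layer's alert routing could tolerate connectivity drops is a store-and-forward buffer, sketched below. The `StoreAndForward` class and its `send` callback are assumptions made for illustration; in a real deployment `send` would wrap whatever MQTT, AMQP, or REST client the fleet uses and report whether delivery was confirmed.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffer alerts while the uplink is down and flush them in order later."""

    def __init__(self, send: Callable[[dict], bool], max_buffered: int = 1000):
        self.send = send                            # returns True on confirmed delivery
        self.pending = deque(maxlen=max_buffered)   # oldest alerts are dropped if full

    def publish(self, alert: dict) -> None:
        """Queue an alert and immediately try to deliver everything pending."""
        self.pending.append(alert)
        self.flush()

    def flush(self) -> int:
        """Deliver buffered alerts oldest-first; return how many were sent."""
        sent = 0
        while self.pending:
            if not self.send(self.pending[0]):      # uplink still down: keep order, stop
                break
            self.pending.popleft()
            sent += 1
        return sent
```

Because an alert is removed from the queue only after `send` reports success, a dropped connection delays alerts rather than losing them, up to the buffer limit.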

Process Robot Data at the Edge, Act in the CMMS

Oxmaint receives edge-processed maintenance alerts from any robotic fleet—transforming validated anomalies into prioritised work orders, predictive schedules, and fleet health dashboards without ingesting raw telemetry noise.

The 1–5 Edge Maturity: From Manual to Autonomous

To prioritise investments in edge computing for robotic maintenance, organisations must assess where they fall on the edge intelligence maturity curve. A standardised 1-5 scale translates complex IoT architecture into a clear roadmap for operations leadership. This framework moves teams from "No IoT" (Level 1) to "Autonomous Self-Healing Fleets" (Level 5) with measurable milestones at each stage.

Edge Computing Maturity Scale for Robotic Maintenance

Level 5: Autonomous (Self-Healing Fleets)

Edge AI triggers autonomous safe-stop, reroute, or degraded-mode operation. CMMS auto-schedules repair in optimal maintenance windows. Models retrain continuously from confirmed outcomes.

Action: Expand autonomous response coverage

Status: Goal State

Level 4: Predictive (Edge AI Inference)

On-robot ML models predict component failure 7-14 days ahead. Only actionable alerts with RUL estimates reach CMMS. Work orders auto-generated with edge-computed evidence packages.

Action: Tune models & expand to non-critical assets

Status: High Reliability

Level 3: Conditional (Edge Filtering Active)

Edge gateways filter raw telemetry and apply threshold-based rules. Alerts based on fixed limits with basic anomaly flags. CMMS integration is partial; some manual interpretation required.

Action: Deploy ML inference at edge layer

Status: Standard

Level 2: Connected (Cloud-Only Processing)

All telemetry streamed to cloud for analysis. High bandwidth costs, 2-8 second latency for decisions. Offline robots lose all monitoring capability. CMMS alerts delayed by processing queue.

Action: Deploy edge gateway with local processing

Status: Inefficient

Level 1: Manual (No IoT Monitoring)

No sensor data collection. Robots run until failure or scheduled manual inspection. Emergency repairs dominate. No visibility into fleet degradation patterns. Safety risk from undetected faults.

Action: Immediate sensor deployment + edge gateway

Status: High Risk
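
To make the Level 5 behaviour more concrete, the sketch below maps a 1-10 severity score to one of the local responses named in this guide (slow-down, reroute, safe-stop). The `choose_response` function and its cut-off values are hypothetical; real thresholds would come out of a safety assessment for the specific robot and site.

```python
from enum import Enum


class Response(Enum):
    CONTINUE = "continue"
    SLOW_DOWN = "slow-down"
    REROUTE = "reroute"      # e.g. send the robot towards a service area
    SAFE_STOP = "safe-stop"


def choose_response(severity: int) -> Response:
    """Map a 1-10 severity score to a local action (cut-offs are illustrative)."""
    if not 1 <= severity <= 10:
        raise ValueError("severity must be between 1 and 10")
    if severity >= 9:
        return Response.SAFE_STOP
    if severity >= 7:
        return Response.REROUTE
    if severity >= 4:
        return Response.SLOW_DOWN
    return Response.CONTINUE


for score in (2, 5, 8, 10):
    print(score, choose_response(score).value)
```

The point of the design is that this decision needs no network call, so it can run inside the edge latency budget while the CMMS is notified afterwards.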

The Cost of Cloud-Only Latency

When maintenance decisions depend on cloud round-trips, every second of latency is a second closer to failure. A bearing anomaly that the edge detects in 50ms takes 2-8 seconds to process through cloud pipelines, and when processing queues or connectivity drops stretch that delay into minutes, hours, or a full shift, the cost of the fault climbs steeply as it progresses. The "Latency Cost Model" below shows how delayed decisions compound from minor planned interventions to catastrophic production losses.

Cost of Decision Latency Over Time

Cost multiplier relative to real-time edge detection and response

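| Level | Stage | Typical cost | Cost multiplier |
|---|---|---|---|
| 5 | Edge Real-Time | $150 (Planned PM) | 1x |
| 4 | Minutes Delay | $800 (Scheduled Fix) | 5x |
| 3 | Hours Delay | $8,000 (Urgent Repair) | 53x |
| 2 | Shift Delay | $45,000 (Emergency + Downtime) | 300x |
| 1 | Catastrophic | $350K+ (Replacement + Loss) | 2300x |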

Edge computing catches faults at Level 4-5, preventing the exponential costs of delayed cloud-only decisions at Levels 1-2.
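
The multipliers in the Latency Cost Model follow directly from dividing each stage's cost by the $150 real-time baseline. The short script below reproduces that arithmetic; it uses the lower bound of "$350K+" for the catastrophic stage, and the published multipliers are rounded versions of these ratios.

```python
BASELINE = 150  # planned PM cost at real-time edge detection, in dollars

stages = [
    ("Edge Real-Time", 150),
    ("Minutes Delay", 800),
    ("Hours Delay", 8_000),
    ("Shift Delay", 45_000),
    ("Catastrophic", 350_000),  # the model states "$350K+"; lower bound used here
]


def multiplier(cost: float, baseline: float = BASELINE) -> float:
    """Cost of a delayed response relative to the real-time baseline."""
    return cost / baseline


for name, cost in stages:
    print(f"{name:<15} ${cost:>8,}  {multiplier(cost):,.1f}x")
```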

Turn Edge Alerts into Maintenance Intelligence

Oxmaint ingests edge-processed alerts from robotic fleets into a single maintenance command centre—automatically prioritising work orders, scheduling predictive repairs, and giving your team verified intelligence instead of raw data noise.

Deployment Lifecycle: The 5-Phase Edge Integration Cycle

A robust edge-first maintenance system follows a disciplined deployment lifecycle—from fleet telemetry audit through edge hardware provisioning, model deployment, CMMS integration, and continuous model improvement. This phased approach ensures edge compute is placed where it delivers the highest diagnostic value, data pipelines are reliable, and maintenance teams trust the system's outputs from day one.

Edge Integration Lifecycle

1

Fleet Telemetry Audit & Failure Mode Mapping

Inventory all sensor data available from each robot type—motor currents, joint torques, temperatures, navigation errors. Map dominant failure modes to determine which signals require edge inference versus simple threshold monitoring.

Assessment Phase

2

Edge Hardware Provisioning & Network Design

Select edge compute modules (NVIDIA Jetson, industrial ARM, FPGA) based on inference requirements and robot form factor. Design local network topology with MQTT brokers, failover paths, and bandwidth allocation for alert-only uplinks.

Deployment Phase

3

Model Training & Edge Deployment

Train anomaly detection and RUL models on historical failure data. Optimise for edge inference (quantisation, pruning, TensorRT conversion). Deploy to fleet edge nodes with OTA update pipeline for continuous model improvements.

Build Phase

4

CMMS Integration & Alert Calibration

Connect edge alert output to Oxmaint CMMS for automated work order generation. Calibrate alert thresholds during a learning period. Tune sensitivity to minimise false positives while catching every real fault pattern.

Calibration Phase

5

Feedback Loop & Autonomous Expansion

Feed confirmed fault outcomes back into edge models to improve accuracy. Expand coverage to lower-criticality subsystems. Progress from threshold alerting to predictive RUL estimation and autonomous safe-mode responses.

Continuous Phase
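
The alert calibration step in phase 4 can be illustrated with a simple percentile rule. This is one possible approach under stated assumptions, not a description of any particular product: it assumes the edge model emits a numeric anomaly score, that scores collected during the learning period come from healthy operation, and that an alert fires when a score is strictly above the threshold. The function names and the 1% false-positive budget are hypothetical.

```python
def calibrate_threshold(healthy_scores: list[float],
                        max_false_positive_rate: float = 0.01) -> float:
    """Pick the lowest cut-off that keeps healthy-data alerts within the budget."""
    if not healthy_scores:
        raise ValueError("need scores from the learning period")
    ordered = sorted(healthy_scores)
    # Number of healthy samples that may sit above the threshold.
    allowed = int(len(ordered) * max_false_positive_rate)
    return ordered[len(ordered) - 1 - allowed]


def false_positive_rate(healthy_scores: list[float], threshold: float) -> float:
    """Share of healthy samples that would have raised an alert."""
    return sum(s > threshold for s in healthy_scores) / len(healthy_scores)


# Synthetic learning-period scores, evenly spread between 0 and 1.
scores = [i / 1000 for i in range(1000)]
threshold = calibrate_threshold(scores)
print(threshold, false_positive_rate(scores, threshold))
```

A lower threshold catches more real faults but raises more false alarms, so the budget is the knob to revisit as confirmed outcomes come back from the CMMS.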

Expert Perspective: The Edge Computing Advantage

"We had 140 AMRs streaming full telemetry to the cloud. Our monthly data ingestion bill was enormous, and alerts arrived 4-6 seconds after the event. After deploying edge inference on each robot, we cut cloud data transfer by 93% and dropped decision latency to 40ms. The CMMS now receives only confirmed anomalies with fault classification, severity, and RUL estimate attached. Our technicians stopped chasing false alarms and started preventing real failures. In the first quarter, we prevented 18 unplanned stops that would have cost $12,000-$45,000 each."

— Director of Automation, Major Logistics & Distribution Centre

93%: reduction in cloud data transfer costs after edge deployment

18: unplanned robot stops prevented in the first quarter

40ms: average edge inference latency for fault classification

Operations achieving true predictive robotic maintenance share a common architecture: intelligence at the edge, action in the CMMS. By processing sensor data locally, filtering noise before it reaches the network, and delivering only verified maintenance alerts to the work order system, these teams eliminate the latency tax that makes cloud-only approaches reactive by design. When every robot thinks locally and reports intelligently, the full fleet operates as a self-monitoring system. Start building your edge-first maintenance architecture with the CMMS platform built for real-time robotic fleet intelligence.

Build Your Edge-First Robot Maintenance System

Oxmaint provides the unified CMMS platform for edge-driven predictive maintenance—ingesting validated alerts from any edge compute layer to deliver prioritised work orders, fleet health dashboards, and maintenance intelligence that eliminates cloud-latency bottlenecks.

Frequently Asked Questions

What is IoT edge computing for robotic maintenance?

IoT edge computing for robotic maintenance means running data processing and AI inference models directly on or beside the robot—rather than sending all raw sensor data to the cloud for analysis. Edge processors analyse motor currents, vibration signatures, thermal data, and positional accuracy locally, in under 50 milliseconds. Only confirmed anomalies, fault classifications, and remaining useful life (RUL) estimates are transmitted to the CMMS. This eliminates cloud latency, reduces bandwidth costs by 80-95%, and ensures maintenance intelligence operates even during network outages.

Why is edge processing better than cloud-only for robot maintenance?

Cloud-only architectures introduce 2-8 seconds of round-trip latency for every maintenance decision, an eternity when a servo motor is overheating or a bearing is failing. Edge computing reduces this to under 50ms. Beyond speed, edge processing cuts cloud data ingestion costs by 80-95% (since only alerts, not raw streams, are transmitted), keeps working during network outages, and lets the fleet grow without a proportional increase in cloud costs. For safety-critical responses like autonomous safe-stop or degraded-mode operation, edge-level latency is the only viable option.

What types of robots support edge computing for maintenance?

Any robot with onboard compute capability or that can connect to a nearby edge gateway supports edge maintenance: autonomous mobile robots (AMRs), automated guided vehicles (AGVs), collaborative robots (cobots), industrial robot arms (FANUC, ABB, KUKA), quadruped inspection robots (Boston Dynamics Spot, ANYmal), warehouse picking systems, and drone fleets. Modern robots typically have ARM or x86 processors onboard that can run lightweight inference models. For older robots, a nearby edge gateway (NVIDIA Jetson, Raspberry Pi industrial, or FPGA module) connected via Ethernet or Wi-Fi provides the same capability.

How does edge-processed data integrate with a CMMS?

The edge processor runs inference models locally and generates structured alert payloads containing: fault classification (e.g., "bearing wear stage 2"), severity score (1-10), estimated RUL, affected component ID, and supporting evidence (vibration spectrum snapshot, thermal reading). These payloads are transmitted via MQTT or REST API to the CMMS—in this case Oxmaint—which auto-generates a prioritised work order with all evidence attached. The CMMS tracks repair execution, and confirmed outcomes feed back to edge models for continuous accuracy improvement.
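
As a rough illustration of the payload described above, the sketch below defines an alert structure with the listed fields and turns it into a work-order draft. The field names, the `EdgeAlert` and `draft_work_order` names, and the priority bands are assumptions made for the example; they are not the Oxmaint API or any published schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class EdgeAlert:
    """Hypothetical alert schema built from the fields listed above."""
    component_id: str
    fault_class: str           # e.g. "bearing wear stage 2"
    severity: int              # 1-10
    rul_days: float            # estimated remaining useful life
    evidence: dict = field(default_factory=dict)

    def __post_init__(self):
        if not 1 <= self.severity <= 10:
            raise ValueError("severity must be between 1 and 10")

    def to_json(self) -> str:
        """Serialise the alert for transport over MQTT or a REST call."""
        return json.dumps(asdict(self), sort_keys=True)


def draft_work_order(alert: EdgeAlert) -> dict:
    """Build a work-order draft; the priority bands are illustrative only."""
    if alert.severity >= 8:
        priority = "urgent"
    elif alert.severity >= 5:
        priority = "high"
    else:
        priority = "routine"
    return {
        "asset": alert.component_id,
        "title": f"{alert.fault_class} (severity {alert.severity}/10)",
        "priority": priority,
        "due_in_days": alert.rul_days,   # repair should land before estimated end of life
        "attachments": alert.evidence,
    }


alert = EdgeAlert("amr-017/drive-left", "bearing wear stage 2", 6, 9.5,
                  {"motor_temp_c": 71.2})
print(alert.to_json())
print(draft_work_order(alert))
```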

What is the ROI timeline for edge computing in robotic maintenance?

Most operations see payback within 8-12 months. The ROI comes from three areas: prevented unplanned failures (a single prevented AMR stop saves $12,000-$45,000 in lost throughput and emergency repair), cloud bandwidth and compute cost reduction (80-95% less data transmitted), and optimised maintenance scheduling (eliminating unnecessary PMs while catching real degradation early). Additionally, edge-enabled autonomous responses—safe-stop before catastrophic failure—prevent the $100K-$500K replacement costs that cloud-latency delays cannot avoid. Facilities with 50+ robots commonly save $150,000-$600,000 annually through edge-first maintenance intelligence.
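
The payback claim can be sanity-checked with simple arithmetic. The sketch below uses only the figures quoted in this answer (8-12 months payback, $150,000-$600,000 annual savings) to back out the investment range those numbers would imply. The function names are hypothetical and the result is a back-of-envelope implication, not a quoted price.

```python
def payback_months(investment: float, annual_savings: float) -> float:
    """Months needed for savings to cover the investment."""
    return 12 * investment / annual_savings


def implied_investment(annual_savings: float, months: float) -> float:
    """Investment that would be repaid in the given number of months."""
    return annual_savings * months / 12


# Ranges quoted above: $150K-$600K saved per year, payback in 8-12 months.
low = implied_investment(150_000, 8)
high = implied_investment(600_000, 12)
print(f"Implied investment range: ${low:,.0f} to ${high:,.0f}")
```

If a quoted deployment cost falls well outside the implied range for a fleet of comparable size, the payback estimate deserves a closer look.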