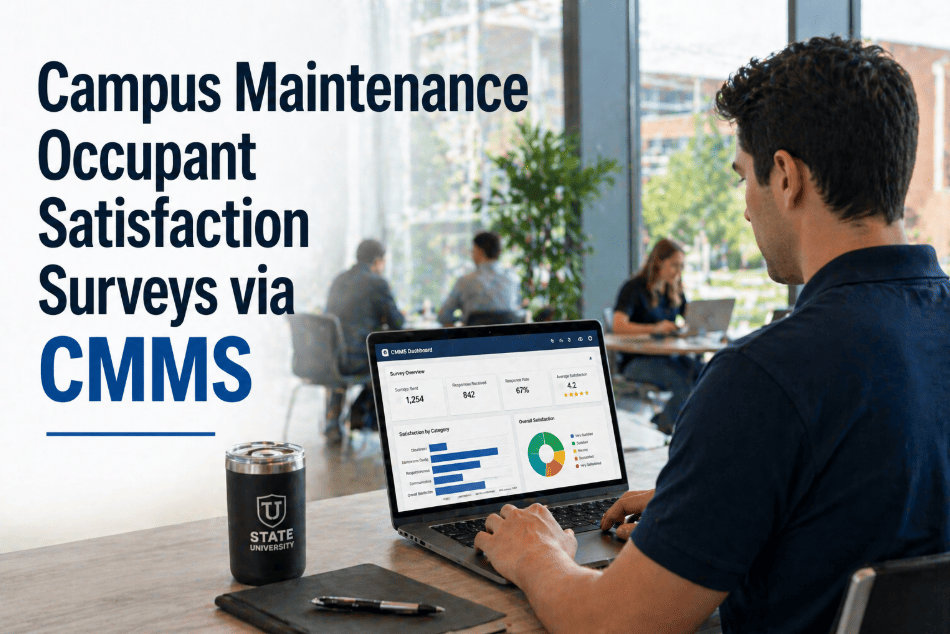

A student submits a facilities request at 9:47 AM: the heating in her seminar room has been out since Monday. A technician resolves the work order at 2:15 PM the same day, and the work order closes in the CMMS. Then nothing happens. The student never hears that the heating was fixed; she finds out only because the room is warm when she returns on Wednesday. The maintenance team performed well, but the communication loop never closed. Occupant satisfaction surveys sent automatically after work order (WO) closure are the mechanism that closes that loop, converting a good maintenance outcome into a documented, measurable, and improvable service experience. Campuses running automated post-WO satisfaction feedback report a 34% improvement in occupant satisfaction scores within 18 months, along with significantly better visibility into which technicians, building types, and request categories are consistently underperforming. If your campus has no systematic way to measure how occupants experience maintenance service, start a free trial with Oxmaint or book a demo to see automated satisfaction surveys in action.

Campus CMMS · Occupant Feedback · Service Quality

Campus Maintenance Occupant Satisfaction Surveys — How Automated CMMS Feedback Loops Transform Service Quality

Two questions. Sent automatically. Thirty seconds to complete. The occupant satisfaction survey that triggers when a work order closes is the simplest performance management system in campus facilities — and the most consistently underused. Here is how to build it and what it delivers.

34%

Improvement in occupant satisfaction scores within 18 months of automated survey implementation

2 Qs

Optimal survey length — "Was the issue resolved?" and "How satisfied were you with the service?" — maximizes response rate

68%

Average response rate for automated post-WO surveys vs. 12% for periodic manual satisfaction surveys

The Feedback Gap

Why Campus Facilities Teams Operate Blind to Occupant Experience

Most campus maintenance operations measure what they can see — work order volume, completion time, PM compliance. What they cannot see without systematic feedback: whether the technician was professional, whether the issue was actually resolved, whether the occupant felt their request was taken seriously. Four organizational blind spots follow.

01

Work Orders Closed Before Issues Are Resolved

A technician replaces an HVAC filter. The room is still cold because the thermostat sensor is also faulty. The work order closes as "completed." The occupant submits a new request three days later. Without a "was it resolved?" question sent at closure, the CMMS never knows the first intervention failed. Repeat-contact rate on the same issue is invisible without the feedback loop.

02

Poor Service Technicians Protected by Absent Data

A technician who consistently resolves work orders quickly but leaves spaces in worse condition than he found them, communicates poorly, and dismisses occupant concerns generates no negative signal in the CMMS — because work order closure time does not measure service quality. Without satisfaction scores linked to technician IDs, chronically poor performers are invisible to management.

03

Annual Satisfaction Surveys Measure the Wrong Thing

A campus-wide annual facilities satisfaction survey asks occupants to rate their general experience over the past 12 months. Most occupants cannot accurately recall specific maintenance interactions from 6 months ago. The survey measures impressions and biases, not actual service quality events. A 2-question survey sent 24 hours after work order closure measures the actual event with the actual occupant while memory is accurate.

04

Service Improvements Have No Performance Baseline

A facilities director implements a new technician training program and wants to know if it improved service quality. Without pre-training satisfaction score baselines by technician, the program cannot be evaluated. Without post-training scores, improvement cannot be documented. The investment in training is unmeasurable — and therefore unjustifiable in the next budget cycle.

Survey Design

The Two-Question Survey That Actually Gets Answered

Survey design determines response rate. Campus facilities satisfaction surveys that fail almost always fail because they are too long. Research consistently shows that surveys exceeding 3 questions see response rates drop below 20%. The optimal post-work-order survey is exactly two questions — one resolution verification, one satisfaction rating — sent via mobile-friendly link within 24 hours of work order closure.

Q1

Was your maintenance issue fully resolved?

Yes — fully resolved

Partially resolved

No — still an issue

Q2

How satisfied were you with the maintenance service?

Scale of 1 to 5: 1 = Very dissatisfied, 5 = Very satisfied

Optional: Any additional comments? (text field)

68%

Response rate

2-question format vs. 12% for multi-page surveys

24hr

Optimal send timing

Within 24 hours of closure — memory accuracy highest

30 sec

Completion time

Mobile-optimized single-page format — no login required

Auto

Triggered by CMMS

No manual step — survey sends when work order status changes to "closed"

Automated Feedback Loops in Oxmaint

Every Closed Work Order Triggers a Survey. Every Survey Feeds a Performance Dashboard.

Oxmaint's satisfaction survey module sends automatically when work orders close, routes negative responses to supervisors immediately, and aggregates scores by technician, building, and request category — turning feedback into actionable performance data.

Platform Capabilities

How Oxmaint Manages Campus Occupant Satisfaction Feedback

Automated

Work Order Closure Trigger

The survey sends automatically within a configured time window after the work order status changes to closed, with no manual step and no forgotten sends. The trigger is configured per work order category: for example, exclude internal PM from the survey queue and include all occupant-requested repairs.

100% send rate on configured work order types — no manual oversight required
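The trigger rule described above can be sketched in a few lines. This is an illustration only, not Oxmaint's implementation: the `WorkOrder` fields, the category names, and the four-hour `SEND_DELAY` are all assumptions chosen to show the shape of the rule.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical category names; a real CMMS defines its own.
SURVEYED_CATEGORIES = {"occupant_request"}
# Assumed delay after closure; must stay inside the 24-hour window.
SEND_DELAY = timedelta(hours=4)


@dataclass
class WorkOrder:
    wo_id: str
    category: str                      # e.g. "occupant_request", "internal_pm"
    status: str                        # e.g. "open", "closed"
    requester_email: Optional[str]
    closed_at: Optional[datetime] = None


def survey_send_time(wo: WorkOrder) -> Optional[datetime]:
    """Return when the survey should go out, or None if none applies."""
    if wo.status != "closed" or wo.closed_at is None:
        return None                    # only closed work orders trigger
    if wo.category not in SURVEYED_CATEGORIES:
        return None                    # internal PM and similar are excluded
    if not wo.requester_email:
        return None                    # nobody to send the survey to
    return wo.closed_at + SEND_DELAY
```

Keeping the category filter in one place means excluding a work order type from the survey queue is a configuration change rather than a code change.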

Escalation

Low-Score Immediate Alert

When a satisfaction score of 1 or 2 is submitted, or when "No — still an issue" is selected, an immediate alert routes to the supervising facilities manager with the work order context, technician assigned, and occupant comment. Recovery response within 2 hours is the target standard.

Service failures identified and escalated within minutes of occupant response
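As a rough sketch of the escalation rule, assuming survey answers are stored as simple codes (`"yes"`, `"partial"`, `"no"` are hypothetical values, as are the field names), the check and the alert payload might look like this:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SurveyResponse:
    wo_id: str
    resolved: str        # assumed codes: "yes", "partial", or "no"
    score: int           # 1 (very dissatisfied) to 5 (very satisfied)
    comment: str = ""


def needs_escalation(r: SurveyResponse) -> bool:
    """Escalate on a score of 1 or 2, or on a 'still an issue' answer."""
    return r.score <= 2 or r.resolved == "no"


def build_alert(r: SurveyResponse, technician_id: str) -> Optional[dict]:
    """Return the supervisor alert payload, or None if no alert is needed."""
    if not needs_escalation(r):
        return None
    return {
        "wo_id": r.wo_id,
        "technician_id": technician_id,
        "score": r.score,
        "resolved": r.resolved,
        "comment": r.comment,
        # Only an unresolved issue calls for reopening the work order.
        "reopen_work_order": r.resolved == "no",
    }
```

Note that a "partially resolved" answer with a score of 3 or higher does not escalate under this rule; a campus could tighten that if partial fixes turn out to predict repeat requests.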

Technician

Per-Technician Performance Scores

Satisfaction scores are aggregated by technician, with minimum response volume thresholds to ensure statistical validity. Rolling 90-day scores, trend direction, and score distribution are visible to supervisors. Technicians with declining scores are flagged for a coaching conversation before any formal performance process.

Objective performance data replaces subjective supervisor impressions
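A minimal sketch of a rolling 90-day score with a volume threshold is shown below. The function name and input shape are assumptions; the default of 30 responses borrows the per-quarter minimum mentioned in the questions section of this article.

```python
from datetime import datetime, timedelta
from statistics import mean
from typing import Iterable, Optional, Tuple

WINDOW = timedelta(days=90)
MIN_RESPONSES = 30   # below this, the average is not reported


def rolling_score(
    responses: Iterable[Tuple[datetime, int]],
    as_of: datetime,
    min_responses: int = MIN_RESPONSES,
) -> Optional[float]:
    """Average the scores received in the 90 days up to `as_of`.

    `responses` holds (received_at, score) pairs for one technician.
    Returns None when there are too few responses to be meaningful.
    """
    recent = [s for ts, s in responses if as_of - WINDOW < ts <= as_of]
    if len(recent) < min_responses:
        return None
    return round(mean(recent), 2)
```

Returning `None` instead of a number for low-volume technicians keeps a handful of surveys from being read as a trend.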

Building

Satisfaction Scores by Building and Category

Scores segmented by building, department, request category, and time of year. Identifies systemic issues — a specific residence hall consistently scoring below 3.2 signals a pattern requiring structural investigation, not individual technician coaching.

Systemic vs. individual service failures distinguished by data pattern
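One way to separate a systemic building problem from an individual one is to check whether a low building average is shared across technicians. The heuristic below (building average under 3.2 and at least two technicians individually under 3.2 in that building) is an assumption made for illustration, not a rule stated by Oxmaint.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple

THRESHOLD = 3.2   # the example cut-off used in the text above


def systemic_buildings(
    rows: Iterable[Tuple[str, str, int]],
    threshold: float = THRESHOLD,
) -> List[str]:
    """rows are (building, technician_id, score) tuples."""
    per_building: Dict[str, Dict[str, List[int]]] = defaultdict(
        lambda: defaultdict(list)
    )
    for building, tech, score in rows:
        per_building[building][tech].append(score)

    flagged = []
    for building, by_tech in per_building.items():
        all_scores = [s for scores in by_tech.values() for s in scores]
        low_techs = [t for t, sc in by_tech.items() if mean(sc) < threshold]
        # Assumed heuristic: a low average shared by two or more
        # technicians points at the building, not at one person.
        if mean(all_scores) < threshold and len(low_techs) >= 2:
            flagged.append(building)
    return sorted(flagged)
```

A production version would also apply a minimum response count per building before flagging anything.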

Resolution

First-Contact Resolution Rate Tracking

Q1 response data (fully resolved / partially / not resolved) tracked against repeat work order submission rates for the same location. First-contact resolution rate becomes a measurable KPI — industry benchmark for campus facilities: 82% on first contact.

First-contact resolution tracked as a leading performance indicator
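The first-contact resolution rate can be computed directly from Q1 answers combined with repeat-request data. The sketch below assumes a strict definition, where a work order counts only if the occupant answered "fully resolved" and no repeat request followed; the answer codes and input shape are hypothetical.

```python
from typing import Iterable, Optional, Tuple


def first_contact_resolution_rate(
    records: Iterable[Tuple[str, bool]],
) -> Optional[float]:
    """records are (q1_answer, had_repeat_request) pairs.

    q1_answer uses assumed codes: "yes", "partial", or "no".
    Returns a fraction between 0 and 1, or None with no data.
    """
    total = 0
    resolved_first_time = 0
    for q1_answer, had_repeat in records:
        total += 1
        if q1_answer == "yes" and not had_repeat:
            resolved_first_time += 1
    return resolved_first_time / total if total else None
```

For example, 82 qualifying work orders out of 100 surveyed gives a rate of 0.82, which matches the 82% benchmark quoted above.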

Reporting

Board-Ready Satisfaction Reports

Monthly and annual satisfaction trend reports exportable in presentation format — campus-wide score trend, by-building breakdown, technician performance distribution, and first-contact resolution rate. Provides the occupant experience evidence that strategic planning and accreditation processes increasingly require.

Occupant satisfaction evidence for strategic planning and accreditation

The Full Loop

The Complete Occupant Satisfaction Feedback Loop — From Request to Improvement

Step 1

Occupant Submits Request

Student, faculty member, or staff submits maintenance request via CMMS portal, mobile app, or email-to-ticket. Auto-acknowledgment sent with reference number and estimated response time.

Step 2

Work Order Assigned and Completed

Technician receives and completes work order. Logs completion notes, parts used, and actual time. Changes work order status to "Closed" in mobile CMMS app at the asset location.

Step 3

Survey Triggers Automatically (Within 24 Hours)

CMMS detects work order closure and sends 2-question satisfaction survey to the original requester's email. Mobile-optimized, no login required, completes in 30 seconds.

Step 4

Response Logged and Scored

Survey response tagged to the work order, the technician, and the building. Score added to rolling performance averages. Text comments indexed for keyword analysis (recurring themes surface automatically).

Step 5

Low Scores Escalate Immediately

Scores of 1–2 or unresolved responses trigger immediate supervisor notification with work order context. Recovery contact made within 2 hours. Re-opened work order created automatically if issue is still present.

Step 6

Performance Data Drives Improvement

Monthly technician score reviews inform coaching conversations. Building-level patterns inform staffing and process decisions. Campus-wide trends inform strategic facilities planning and annual board reporting on occupant experience.
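The keyword analysis mentioned in Step 4 could start as simply as counting how many comments mention each word. This is a deliberately naive sketch: the stopword list and the `recurring_themes` function are assumptions, and a real deployment would likely use a proper text-analysis library.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Small illustrative stopword list; extend for real use.
STOPWORDS = {
    "the", "a", "an", "and", "is", "was", "it", "to", "in", "of",
    "still", "very", "i", "my", "but", "not", "again",
}


def recurring_themes(
    comments: Iterable[str], top_n: int = 3
) -> List[Tuple[str, int]]:
    """Return the words that appear in the most comments."""
    counts: Counter = Counter()
    for comment in comments:
        words = set(re.findall(r"[a-z']+", comment.lower())) - STOPWORDS
        counts.update(words)   # each word counts once per comment
    return counts.most_common(top_n)
```

Counting each word once per comment keeps a single long complaint from dominating the results.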

Before vs After

Manual Satisfaction Measurement vs. Automated CMMS Feedback Loop

| Dimension | Annual Campus Survey (Traditional) | Oxmaint Automated Post-WO Survey |
| --- | --- | --- |
| Survey frequency | Once per year | After every closed work order |
| Response rate | 8–15% typical | 68% average (event-linked timing) |
| Memory accuracy | 12-month recall, heavily biased | 24-hour recall, highly accurate |
| Technician attribution | Not possible (general impressions only) | Score linked to specific technician |
| Low score escalation | Discovered months later in aggregate | Immediate alert; same-day recovery possible |
| Resolution verification | Not measured | Q1 directly measures resolution |
| Performance improvement | Annual report (lagging indicator) | Monthly technician coaching data |

Measurable Outcomes

What Campus Facilities Teams Report After Implementing Automated Satisfaction Surveys

34%

Improvement in Satisfaction Scores

Within 18 months of automated survey implementation — behavioral change from technicians who know their work is being measured

68%

Survey Response Rate

Post-WO 2-question format vs. 12% for annual campus-wide surveys — statistically significant sample size within 60 days

82%

First-Contact Resolution Rate Target

Industry benchmark — campuses with automated feedback loops achieve and maintain this rate vs. 61% without systematic tracking

2hr

Average Recovery Response Time

From low-score submission to supervisor contact with the occupant — converting service failures into service recovery opportunities

Common Questions

Campus Occupant Satisfaction Surveys — Questions Answered

Should satisfaction surveys go to all occupants or only those who submitted requests?

Only to the original requester — the person who submitted the work order. This ensures the survey respondent has direct experience with the specific service event being measured, rather than general impressions. For work orders initiated by facilities staff rather than occupants (internal PM, inspection-triggered repairs), suppress the survey — these have no external requester to receive it. For contractor-completed work orders where the facilities manager is the intermediary, the facilities manager becomes the survey recipient with questions adapted to their contractor management role.

Book a demo to see how survey routing is configured in Oxmaint.

How do we prevent gaming, such as technicians closing work orders prematurely to avoid low scores?

Three safeguards prevent gaming. First, Q1 ("Was your maintenance issue fully resolved?") directly measures whether premature closure occurred: a high "not resolved" rate for a specific technician flags the behavior immediately. Second, the repeat work order submission rate for the same location within 14 days of closure is tracked separately, and a high repeat rate correlates with premature closure. Third, supervisor spot-check audits on a random 10% of closed work orders verify completion quality against work order notes. Together, these checks mean that closing work orders prematurely generates more evidence of a performance problem, not less.
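The second and third safeguards lend themselves to a short sketch. The `ClosedWO` record and both function names are hypothetical; the 14-day window and 10% audit fraction come from the answer above. Matching is by location only, so unrelated requests from the same room would also count as repeats.

```python
import random
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Optional

REPEAT_WINDOW = timedelta(days=14)
AUDIT_FRACTION = 0.10


@dataclass
class ClosedWO:
    wo_id: str
    technician: str
    location: str
    submitted_at: datetime
    closed_at: datetime


def repeat_rate_by_technician(orders: List[ClosedWO]) -> Dict[str, float]:
    """Share of each technician's closures followed by a new request
    at the same location within 14 days (simple O(n^2) scan)."""
    totals: Dict[str, int] = defaultdict(int)
    repeats: Dict[str, int] = defaultdict(int)
    for wo in orders:
        totals[wo.technician] += 1
        deadline = wo.closed_at + REPEAT_WINDOW
        if any(
            other.wo_id != wo.wo_id
            and other.location == wo.location
            and wo.closed_at < other.submitted_at <= deadline
            for other in orders
        ):
            repeats[wo.technician] += 1
    return {tech: repeats[tech] / totals[tech] for tech in totals}


def audit_sample(
    orders: List[ClosedWO],
    fraction: float = AUDIT_FRACTION,
    seed: Optional[int] = None,
) -> List[ClosedWO]:
    """Pick a random subset of closed work orders for spot checks."""
    if not orders:
        return []
    k = max(1, round(len(orders) * fraction))
    return random.Random(seed).sample(orders, k)
```

Passing a fixed `seed` makes an audit selection reproducible, which helps if a technician later asks how their work order was chosen.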

Can satisfaction score data be used in formal technician performance reviews?

Yes, with appropriate statistical validity thresholds. A minimum of 30 scored work orders per rolling quarter helps ensure the score reflects a pattern rather than isolated events. Oxmaint's per-technician reports include score distribution, trend direction, and volume context, so a 3.8 average on 12 surveys is presented differently from a 3.8 average on 186 surveys. HR and legal teams typically recommend that satisfaction scores be one input in performance reviews alongside supervisor observation, PM compliance rate, and work order completion quality, not the sole determinant. The data is most valuable as a coaching conversation starter.

How do we communicate the survey program to campus occupants so they participate?

Three communication touchpoints maximize participation. At work order submission, the auto-acknowledgment mentions that a brief satisfaction survey will be sent when the work is complete. In the survey email itself, the subject line references the specific work order number and building — making it immediately relevant rather than generic. On the campus facilities portal, a "Your Feedback Shapes Our Service" message with aggregate satisfaction score trends shows occupants that responses are read and acted on. Campuses that close the loop publicly — "Your feedback led us to add morning coverage in the Science Building" — see response rates 15–25% higher than those that collect feedback silently.

Start a free trial to configure the full communication sequence.

Close the Feedback Loop

Every Work Order Is a Service Experience. Every Service Experience Deserves a Measurement.

Oxmaint's automated satisfaction survey module sends a 2-question survey when every applicable work order closes, routes low scores to supervisors immediately, and aggregates performance data by technician, building, and category — giving campus facilities the service quality intelligence that annual surveys can never provide.